TL;DR

… and yes, doing a lot of tasks is killing me slowly, which is why I need your help. Please!

Let's start with my problem

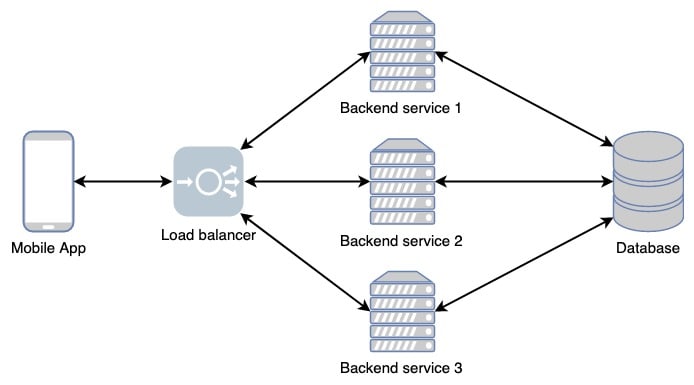

Imagine that I have created a new mobile application. My system consists of a frontend and a backend service that communicate over a REST API. This is my system architecture.

When my product first launches, only a few users use it. My backend service handles the requests well, as expected. My customers are happy because the application is blazing fast.

Now the user base grows as I add more features to my application. My backend service handles more requests than before. The growth in requests starts to impact my backend service and leads to performance degradation. My backend service also goes down at times because it cannot handle all the requests, which means availability is degraded too.

Users start complaining because the application has become slower. They are not happy, and neither am I, as I am afraid of losing users. What can I do to improve my backend performance? What can I do to increase its availability?

There are a few ways to achieve this: revisiting the application's algorithms, changing the programming language, adding more resources to the server, or adding more instances of the backend service. You name it.

In this case, I will choose to add more instances of my backend service, because I do not have enough time to rewrite the backend service.

Introducing Load Balancer

Since I want to run multiple instances of my backend service, I need a mechanism to distribute requests from my mobile application across those instances. This is where I use a load balancer. As its name suggests, a load balancer shares the workload between the backend instances. In my new architecture, the load balancer sits between the mobile application and the backend instances.

The load balancer uses an algorithm to share the workload. There are several algorithms I can choose from, such as round-robin, weighted round-robin, and least-connection. I can choose the algorithm that fits my needs.
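
To make the three algorithms named above more concrete, here is a minimal Python sketch. The class names and the backend names used with them are hypothetical, and real load balancers such as HAProxy or Nginx implement these strategies with many more details (health checks, timeouts, smoother weighting), so treat this as an illustration only.

```python
import itertools


class RoundRobin:
    """Hand out backends in a fixed rotating order."""

    def __init__(self, backends):
        self._cycle = itertools.cycle(backends)

    def pick(self):
        return next(self._cycle)


class WeightedRoundRobin:
    """Like round-robin, but a backend with weight w appears w times per rotation."""

    def __init__(self, weights):
        # weights: dict of backend name -> positive integer weight (assumed input shape)
        expanded = [name for name, w in weights.items() for _ in range(w)]
        self._cycle = itertools.cycle(expanded)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the backend that currently has the fewest open connections."""

    def __init__(self, backends):
        self.active = {name: 0 for name in backends}

    def pick(self):
        # On a tie, the backend listed first wins.
        name = min(self.active, key=self.active.get)
        self.active[name] += 1
        return name

    def release(self, name):
        # Call this when a request to `name` has finished.
        self.active[name] -= 1
```

Round-robin is usually enough when all instances are the same size and requests cost roughly the same. Weighted round-robin helps when some instances are bigger than others, and least-connection helps when some requests take much longer than others.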

In this scenario, each backend instance handles fewer requests than before. Imagine I have an average of around 6000 requests per minute handled by a single backend service. If I share the workload evenly across three instances, each instance handles about 2000 requests per minute. This helps me improve performance and uptime.
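
The arithmetic above can be checked with a tiny simulation. This sketch assumes plain round-robin and three instances with made-up names; real traffic is never this perfectly even, so the result is an idealized figure.

```python
from collections import Counter

REQUESTS_PER_MINUTE = 6000  # figure taken from the example above
INSTANCES = ["backend-1", "backend-2", "backend-3"]  # hypothetical instance names


def distribute(total_requests, instances):
    """Assign request i to instance (i mod N), which is plain round-robin."""
    load = Counter()
    for i in range(total_requests):
        load[instances[i % len(instances)]] += 1
    return dict(load)


load = distribute(REQUESTS_PER_MINUTE, INSTANCES)
for name, count in load.items():
    print(f"{name}: {count} requests per minute")
```

Each of the three instances ends up with 2000 requests per minute, one third of what the single instance handled before.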

There are several load balancer technologies we can use, such as HAProxy, Nginx, and Cloud Load Balancing. You can pick whichever suits you.

Does it only handle that case?

Evidently not! Load balancing is not only useful for handling a high workload. So what else can it do?

Migrating from VM to Container

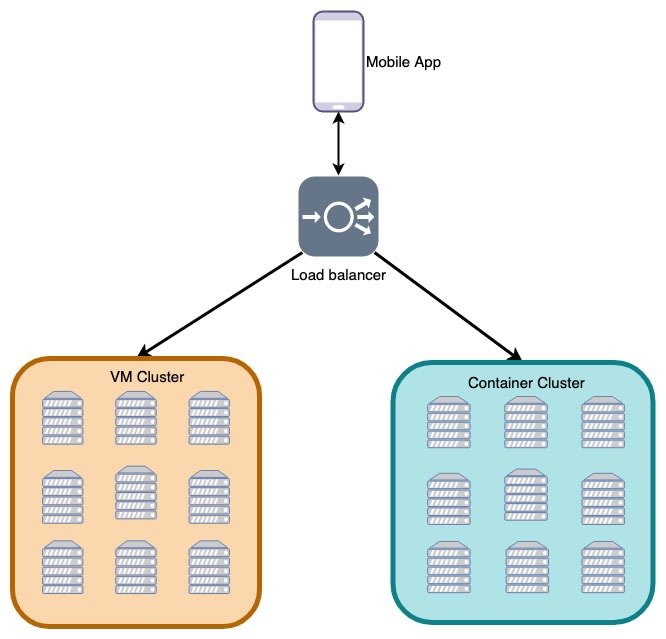

Let’s imagine again that I have services running on virtual machines, but now I want to migrate all my deployments to containers. It sounds easy, because I only need to redeploy all my services using containers and then redirect all the requests to the container cluster. But is that good practice?

That approach is too risky. Imagine that some deployment on the container cluster is not configured well. It can cause downtime. So how can a load balancer help me?

Because moving directly to the container cluster is risky, I can move partially instead. After deploying all services to the container cluster, I want to test it first by sending some requests to it. I can use the load balancer to share the load between the VM cluster and the container cluster. I configure the load balancer to send 20% of requests to the container cluster and 80% of requests to the VM cluster. Using this approach, I can monitor my container cluster to make sure it is configured well. Over time, I can send more requests to the container cluster until I am confident enough to use it for all traffic.
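
The 20%/80% split described above can be sketched as a weighted random choice. This is only an illustration: the function names and the ramp-up schedule are made up for this example, and a real load balancer would express the same idea through its own configuration.

```python
import random


def pick_cluster(container_share, rng=random):
    """Return "container" with probability container_share, otherwise "vm"."""
    return "container" if rng.random() < container_share else "vm"


def simulate(container_share, requests=10_000, seed=42):
    """Count how many simulated requests land on each cluster."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same counts
    counts = {"vm": 0, "container": 0}
    for _ in range(requests):
        counts[pick_cluster(container_share, rng)] += 1
    return counts


# Hypothetical ramp-up: start at 20%, then raise the share as confidence grows.
for share in (0.2, 0.5, 1.0):
    print(f"container share {share:.0%}: {simulate(share)}")
```

At a share of 0.2 roughly one request in five reaches the container cluster, and at 1.0 the VM cluster receives no traffic at all, which is the point where the migration is complete.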

Migrating from Monolith to Microservice

This case is quite similar to migrating from a VM cluster to a container cluster. It is risky to migrate a monolith directly. A load balancer can help me migrate part by part. I can use a load balancer to share requests between my monolith cluster and my microservices cluster. I keep using the load balancer this way until I am confident that the microservices work well. Then I migrate completely.
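
One possible way to migrate "part by part" is to decide per route how much traffic goes to the new microservices. The sketch below is an assumption on my side and not something the setup above prescribes: the route prefixes, the percentages, and the idea of keying the decision on a user ID (so the same user always lands on the same side) are all hypothetical.

```python
import hashlib

# Hypothetical rollout table: route prefix -> percent of users sent to the microservice.
ROLLOUT = {
    "/orders": 100,   # fully migrated
    "/payments": 20,  # still being tested
}


def bucket(key):
    """Map a key to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def route(path, user_id):
    """Return which cluster should serve this request."""
    for prefix, percent in ROLLOUT.items():
        if path.startswith(prefix):
            return "microservice" if bucket(user_id) < percent else "monolith"
    return "monolith"  # routes that are not migrated yet stay on the monolith
```

For example, `route("/orders/1", "user-1")` always goes to the microservice, while `route("/profile", "user-1")` stays on the monolith. Raising a percentage in the table moves more users over without touching the other routes.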

What did we do at Gojek about it?

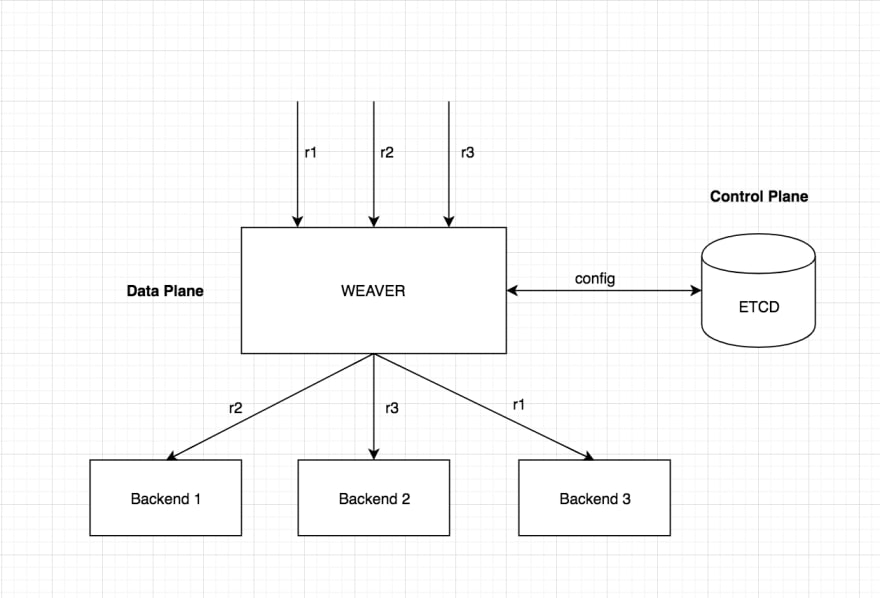

Here at Gojek, we also created our own in-house load balancer, named Weaver. It is an open-source project that you can find here.

Weaver - A modern HTTP Proxy with Advanced features

Description

Weaver is a Layer-7 Load Balancer with Dynamic Sharding Strategies. It is a modern HTTP reverse proxy with advanced features.

Features:

- Sharding request based on headers/path/body fields

- Emits Metrics on requests per route per backend

- Dynamic configuring of different routes (No restarts!)

- Is Fast

- Supports multiple algorithms for sharding requests (consistent hashing, modulo, s2 etc)

- Packaged as a single self contained binary

- Logs on failures (Observability)
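
To give a feel for two of the sharding strategies named in the feature list (modulo and consistent hashing), here is a generic Python sketch. It is not Weaver's implementation or API: the function names, the hash function, and the number of virtual nodes are all assumptions chosen for illustration.

```python
import bisect
import hashlib


def stable_hash(key):
    """Hash a string to an integer that is the same on every run."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def modulo_shard(key, backends):
    """Modulo sharding: hash the key and take it modulo the number of backends."""
    return backends[stable_hash(key) % len(backends)]


class ConsistentHashRing:
    """Consistent hashing: backends and keys share one hash ring."""

    def __init__(self, backends, replicas=100):
        # Each backend is placed on the ring `replicas` times (virtual nodes)
        # so that keys spread more evenly.
        self._ring = sorted(
            (stable_hash(f"{name}#{i}"), name)
            for name in backends
            for i in range(replicas)
        )
        self._points = [point for point, _ in self._ring]

    def lookup(self, key):
        # Walk clockwise to the first backend point after the key's hash,
        # wrapping around to the start of the ring if needed.
        idx = bisect.bisect(self._points, stable_hash(key)) % len(self._ring)
        return self._ring[idx][1]
```

The practical difference shows up when the set of backends changes. With modulo sharding, changing the backend count tends to remap most keys. With consistent hashing, adding a backend only moves the keys that now belong to the new backend, and every other key stays where it was.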

Installation

Build from source

- Clone the repo:

git clone git@github.com:gojektech/weaver.git

- Build to create weaver binary

make build

Binaries for various architectures

Download the binary for a release from: here

Architecture

Weaver uses etcd as a control plane to match the incoming requests against a particular route config and shard the traffic to different backends based on some sharding strategy.

Weaver can be configured for different routes matching…

You can read about why we created it even though there are existing load balancer technologies we could use.

Weaver: Sharding With Simplicity. Unveiling Weaver — GOJEK’s open-source… | by Rajeev Bharshetty | Gojek Product + Tech

Rajeev Bharshetty ・ 6 min read

blog.gojekengineering.com

Sharding 101: The Ways of Weaver. A guide to deploying GOJEK’s… | by Gowtham Sai | Gojek Product + Tech

Gowtham Sai ・ 7 min read

blog.gojekengineering.com

I hope you enjoy reading it!

~CappyHoding 💻 ☕️
