I came across connection pooling recently when I was working on a large scale microservice in Clojure and realised the HUGE impact it has on performance even when you are talking about serving a couple of hundred users.

Having worked a lot in backend why didn’t I know this before? Maybe Django (my favourite backend suite) had abstracted it away in it’s ORM or I didn’t work in codebases where performance was paramount. 😛

But first what is a connection?

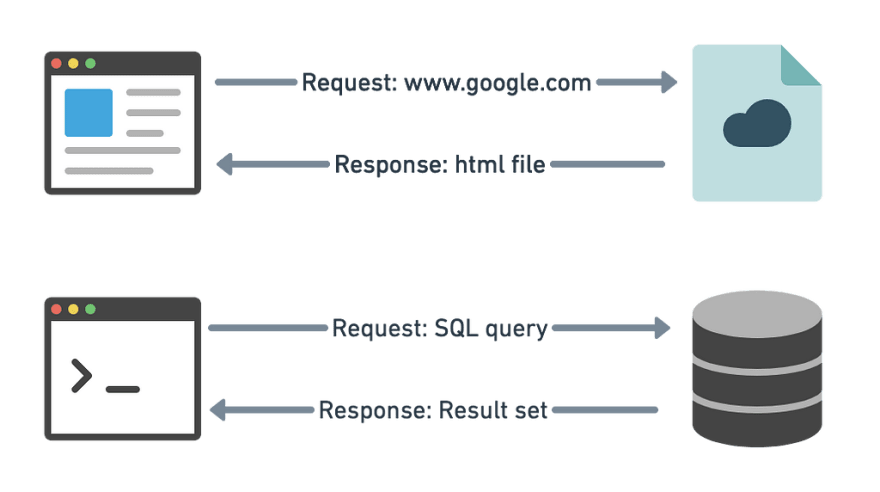

A connection can be formally defined as a way for a client to talk with the server. It is required to send commands and receive a result.

For example, a browser talks to your favourite website using a TCP connection. Similarly a database connection lets you issue commands and receive answers in the form of a result set.

Connection pooling can be applied to any connection like a TCP connection, database connection or even a Redis connection. But in this article, I will be taking focussing on examples about database connections.

What is the problem with normal connections?

Assume we have a Postgres database and you want to run this query on it.

select chairs from dining_table;

To get the result, along with this query we need to provide the database details and our authentication details, usually the host, port, database, username and password. These are needed because before actually running the query, the program has to:

→ find the database

→ connect to it

→ authenticate our connection

→ use the given db

→ and then run our query.

Once the result is returned, the connection is closed. When we want to run a different query we need to start over again.

As you can see we this is time consuming. Authentication is a very expensive process and most of the times creating connections take relatively longer compared to the actual query they run. So it becomes very inefficient for us to create a connection for every query.

So instead of closing the connection, keeping it active and running all the queries using the same connection gets rid of this creation latency. But in a service, how do we share a connection between different clients? That is where connection pooling comes in.

Connection Pooling

Connection Pooling alleviates this problem by creating a pool of connections at the start and keeping them alive (i.e not closing the connections) till the end. Whenever some part of the application wants to query the database, they borrow the connection from the pool and once they are done with the query instead of closing the connection, it is returned back to the pool. As simple as that.

Using this manner, even a small number of connections pooled can serve a large number of requests as the overhead of authentication for every request is eliminated.

There are a lot of amazing connection pooling libraries out there like HikariCP, c3p0 etc. Most of these allow you to configure things like min-thread, max-thread, idle-timeout to make the connection pool suit our needs.

Performance Gains

For you to actually see how much of a difference connection pooling gives, I decided to run a simple select query multiple times in two ways:

- Opening and closing a connection every time to execute the query

- Keeping an active connection and running the query in a loop

I wrapped these two commands in a shell script to benchmark it and ran the script.

The output is shocking indeed. Though both the commands run the same query 100 times, the former loops externally in the shell causing the script to open and close a connection with Postgres every time to get the result.

While the latter opens a connection at start using your authentication credentials and keeps it alive till you close the shell. Every query that is run inside the Postgres shell uses the existing active connection so there is no extra latency other than the time taken for query execution. Thus using the active connection gives you a performance boost of 77x.

Conclusion

To conclude, connection pooling is quintessential to improve a backend service’s performance. It is not only limited to use with database connections but is also used widely in pooling TCP connections when it comes to interacting with other services.

Top comments (1)

so whats the advisable pool size?