This is the third part of a series of posts where we explain Kubernetes concepts using a theme park analogy.

In the first post we covered the basics of a Kubernetes cluster.

In the second post we talked about scaling, affinities and taints.

In this post, we will talk about StatefulSets, Persistent Volumes and Headless Services.

The cloakroom and the cloakroom service

To improve the KubePark experience, you decide to provide a free cloakroom service. You use the same fun ride template to tell your crew to build one cloakroom in the park, you add a new wayfinder colour for it, and visitors happily start using it.

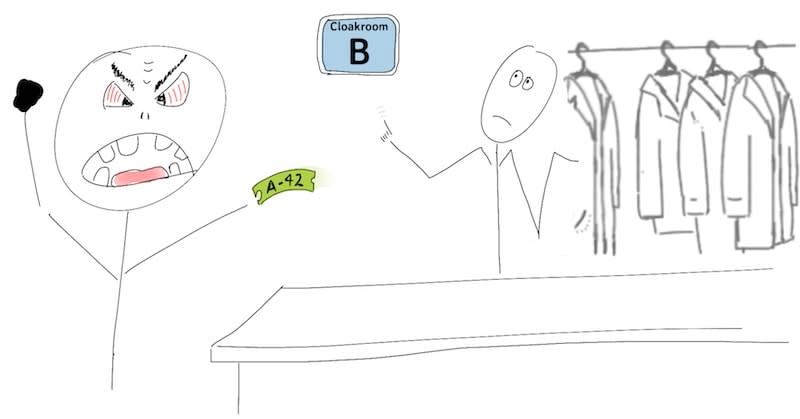

Complaints about lost clothes don't take long to start. It doesn't take you long to realize what is going on once you see your maintenance crew replacing a faulty cloakroom.

Maybe the idea of burning everything to the ground was not without its flaws.

Similarly, when the parcel with the cloakroom becomes unavailable, either due to some flood or because your control crew is reducing the number of rented parcels, all the clothes in that cloakroom are left behind. More lost clothes!

This is obviously not good publicity for KubePark, and your landlord doesn't waste time offering his self storage service (k8s persistent volume), built in a nuclear shelter, as a solution.

The only thing you need to do is specify in the cloakroom's plan how many storage units must be rented, and your control crew will take care of all the paperwork (k8s dynamic persistent volume claim).
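Outside the analogy, the "paperwork" is a PersistentVolumeClaim. Here is a minimal sketch of what such a claim could look like, built as a plain Python dictionary and printed as JSON (which `kubectl apply -f` accepts as well as YAML). The claim name `cloakroom-storage`, the size `10Gi` and the storage class `standard` are made-up values for illustration; the storage classes actually available depend on your cluster.

```python
import json


def cloakroom_storage_claim(name, size, storage_class):
    """Build a PersistentVolumeClaim manifest as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteOnce: the volume can be mounted read-write by one node only.
            "accessModes": ["ReadWriteOnce"],
            # Hypothetical class name; the class decides how the volume is provisioned.
            "storageClassName": storage_class,
            # How much storage to "rent".
            "resources": {"requests": {"storage": size}},
        },
    }


# All three arguments are assumptions made for this example.
claim = cloakroom_storage_claim("cloakroom-storage", "10Gi", "standard")
print(json.dumps(claim, indent=2))
```

The point of the sketch is that you only state what you need (size, access mode, class); finding or creating a matching volume is left to the cluster.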

As the self storage is outside the park, the cloakroom's crew will run to the storage unit to leave and retrieve the visitors' items, making the whole service slower, but at least the items will not get lost.

Problem solved! At least for a short while …

Scaling your cloakroom service

As KubePark gets more popular, the queue to leave clothes becomes unbearable. You panic: there is just one key per storage unit and the key cannot be duplicated. Increasing the number of cloakrooms will not reduce the queue!

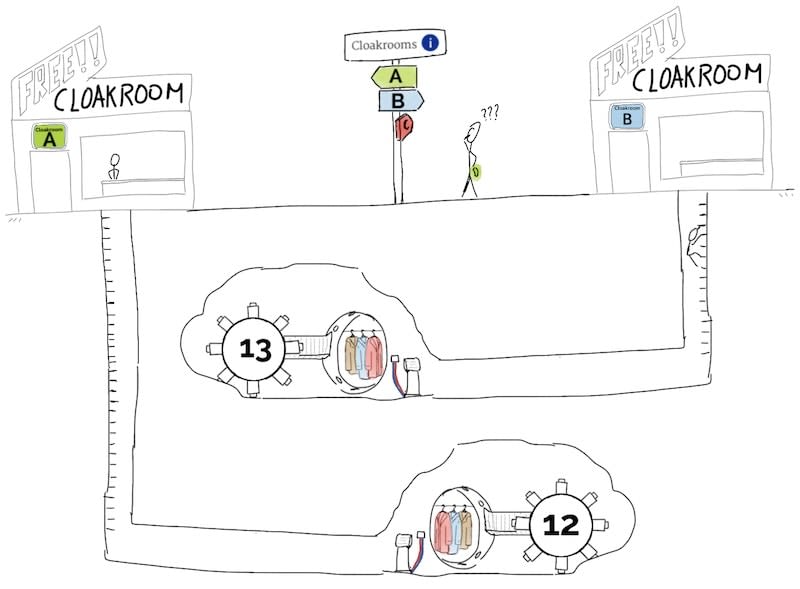

Each cloakroom must have its own storage unit, but this brings a whole new set of problems.

First, visitors will need to redeem the cloakroom's receipt in exactly the same cloakroom that issued it.

Second, if a cloakroom must be moved or replaced for maintenance, the replacement must keep the same name and storage unit key as the cloakroom that it replaced:

- The same name, so that from the visitors' point of view it is the same cloakroom.

- The same storage unit, because otherwise the new storage unit would be empty and the items stored in the previous one would be inaccessible.

This is so complex that it deserves its own attraction-like template (k8s StatefulSets; note that StatefulSets also come with some additional ordering guarantees).
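To make the "same name, same storage unit" rule more concrete: a StatefulSet names its pods `<statefulset-name>-<ordinal>`, and each pod's volume claim is named `<claim-template-name>-<pod-name>`, so a replacement pod with the same ordinal picks up the same claim. The small sketch below only reproduces that naming scheme; the names `cloakroom` and `items` are hypothetical, and the functions are not part of any Kubernetes client library.

```python
from typing import List


def stateful_pod_names(statefulset: str, replicas: int) -> List[str]:
    """Pod names a StatefulSet with this many replicas would use, in creation order."""
    return [f"{statefulset}-{ordinal}" for ordinal in range(replicas)]


def claim_name(claim_template: str, pod_name: str) -> str:
    """Name of the PersistentVolumeClaim bound to a given StatefulSet pod."""
    return f"{claim_template}-{pod_name}"


# "cloakroom" and "items" are example names, not taken from a real cluster.
for pod in stateful_pod_names("cloakroom", 3):
    print(pod, "->", claim_name("items", pod))
# cloakroom-0 -> items-cloakroom-0
# cloakroom-1 -> items-cloakroom-1
# cloakroom-2 -> items-cloakroom-2
```

The ordering guarantee shows up in the ordinals: by default pods are created from 0 upwards and removed in reverse order when scaling down.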

Third, remember that the coloured wayfinder takes visitors to any attraction that matches a tag, but here the visitor must go to the cloakroom that issued the receipt, so the coloured wayfinder is of no use. Even if it is more cumbersome for visitors, you end up installing signposts (k8s headless service) with the name and direction of each cloakroom, so that visitors can find the appropriate cloakroom by themselves.
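In Kubernetes terms, the signposts are DNS entries: a headless service (one with `clusterIP: None`) that governs a StatefulSet gives each pod its own address of the form `<pod-name>.<service-name>.<namespace>.svc.<cluster-domain>`. The sketch below just assembles such addresses; the service name `cloakrooms` is made up for the example, and `cluster.local` is the usual default cluster domain, which your cluster may override.

```python
def headless_service(name, selector):
    """Minimal headless Service manifest: no cluster IP, so DNS points at the pods."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {"clusterIP": "None", "selector": selector},
    }


def cloakroom_address(pod_name, service, namespace="default",
                      cluster_domain="cluster.local"):
    """DNS name under which one specific StatefulSet pod can be reached."""
    return f"{pod_name}.{service}.{namespace}.svc.{cluster_domain}"


# Hypothetical names, chosen only to mirror the cloakroom analogy.
signposts = headless_service("cloakrooms", {"app": "cloakroom"})
print(cloakroom_address("cloakroom-2", signposts["metadata"]["name"]))
# cloakroom-2.cloakrooms.default.svc.cluster.local
```

A visitor holding a receipt from `cloakroom-2` uses that exact address instead of the service-wide one, which is the signpost replacing the coloured wayfinder.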

Now KubePark can have hundreds of cloakrooms. A dream come true.

Is that all?

Almost! There are still some additional concepts to be aware of, but I don't feel they need a KubePark analogy:

- Namespaces, like the usual file system folders.

- DaemonSets, run a pod in each and every parcel, current or future.

- Secrets and ConfigMaps, equivalent to configuration files.

- Jobs, one-off tasks.

- CronJobs, a job with a cron.

- Priorities, to decide what attractions are more important when scheduling or running out of resources.

- Init Containers, to prepare the ground for a pod.

- ReplicationControllers, obsolete, replaced by ReplicaSets.

- ReplicaSets, you can consider them an implementation detail of Deployments.

- Disruptions and rolling update, how many pods can be down at the same time?

- Kubelet/Scheduler/Controller Manager/API server: k8s internals.

And of course, there is still a myriad of details in each of these concepts that you will need to be aware of, but I hope that you now feel a little less lost in the Kubernetes world.

Enjoy your Kubernetes ride!
