You may be using dbt CLI or dbt Cloud for your data pipeline work to Extract, Transform, and Load data into a warehouse by creating dynamically architected databases on a scheduled basis. As a fledgling data testing engineer, I learned a few critical things about using dbt native tests to validate dynamically created schemas, sources, and models within the dbt data architecture framework.

Let's take a look at four big facts I wish I knew when I got started with dbt testing. We're only covering the basics of testing a model and its sources using dbt's data and schema testing. In the future we'll look at testing other parts of the dbt architecture.

1. Schema.yml File Can Only Be Present in dbt's Models Folder

If you start with a basic schema test in dbt it's good to know you can't move the schema.yml file to the Tests folder. I was curious if this worked, but it does not. Schema tests are great because you can create something like a not_null or unique test that checks one of your columns for null values in a matter of seconds. It's easy to write this kind of unit test just to check columns inside your models.

Check out this example of some model schema tests:

And this is a look into source schema tests:

2. You Have to Think "Backwards" for dbt Data Tests

This is one that really got me at first. There is a strict rule in dbt data tests that says they must return zero rows in order to pass. Instead of searching for a value such as the sum of a specific set of rows, the data test must be written in such a way that it expects to find zero rows if the results are not equal to the correct sum value.

Think "backwards" when you're writing a data test: how can I return 0 rows in this case, yet still check the number I need to validate? Generally you'll find that != or <= work in most data test cases. I've included an example here to show a data test that makes sure there is a row count in an example Users table created by a dbt model.

3. Increase Your Thread Count for Maximum Test Speed

Time matters in automated testing, and the goal of writing dbt schema and data tests is to be an integrated part of the data architecture. This way every time the developers run their models, the tests are ready to go and provide checkpoints during development. That's why timing is so critical. If a dbt model takes an hour to compile, you do not want to add 30 minutes of testing to the run.

Increasing the thread count value in the profile your project is using, within the profiles.yml file, can help immensely. Minutes become seconds, and seconds become half-seconds, the more threads you can add. For example, if I have 30 tests, I may want 40 threads defined in my profiles.yml file. This helps run 30 data and schema tests in 4 seconds, as a quick example in my experience. So, increase that thread count!

4. Give Your Tests Meaningful Names

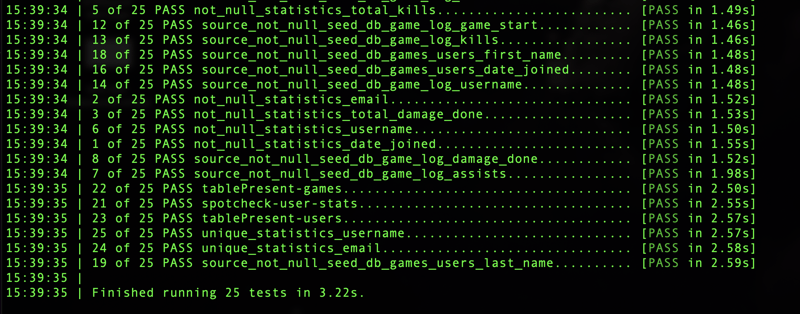

This is important because it will be hard to distinguish which test passed or failed while the tests are being executed if your data test names are unclear. When you run 'dbt test' all your schema and data tests will run together. You cannot simply run one directory within the data tests folder, you may either use 'dbt test --schema' or 'dbt test --data' and that is really the only way to differentiate which tests you'd like to run.

Point being, because dbt doesn't give us much control over running smaller test sets, we need to be able to see all the different names of the tests that are running. As you can see in this picture of some dbt tests running, dbt will automatically name the schema tests, but you can label your data tests with useful, consistent names such as "tablePresent".

Outro

Nice job, we made it to the end! I hope these quick facts about dbt testing are helpful for you. If you'd like to see the code in action, please feel free to clone my dbtTestExamples repository on Github and learn how to connect a dbt model and tests to a Google Big Query instance.

Happy Testing!

Top comments (0)