Not long ago, my team completed the migration of all our services to .NET Core. One of them was a mission-critical legacy Windows Service built on the full .NET Framework, and in this post I would like to share how we completed that migration.

We set three simple constraints for this migration. Changes should be safe (not break existing behaviour), incremental (keep each changeset small enough to revert easily, rather than doing a Big Bang Release, or BBR) and non-blocking (not hold up critical business features). All the while, the team should be aware of the effort and be able to pick up the thread at any point!

To meet these constraints we decided to use the Mikado technique, which lets you scope the work and control risk better than a Big Bang Release does.

What is the Mikado technique?

The key idea behind the Mikado technique is to first identify the goal of the refactoring, e.g. "Migrate the app to .NET Core". You then make the most obvious change that leads to that goal, e.g. "Change the target framework of the project to .NET Core", and see what fails to compile or which tests fail. If there are build errors, e.g. some third-party package is incompatible with .NET Core, you have identified things you need to do before you can change the target framework. You then revert all the changes made up to this point and start by fixing the issues reported in the build errors first, e.g. find a compatible package version and install that!

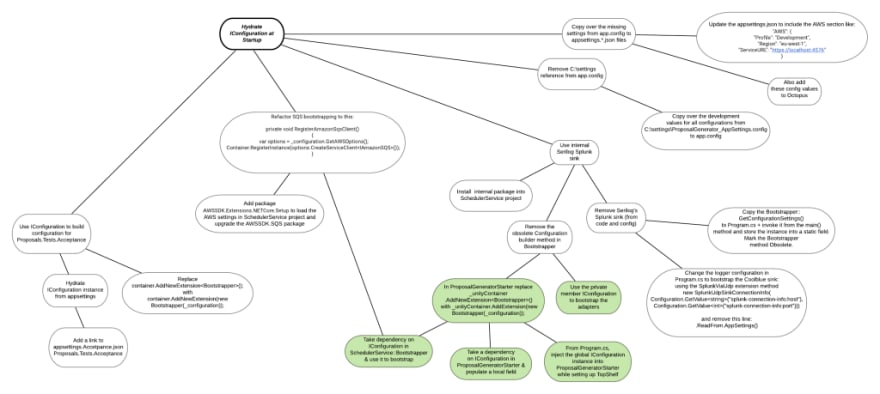

Repeat this process and, at each step, identify dependencies and add them to a graph as nodes until you reach leaf nodes, i.e. nodes that have no further child nodes (dependencies).

You then start the actual refactoring at these leaf nodes and work your way up to the ultimate goal. At each step you should run your tests and, if everything still works, mark the node(s) as done and commit the changes to source control (incremental). You should also be able to deploy these changes at any time without breaking the existing behaviour (safe) or revert the change if a business-critical feature takes priority (non-blocking).
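The leaf-first order described above can be sketched in a few lines. This is only an illustration in Python, not the tooling we used: the node names are hypothetical examples, and `MIKADO_GRAPH` and `leaf_first_order` are names made up for this sketch. Each node maps to the prerequisites that were discovered when the change was attempted and then reverted.

```python
from typing import Dict, List

# Hypothetical Mikado graph: node -> prerequisites found by trying the
# change, watching what breaks, and reverting.
MIKADO_GRAPH: Dict[str, List[str]] = {
    "Migrate the app to .NET Core": ["Change the target framework"],
    "Change the target framework": [
        "Install a compatible package version",
        "Replace the configuration system",
    ],
    "Replace the configuration system": ["Install a compatible package version"],
    "Install a compatible package version": [],  # leaf: no prerequisites
}


def leaf_first_order(graph: Dict[str, List[str]], goal: str) -> List[str]:
    """Return the nodes so that every prerequisite comes before its dependant."""
    done: List[str] = []
    in_progress = set()

    def visit(node: str) -> None:
        if node in done:
            return  # a shared prerequisite is only worked on once
        if node in in_progress:
            raise ValueError(f"cycle detected at {node!r}")
        in_progress.add(node)
        for prerequisite in graph.get(node, []):
            visit(prerequisite)
        in_progress.discard(node)
        done.append(node)  # all prerequisites are done, so this node is next

    visit(goal)
    return done


if __name__ == "__main__":
    for step, node in enumerate(leaf_first_order(MIKADO_GRAPH, "Migrate the app to .NET Core"), 1):
        print(step, node)
```

Each entry in the resulting list is a candidate for one small commit: make the change, run the tests, mark the node as done, and move on to the next one.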

An important thing to keep in mind is that the Mikado graph serves as a communication tool as well as a refactoring tool: the artifact of the process is a graph that captures the changes to be made in order to reach the ultimate goal. Your team can then use this graph to pair or mob program, or even just to pick up where you left off. It also gives a good indication of the progress of a refactoring effort, thus reducing the “holiday factor” (or “bus factor” if you must be dark and grim).

How much detail you put in individual nodes is up to you; the important thing is that each node communicates the change clearly to your team members (and yourself). In some cases, we also added code screenshots to the diagram to help reference key pieces of code. Whatever works for you!

Migrating to .NET Core

Because doing this with just one Mikado graph would, in our case, have been akin to a BBR, we decided to break up the effort into 4 distinct phases, each with its own Mikado graph:

- Consolidate external package dependencies across the solution: a fairly isolated job in which package dependencies can be upgraded or consolidated. The advantage of separating this into its own phase is that breaking changes can be addressed with more peace of mind: nothing outside this phase has changed, so if anything does go wrong, the revert surface area is small.

We took a very pragmatic approach here: we retained what might be considered “old fashioned” libraries, e.g. the Unity DI container and Topshelf, as long as they were compatible with .NET Core. The availability of shinier packages is not a good enough reason to switch. Switching introduces unnecessary risk, increases the scope of the work and goes against both the safe and incremental constraints of such an effort.

Once the .NET Core migration is done, we might very well plan in another scoped refactoring to migrate to more modern libraries but that will be a Mikado Graph of its own. You see how this technique can help control risks by scoping?

- Simplify and unidirectionalise inter-project dependencies: we drew out the dependency diagram of all the solution’s projects, which helped us see the spaghetti mess a lot more clearly. Many projects had a direct reference to another project as well as transitive references to it. Several projects also had their own references to the same 3rd party packages, e.g. Newtonsoft.Json was referenced in multiple projects when it could easily be referenced once in a shared project instead. Keeping such common packages in one location makes upgrading them easier.

- Migrate from `System.Configuration` to `IConfiguration`: swapping out the configuration system was probably the trickiest part because it required changes throughout the solution. The service follows the Ports and Adapters architectural style, so each adapter has its own configuration that it hydrates from the configuration system at bootstrap. This phase also had a high failure impact, because you usually don’t catch configuration errors until it’s too late. So we tested this part extensively, by writing automated verification tests and by running the service locally before merging the changes.

In the Mikado graph sample shown below, you’ll notice that it’s made up of several sub-graphs and that some of the nodes have common dependencies. Common dependencies are usually an indication of design flaws in the code, but on the upside, solving one such dependency unlocks two other branches. We also tackled these sub-graphs in an order that made sense for us, e.g. we picked the most isolated ones first and then worked towards the ones with more impact. Whenever additional refactoring was necessary to make forward progress, we did it!

- Upgrade target framework to .NET Core (for executable projects) and .NET Standard (for library projects): probably the least painful part of the whole exercise, because we had done phases 1 to 3 first, so this was just about changing the target framework moniker from `net461` to `netcoreapp3.1`.
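For the second phase in the list above, spotting direct references that are already available transitively can be automated once the dependency diagram is written down as data. The following is a minimal sketch in Python, not the tool we used: the project names are hypothetical, `REFERENCES`, `reachable` and `redundant_references` are names made up for this illustration, and it assumes the reference graph has no cycles.

```python
from typing import Dict, Set

# Hypothetical solution: project -> projects it references directly.
REFERENCES: Dict[str, Set[str]] = {
    "Service.Host": {"Service.Adapters", "Service.Core", "Service.Shared"},
    "Service.Adapters": {"Service.Core", "Service.Shared"},
    "Service.Core": {"Service.Shared"},
    "Service.Shared": set(),
}


def reachable(graph: Dict[str, Set[str]], start: str) -> Set[str]:
    """All projects reachable from `start` by following references."""
    seen: Set[str] = set()
    stack = list(graph.get(start, ()))
    while stack:
        project = stack.pop()
        if project not in seen:
            seen.add(project)
            stack.extend(graph.get(project, ()))
    return seen


def redundant_references(graph: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Direct references that are also reachable via another direct reference."""
    redundant: Dict[str, Set[str]] = {}
    for project, direct in graph.items():
        extra = {
            target
            for target in direct
            if any(target in reachable(graph, other) for other in direct if other != target)
        }
        if extra:
            redundant[project] = extra
    return redundant


if __name__ == "__main__":
    for project, extra in sorted(redundant_references(REFERENCES).items()):
        print(project, "->", sorted(extra))
```

Each reported reference is only a candidate for removal. Whether it can actually go depends on how the projects consume each other, so every removal still deserves its own node in the Mikado graph and its own test run.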

An example graph for phase 3 looked like this:

Throughout each phase, we tested the changes locally, constantly pushed to the main branch and CI/CD-d into production. Once deployed, we did several test runs in a pre-production (Acceptance) environment to make sure that no runtime exceptions or crashes had sneaked into the hosted environment. Because our service only runs between 8AM and 10AM, we have a bit of breathing room outside these hours to run in reduced availability mode if we need to and test stuff out. If we had higher availability requirements, meeting the safe and incremental constraints of the effort would become even more crucial!

The end results of this process were:

- Not one Big Bang Release

- Team was fully aware of what’s already done and what’s remaining

- We completed the migration within the planned timeframe

- Changes were always safe and incremental

- We migrated the service to .NET Core without a single incident.

After this service, we also applied this technique to other services that were relatively simpler to migrate and ended up with the same results. Bottom line: migration completed well within the timeframe without a single outage.

If you haven’t tried the Mikado technique before, I would highly recommend it!
