If you're writing code for one of the many companies out there that care about security, there's a chance you've heard these acronyms before. If you haven't, I'll prepare you in advance for when that happens and try to explain, as well as I can, what they mean and what they do.

What's AppSec Testing?

Application security testing is the process of looking for potential security vulnerabilities in applications. The purpose is to identify them as early as possible in the SDLC, to reduce the remediation costs. Patching issues in a production app is usually much more expensive than fixing them while you're writing the code. There are several approaches that people employ to handle this problem. In this post, I'll do a quick run through them. If there's interest, I'll do a follow-up with pros and cons.

Penetration Testing

Despite what some might find a funny name [I often get giggles from the audience when I say this one, for some reason], this is one of the oldest methods of testing applications. OLD != bad.

Pen Testing is usually performed by one or more ethical hackers who will try to infiltrate a system. When directed at applications, this could start from:

- No Knowledge of the app - BlackBox Testing

- Some knowledge of the app and how it works - GrayBox Testing

- Complete knowledge of the application and access to code, credentials, etc - WhiteBox Testing

_Remember these terms: White, Gray and Black. They apply to other areas of security, such as White/Gray/Black Hat hackers, and even to other AppSec testing techniques._

The main benefit of Pen Testing is the thoroughness you can get, but it can be slow and good hackers don't come cheap.

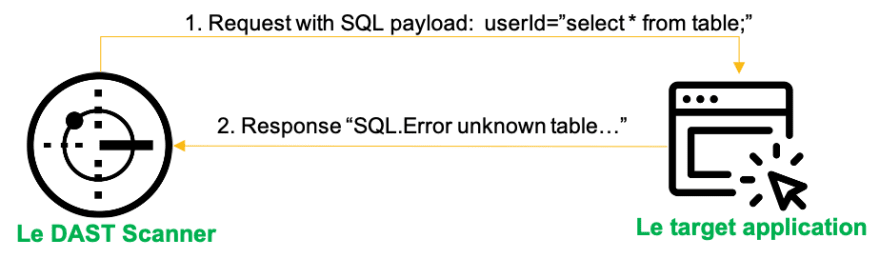

DAST

Known as Dynamic Application Security Testing, this is the oldest form of automated security testing.

The story goes that a long time ago a Web Application Firewall (WAF) company was looking at ways to prove the value of their product. A WAF is supposed to block external attacks. To show the value of their product, some engineers wrote a little tool that would find some of these vulnerabilities in web applications and show how they can be exploited, and then they would deploy the WAF to show how effective it is. Well, during one demo a customer said, "Your WAF's all nice'n all, but what about that other tool that finds the issues? I want that one."

This is how DAST came to be. It is a BlackBox (some would say Gray at times) scanner that can test Web Applications and APIs. The important thing to understand is that it behaves like a "hacker in a box". It contains a series of tests that can reveal potential weaknesses in the tested applications. The testing is request based, meaning that the tool will first map out your app or API using various techniques and then send a LOT [and I mean A LOT!] of tests to the targeted app. Based on the response of the app, the tool can then decide if there's something to be worried about or not.
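To make the "send a test, look at the response" idea a bit more concrete, here is a minimal sketch. Everything in it is made up for illustration: `toy_app` stands in for a deployed web app (a real DAST tool would send actual HTTP requests), and the two payloads are just samples of the kind of input a scanner might try.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for a deployed web app: takes query parameters and
# returns an HTML body. A real DAST tool would send HTTP requests instead.
def toy_app(params: Dict[str, str]) -> str:
    name = params.get("name", "")
    # Deliberately vulnerable: the input is echoed back without escaping.
    return f"<h1>Hello {name}</h1>"

# Illustrative payloads only; real scanners ship with far more of them.
PAYLOADS: List[str] = [
    "<script>alert(1)</script>",
    "'\"><img src=x onerror=alert(1)>",
]

def scan(app: Callable[[Dict[str, str]], str], param_names: List[str]) -> List[dict]:
    """Send every payload to every parameter and judge the response."""
    findings = []
    for param in param_names:
        for payload in PAYLOADS:
            body = app({param: payload})
            # Black-box decision: the payload came back unmodified,
            # so the page may be open to reflected XSS.
            if payload in body:
                findings.append({"param": param, "payload": payload})
    return findings

findings = scan(toy_app, ["name", "page"])
for finding in findings:
    print(f"possible reflected XSS via '{finding['param']}' using {finding['payload']}")
```

Notice that the scanner never looks at the code of `toy_app`. It only knows which parameter and payload produced a suspicious response, which is also why it can't point at the offending line.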

The main benefit of this technique is that it works for most web applications (mostly HTTP focused though), the findings are fairly accurate and it can test both production and staging applications.

On the downside, automation is possible, but because everything is request based, this is the slowest form of automated testing. With modern cloud hosting for apps, there's at times a need to notify providers that testing is being done, so your tests don't get caught by the cloud provider's firewall. Pen Testers also leverage DAST to do quick checks of the apps before they start going for more advanced testing techniques. Developers are not super excited about the findings because they don't directly point to the vulnerable line of code or construct.

SAST

The next technology that came to market was Static Application Security Testing, which abbreviates to SAST.

SAST is a white box scanner. SAST tools look for potentially dangerous patterns in your application code, bytecode or binaries, and use them to highlight findings that will be of interest (e.g. user input gets appended to a database query unsanitised). You probably noticed I said "potentially" and "highlight findings". The reason I phrased things this way is that SAST is mostly theoretical analysis. It doesn't test the way DAST does, by sending requests; it mostly analyses code patterns.
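As a rough illustration of what "analysing code patterns" can mean, the sketch below uses Python's standard `ast` module to flag `execute()` calls whose query is built by string concatenation or an f-string. The sample source and the single rule are assumptions made up for this example; real SAST tools track data flow across functions and files and have far richer rule sets.

```python
import ast

# Sample code under review (hypothetical). It is only parsed, never executed.
SOURCE = '''
def find_user(cursor, username):
    cursor.execute("SELECT * FROM users WHERE name = '" + username + "'")

def find_user_safe(cursor, username):
    cursor.execute("SELECT * FROM users WHERE name = ?", (username,))
'''

def find_suspect_queries(source: str) -> list:
    """Return line numbers of execute() calls with a dynamically built query."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if not (isinstance(func, ast.Attribute) and func.attr == "execute"):
            continue
        if not node.args:
            continue
        query = node.args[0]
        # String concatenation (BinOp) or an f-string (JoinedStr) suggests
        # that data is being spliced into the query text.
        if isinstance(query, (ast.BinOp, ast.JoinedStr)):
            findings.append(node.lineno)
    return findings

suspect_lines = find_suspect_queries(SOURCE)
print(suspect_lines)
```

The rule flags the concatenated query and leaves the parameterised one alone, but it has no idea whether `username` really comes from a user. That judgement is left to whoever reviews the finding.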

When reviewing the "code" [code, bytecode or binaries], SAST tools lack the context of where the application is running or what it's trying to do. This means that a finding that can be a vulnerability for some could be intentional behaviour for others. One example would be if you're writing a banking app: you don't want to allow customers to run their own SQL queries. If there are patterns that show this behaviour as possible, the tools will flag them as SQL Injections, and rightly so. However, if you're writing a back-end tool that allows you to easily work with SQL DBs and build your own queries, this behaviour is correct, but the tool will not be able to tell the difference. It is up to you to decide if a "finding" is a vulnerability or not.

However, the main benefit of this technique is that once you get past the initial hurdle of deciding what's of interest to you, it is very easy to automatically scan your applications during build or even from the IDE. SAST tools also integrate with many tools regularly found in development, so using them is usually just a case of setting up some connectors before you get started. A lot of developers also like the fact that it points directly to the suspect line of code or construct.

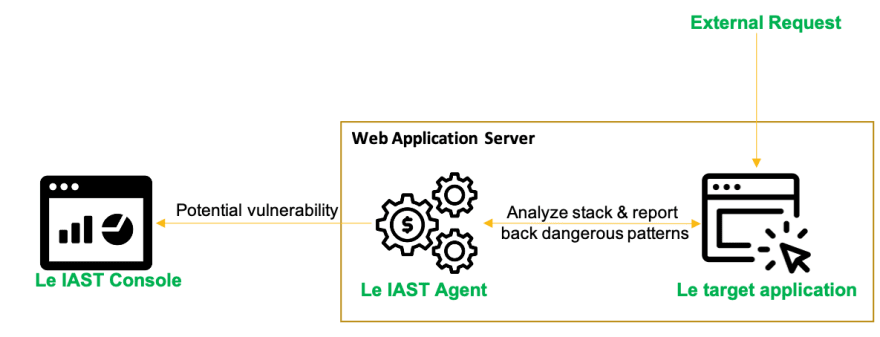

IAST

Interactive Application Security Testing (IAST) is an interesting one. It is a hybrid of sorts between Static and Dynamic, while at the same time it's not quite testing; it's more of an analysis, like SAST. You can see this one as a gray box testing technique. This technology has been on the market in various forms for a while now. The first iteration was closely tied to DAST. IAST agents would be deployed on application servers, and when a vulnerability was reported by the DAST scanner, the IAST agent would return the stack, files and line number to help you link the DAST issue to the code. A nice addition to DAST, but the scan times were quite long due to the nature of DAST.

Modern iterations of IAST work similarly, but there is a significant difference. There is no longer a need for a DAST tool to trigger the analysis. Modern IAST agents can simply be deployed on an application server, and during functional, QA and other types of testing, they can see the data passing through the application and decide if the pattern followed by that data is dangerous or not. This is pretty similar to the logic used by SAST tools. If data comes from the user and gets appended to a SQL query, this could be dangerous. As long as there are requests that trigger the application to perform various actions, the IAST agent can investigate them and tell you if they're dangerous or not.
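One way to picture what such an agent does is runtime taint tracking: mark data as "came from the user" when it enters the app, and check for that mark when it reaches something sensitive like a SQL query. The sketch below is a toy version of that idea, not how any particular IAST product works. `Tainted`, `read_request_param` and `execute_query` are hypothetical names invented for the example, and the taint only survives `+` concatenation.

```python
class Tainted(str):
    """A string that remembers it came from user input (hypothetical helper)."""

    def __add__(self, other):
        # tainted + anything stays tainted
        return Tainted(str.__add__(self, other))

    def __radd__(self, other):
        # plain string + tainted is tainted as well
        return Tainted(str.__add__(other, self))

findings = []

def read_request_param(value: str) -> Tainted:
    """Source: the agent marks anything arriving from a request as tainted."""
    return Tainted(value)

def execute_query(query: str) -> None:
    """Sink: the agent inspects the query just before it would run."""
    if isinstance(query, Tainted):
        findings.append(query)
    # A real application would hand the query to the database here.

def lookup_user(username: str) -> None:
    execute_query("SELECT * FROM users WHERE name = '" + username + "'")

# Simulate the flows that functional/QA tests would trigger.
lookup_user(read_request_param("alice"))  # user-controlled data reaches SQL
lookup_user("system")                     # internal constant, not tainted

print(findings)
```

Only the flow that was actually exercised with user data gets reported, and the finding contains the data from that request.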

This technique can be very fast and works great with APIs. However, it's only as good as your functional/QA testing. If your testing is not up to speed, you will not get a lot of value from it, since you need to trigger those flows for them to be investigated. One important thing to note: while you might think, "Hey, I can run this in production and see if something's wrong there", keep in mind that the results will usually show you the requests that caused the issue. Using this in production environments could easily breach various privacy laws.

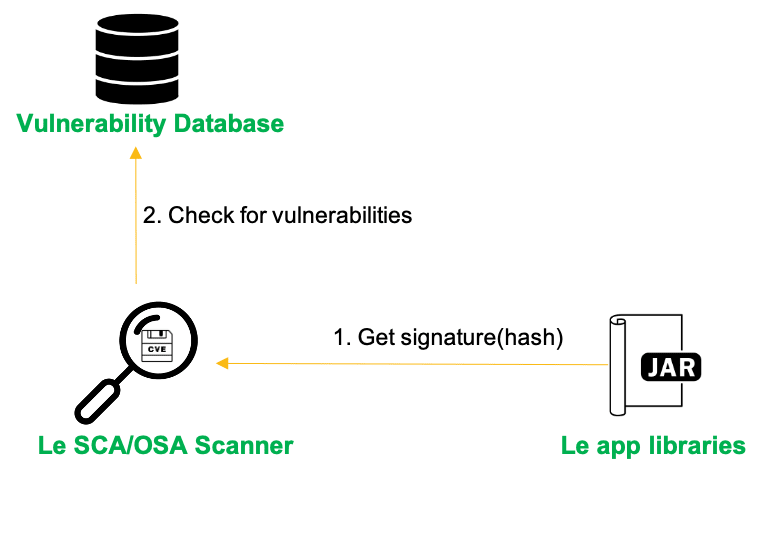

SCA/OSA

Software Composition Analysis (SCA) or Open Source Analysis (OSA) is about testing the 3rd party components/libraries used in your applications. I'd say this is a pretty straightforward testing approach that a lot of people understand. Vulnerabilities show up in 3rd party libraries all the time. As researchers find more and more vulnerabilities, it's hard to keep track of all your libraries and review the security bulletins for all of them. Most of the time, as a developer, you'll find something you need to use and import it with your dependency manager of choice. To solve this, OSA will check a lot of security bulletin databases, some public, some private, and let you know of any potential vulnerabilities in the libraries you are using. A popular one you might want to check, to see what these things look like, is NVD (the National Vulnerability Database).
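At its core, the check boils down to comparing the versions you depend on against a list of known advisories. Here is a minimal sketch of that comparison. The package names, advisory IDs and versions are all invented for the example, and the version parsing assumes simple dotted numbers, which real tools can't rely on.

```python
from typing import Dict, List, Tuple

def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '1.2.3' into (1, 2, 3). Real version schemes are much messier."""
    return tuple(int(part) for part in text.split("."))

# Hypothetical advisory data: package -> [(advisory id, first fixed version)]
ADVISORIES: Dict[str, List[Tuple[str, str]]] = {
    "examplelib": [("EXAMPLE-2024-0001", "2.4.1")],
    "fakeparser": [("EXAMPLE-2023-0042", "1.0.5")],
}

# Hypothetical dependency list, as a dependency manager might report it.
DEPENDENCIES: Dict[str, str] = {
    "examplelib": "2.3.0",
    "fakeparser": "1.2.0",
    "otherlib": "0.9.0",
}

def audit(deps: Dict[str, str], advisories: Dict[str, List[Tuple[str, str]]]) -> List[str]:
    """Report every dependency that is older than the version fixing an advisory."""
    reports = []
    for name, installed in deps.items():
        for advisory_id, fixed_in in advisories.get(name, []):
            if parse_version(installed) < parse_version(fixed_in):
                reports.append(
                    f"{name} {installed}: {advisory_id}, upgrade to {fixed_in} or later"
                )
    return reports

reports = audit(DEPENDENCIES, ADVISORIES)
for line in reports:
    print(line)
```

Because this is mostly a lookup, it runs quickly, and the same report can tell you which version to move to.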

The benefit of SCA/OSA scanners is that they are very fast and they can keep you up to date on the latest vulnerabilities in the libraries you are using. What's more, some tools will even help you move (some pretty much automatically) to a version with less risk and fewer vulnerabilities, without you having to do any code refactoring to cope with new/deprecated APIs.

Another benefit of this technology is that such scanners can check the licenses attached to each library. This will make it easier for you to stay out of trouble for using a library with a license that could have a negative impact on you. There are several approaches here: some do this as a stand-alone scan, some do it at the same time as SAST, and some even check if you're actually using the component reported as vulnerable. There are quite a few tools with cool features to choose from.

End of

So, to summarise, I'll use the car service approach:

Pen Testing - Intensive servicing work done by the Mechanic

DAST - Mechanic listening to your car to see what sounds off or maybe doing a very quick drive

IAST - Connecting your car to a diagnostics system to see what goes on when you drive it

SAST - Reviewing the car blueprints to see design flaws

OSA/SCA - Checking the parts you put in your car are not broken/poor quality
