Overview

One of the topologies available in a multi-region deployment of CockroachDB is known as Follow the Workload. With this topology pattern, CockroachDB can deliver fast reads in the region where most of the read requests originate. This post discusses the implementation details and demonstrates how to verify the performance of the Follow the Workload topology.

Follow the Workload is appropriate for a workload running in an active-everywhere deployment across geographically distributed regions. A quick review of a few key architectural choices in CockroachDB will be helpful for this discussion.

CockroachDB Basics

CockroachDB is a massively scalable, SQL-compliant, distributed database. Data is stored in a monolithic sorted key-value store, which is broken up into manageable chunks called ranges. In a multi-region deployment, CockroachDB distributes the replicas of each range across regions to ensure availability and survivability. For each range, one replica holds the range lease and is known as the leaseholder; it is typically also the range's Raft leader. The leaseholder serves reads and coordinates writes for the data in its range, which allows CockroachDB to deliver globally consistent ACID transactions. The Follow the Workload topology lets CockroachDB optimize the placement of these leaseholder replicas to realize performance gains.

Follow the Workload in Action

In this example, I deployed a multi-region CockroachDB cluster across the United States using three AWS regions. The TPC-C workload is generated in only one region and is load balanced across the nodes in that region. This configuration mimics a follow-the-sun workload, in which a different region sees the majority of the traffic at different times of day. The database was configured with a replication factor of 3, and CockroachDB automatically placed one replica in each region based on the locality flags set on each node in the cluster. The leaseholders were not pinned to any specific region, so the database is free to choose which region each leaseholder resides in.
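
As a rough sketch of how this setup could be checked from a SQL shell: SHOW LOCALITY reports the locality of the node the session is connected to, and a zone configuration statement sets the replication factor. The database name tpcc matches the workload used here. Setting num_replicas explicitly is an assumption on my part, since 3 is also CockroachDB's default.

```sql
-- Locality of the node this session is connected to
-- (the value passed via the --locality flag when the node was started).
SHOW LOCALITY;

-- Assumption: set the replication factor explicitly; 3 is also the default.
ALTER DATABASE tpcc CONFIGURE ZONE USING num_replicas = 3;
```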

The expectation is that over time the Follow the Workload topology will kick in and that all leaseholders for the ranges being accessed by the workload would move to the region where the workload was being generated. This has the positive effect of lowering read latencies since leaseholders would be in the local data center and not require communication with nodes outside the local region to satisfy the read query. It should be noted that writes will still require communication with a quorum of replicas and incur inter-region latency. Using the command

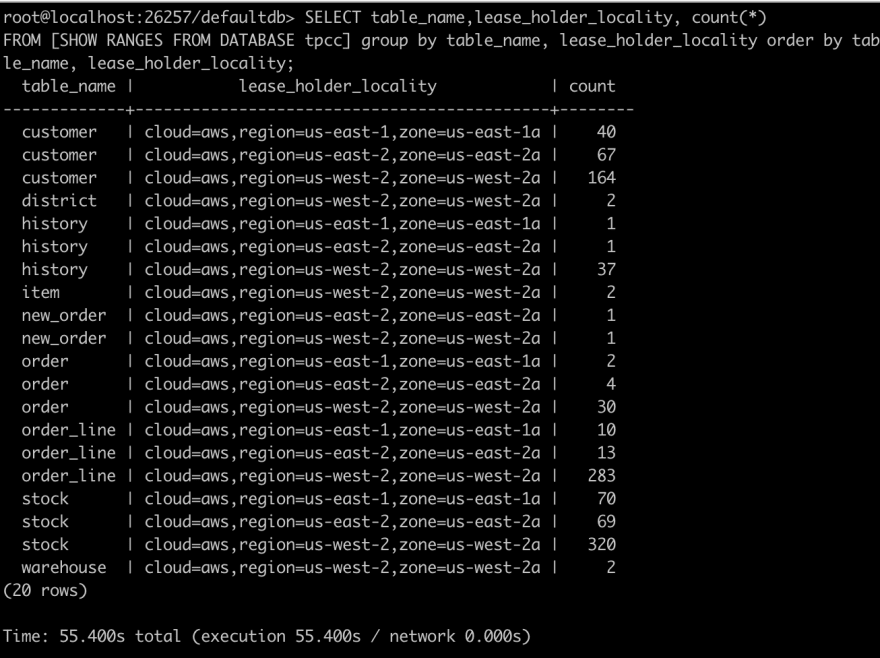

SELECT table_name,range_id,lease_holder_locality, count(*)

FROM [SHOW RANGES FROM DATABASE tpcc] group by table_name, lease_holder_locality order by table_name, lease_holder_locality;

We can see the initial distribution of leaseholders looks like the following

With the default CockroachDB configuration, I observed that leaseholders did move to the region local to the workload. Soon, however, the nodes in that region were consuming more resources than the others, and CockroachDB tried to balance the load by moving leaseholders back out of the local region. We can alter this behavior by changing the following cluster settings.

set cluster setting kv.allocator.lease_rebalancing_aggressiveness = 10;

set cluster setting kv.allocator.load_based_rebalancing = 0;
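
To confirm that the changes took effect, and to restore the defaults once the experiment is over, something like the following should work. This is a sketch rather than part of my original test run; RESET CLUSTER SETTING returns a setting to its default value.

```sql
-- Inspect the current values of the two settings.
SHOW CLUSTER SETTING kv.allocator.lease_rebalancing_aggressiveness;
SHOW CLUSTER SETTING kv.allocator.load_based_rebalancing;

-- Restore the defaults when the experiment is finished.
RESET CLUSTER SETTING kv.allocator.lease_rebalancing_aggressiveness;
RESET CLUSTER SETTING kv.allocator.load_based_rebalancing;
```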

With these settings, I got the Follow the Workload results I wanted. My test data set was 400 GB, and after 30 minutes of the steady, random TPC-C workload, all leaseholders had moved to the data center local to the workload, as seen in the chart below.

Running the SHOW RANGES query again confirms that all leaseholders did in fact end up in the west region, where my workload was running.
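
For a more compact check, a variation on the same query can aggregate by locality only, so the result has one row per region instead of one row per table and region. This is a minimal sketch that assumes the same SHOW RANGES columns used earlier; the leaseholders alias is my own.

```sql
-- One row per locality, with the number of leaseholders it currently holds.
SELECT lease_holder_locality, count(*) AS leaseholders
FROM [SHOW RANGES FROM DATABASE tpcc]
GROUP BY lease_holder_locality
ORDER BY leaseholders DESC;
```

If Follow the Workload has fully converged, the region generating the workload should account for all of the rows counted.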

There are a couple of considerations worth mentioning if you intend to use Follow the Workload. First, keep in mind that we turned off the cluster setting that lets CockroachDB rebalance leaseholders based on load. You need to test thoroughly and confirm that your cluster is right-sized to support having all the leaseholders in one region. Second, since the nodes holding the leaseholder replicas shoulder the burden of coordinating read and write requests, you will have to monitor carefully that those nodes do not become saturated, especially in failure situations where you may lose a node.

In summary, the Follow the Workload topology can be a useful tool to optimize performance for applications where load can shift to geographically disparate regions throughout the day. I hope you found this useful!
