Follow me on Twitter, happy to take your suggestions on topics or improvements /Chris

I've written this for those of you who just parachuted into a project that starts on Monday. It's Friday afternoon, you've got 2 hours to spare and you're like, crap. I feel you, I've been there countless times. Don't worry, by the time you've read this article you will know more about what goes on in the code, about Docker, and especially about what they are talking about in the meetings.

TLDR; this article is meant as a build-up to this 5 part series on Docker. The idea is to convey how the wrong mindset can stop you from learning Docker properly. I convey this by telling the story of how I learned Docker. If you are here to learn a ton of Docker and are not interested in hearing this story, which does contain a few useful commands, then click into the 5 part series as that's probably more what you had in mind.

Still here? Great, story time.

This story aims to give you a high-level view of the most important concepts and the commands connected to them. I've written this as a set of stories I lived through myself, for my and hopefully your amusement, so you can see how ignorance slowly turned into knowledge. Some of you might not know an IT career that didn't include containers and Docker, but I remember those monolithic applications and so does Pepperidge Farm.

Furthermore, we cover that shaky first week or maybe even the first project in which you are happy to copy-paste docker commands. However, you really should consider learning it for real, especially if you want to write APIs or work with bigger solutions. Have a look at this series I wrote just for that:

5 part series on Docker but for now, let's pretend it's Friday afternoon so keep reading :)

Resources

I end up mentioning some concepts in this article that are worth digging into further:

- A quick overview to Docker in the Cloud. If you are reading this you are looking for a primer on Docker, but at some point you need to know how you can keep using it in a production environment. That production environment is likely to be the Cloud at some point.

- My 5 part series on Docker

Monday morning - tis but a script

Ok. So you arrive at your new project knowing absolutely nothing about Docker, and they tell you to install Docker. You head to the install page and, depending on what OS you have, you get different components installed.

At this point, you still don't understand what's going on but you have Docker installed.

To Mock or not to Mock, that is the question?

Your new colleagues have most likely given you a script, either a long one or a short one. It might look a bit alien but don't worry, I got you. The first time I got one of those scripts it looked something like this:

docker run -d -p 8000:3000 --name my-container --link mysql-db:mysql chrisnoring/node

To be fair it was even longer. But even the above command would have been enough to confuse me at this point.

I ran the script and lo and behold, everything worked. When I say worked, I mean it spun up a bunch of endpoints that my frontend application ended up speaking with.

Great I thought. That's what you use it for, to mock away endpoints?

At the time I was very frontend focused and in my universe I just wanted the backend people and myself to agree on a contract, a JSON schema that we could all say yes to, so I could work in peace. Of course, Docker is so much more than that but depending on what role you have in a team it may take you a while to realize its worth.

I've run it and something went wrong

Running that script means it will sooner or later break and when it spits out an error you need to know WHY it breaks. That's what happened to me and that was my introduction to some basic concepts and some very useful commands. Ok, so I don't remember if it was my computer falling asleep or if something just errored out that brought down one or more of my endpoints. It was down and it wouldn't respond. At that point, I was told by my more Docker savvy colleagues,

find out what containers are up and which ones are down. Just restart the failing container.

Of course, I was like a question mark. All I knew was how to copy paste a script in the terminal. At that point I was told by a colleague to just run this command:

docker ps

Then I saw a list of the containers that were currently running.

You can also run:

docker ps -a

That lists all containers, including the ones that have stopped or exited.

Then just type this to bring the container back up:

docker run -p 8000:3000 chrisnoring/node

I'm like uhu, copying commands to notepad. I suddenly knew how to list some containers and bring one up if it wasn't there. At that point, I was like, what's a container?

Ok so at that point I had learned:

- copy paste, I could just copy paste that longer command that brought everything up

- docker ps, shows all running containers

- docker ps -a, shows all containers, even exited ones

- docker run, brings up a container from an image, with a few extra arguments like the port mapping and the name of the image

It went on like that for the rest of the project. I mean I was front end focused, didn't feel like I needed Docker.

I don't understand Docker, can you teach me?

I managed to get through the above-mentioned project on just copy-pasting commands; yes, it can be done. After all, working purely on the frontend I didn't feel I needed to know more. That can be true in certain cases, but generally knowledge is power.

So years after that I ended up on a project that was all microservices, like everywhere. Docker was used heavily and as usual I started working on the frontend. Then I decided I wanted to write some backend, because that was Node.js and Express and I knew both those techs. At that point, it wasn't enough to just copy-paste some commands. I needed a number of endpoints to be up and running, and to contain real data as well, to mimic the real environment.

Suddenly it was more than just mocking endpoints, it was about creating an environment that was like the production environment.

First thing I said was how does this work, how do we spin up our services?

Just type

docker-compose up

and you'll be fine, was the response.

I was like eeh.. Docker, Docker Compose, same thing, different thing, where is that long script I'm used to, to get everything up and running?

Narrator: there was no long script.

I don't understand what this does. Why Docker Compose, why not Docker something something? I asked a colleague.

Let's start from the beginning my colleague said. Let's start with Docker from the beginning.

The basics

First thing you need to know is that there are things called images, containers and a Dockerfile. You build your images from a Dockerfile with the docker build command. The Dockerfile acts like a recipe for your image: what OS you need, what libraries to install, what variables to use and so on. At that point, he showed me a Dockerfile. Of course, I don't remember exactly how it looked but I'll show you something similar:

# Dockerfile

FROM node:latest

WORKDIR /app

COPY . .

ENV PORT=3000

RUN npm install

EXPOSE 3000

ENTRYPOINT ["node", "app.js"]

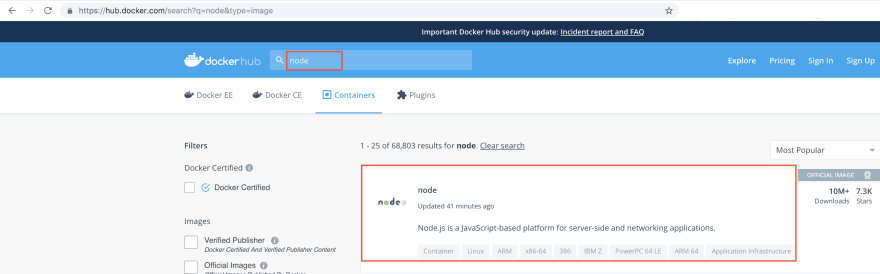

Here you can see from the top how we first choose a base image called node, with the FROM command. This is a Linux image (the official node image is Debian-based) with Node.js installed. It will be pulled down from Docker Hub, which is a central repository for images.

Next we set the working directory inside the image to /app with WORKDIR.

After that, we copy the files from where we are located into the image with COPY. Thereafter we set an environment variable with ENV.

Next up we invoke a command in the terminal with RUN, which in this case installs every library specified in the package.json file.

Next to last, we declare with EXPOSE that the app listens on port 3000. On its own this does not make the port reachable from the outside; that happens when the port is published with -p at docker run.

Lastly, we start up a program with ENTRYPOINT.

That was the Dockerfile, let's build our image. We do that with docker build, like so:

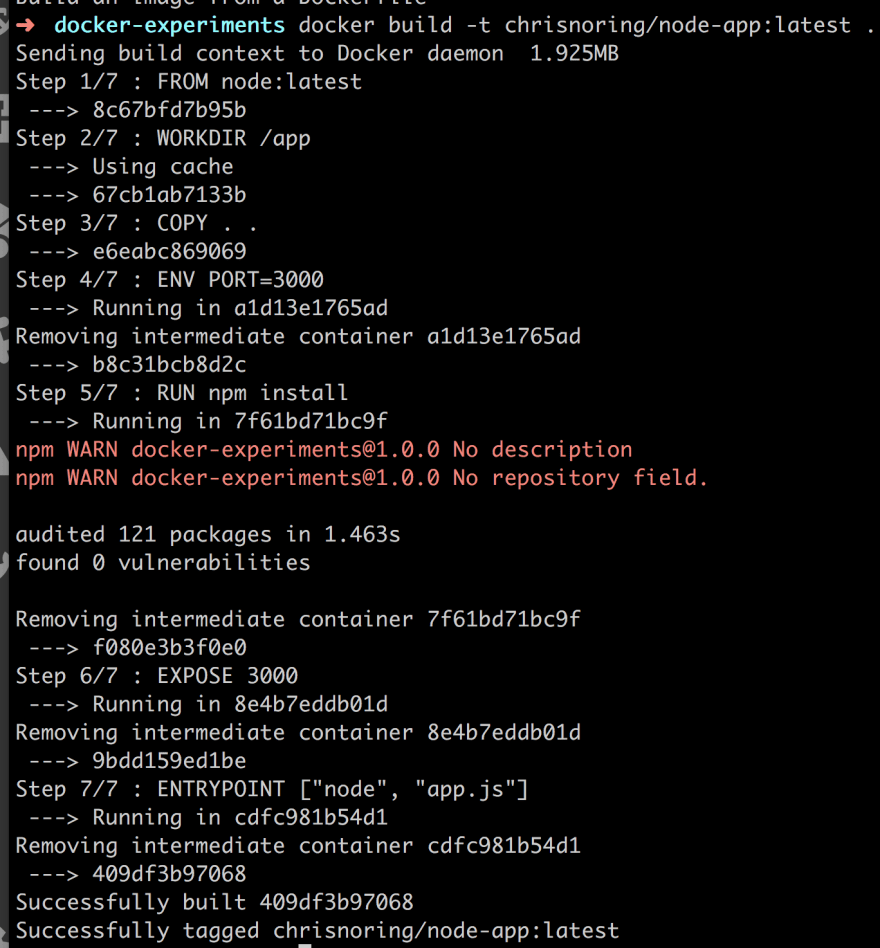

docker build -t chrisnoring/node-app:latest .

The above does two things. The -t tags the image with the name chrisnoring/node-app:latest, and the . at the end points out the directory where our Dockerfile is located. At this point, Docker reads through the Dockerfile, pulls down the base image and starts adding the result of each instruction as a layer on top of it. We call this a layered file system. We can see this in action in the build output.

Two things in the output point this out. One is Step 1/7: every instruction in the Dockerfile is executed as a step. The other is the line Removing intermediate container.... For each step, Docker creates a temporary container from the image produced by the previous step, runs the instruction in it and saves the result as a new layer. It then removes the temporary container when it's done with it. The final image is tagged with the name you give it in docker build.
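
Because every instruction becomes its own layer, the order of the instructions decides how much Docker can reuse from an earlier build. Here is a minimal sketch of the same Dockerfile, reordered so that the npm install layer can be reused when only the source code changes. It assumes package.json (and a lock file, if there is one) sits in the project root next to the Dockerfile; take it as an illustration, not as the one right way to write it:

```dockerfile
# Sketch only: same app as before, reordered for better layer reuse.
FROM node:latest

WORKDIR /app

# Copy only the dependency manifests first (assumed to be in the project root).
# This layer changes only when the dependencies change.
COPY package*.json ./

# Reused from cache as long as the manifests above are unchanged.
RUN npm install

# Copy the rest of the source. Edits here do not invalidate the layers above.
COPY . .

ENV PORT=3000

EXPOSE 3000

ENTRYPOINT ["node", "app.js"]
```

You build it with the same docker build command as before. The difference should only show on the second build and onwards, where the steps above the changed files are taken from cache.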

Run/Stop a container

Ok, now that we have an image we have something that contains all we need, the OS, env files, our app and so on. But we need to create a container from our image. We do that by calling:

docker run -p 8005:3000 chrisnoring/node-app

What the above does is to first create an opening between our side and the container with -p and the syntax looks like -p [our port]:[container port]. Then we say what image, that is chrisnoring/node-app.

This brings up a container that we can reach in the browser on port 8005, like so:

Ok, we learned about docker run, what about starting and stopping?

Well, if you already have a container that's up and running you can stop it with docker stop [container id]. The easiest way to find its container id is with docker ps. You don't need to enter the full container id, just enough of the first characters to make it unique, usually 3-4. This should bring down your container. At this point it still exists, so you can easily start it again with docker start [container id].
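
If you find yourself typing stop and start a lot, the two commands can be wrapped in a small shell function. The sketch below is only that, a sketch: bounce_container is a name I made up for illustration, it is not part of Docker, and it assumes the docker CLI is on your PATH.

```shell
# Hypothetical helper (not part of Docker): stop a container, then start it again.
# $1 is a container name or a unique ID prefix, as listed by `docker ps -a`.
bounce_container() {
  if [ -z "$1" ]; then
    echo "usage: bounce_container <container-id-or-name>" >&2
    return 1
  fi
  # Only start the container again if stopping it succeeded.
  docker stop "$1" && docker start "$1"
}
```

You would call it like bounce_container 3f2a, where 3f2a stands in for the first characters of your own container id. Docker also ships a docker restart command that does the same thing in one step; the function just makes the two separate steps visible.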

Docker Compose

Ok, so you taught me the basics of Docker with images, containers, and Dockerfiles. What about this Docker Compose?

Well, Docker Compose is a tool you can use when you have many containers. To use it you need to define a docker-compose.yml. It's where you specify all your services, how to build them, how they relate, what variables they need and so on. You still use Dockerfiles though for each service but Docker Compose is a way to perform things on a group of images and containers.
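
To make that a bit more concrete, here is roughly what a small docker-compose.yml could look like. This is an illustrative sketch and not the file from that project: the service names, the ./node-app folder and the password value are all assumptions I made up for the example.

```yaml
# docker-compose.yml (illustrative sketch; names, paths and values are assumptions)
version: "3"

services:
  node-app:
    build: ./node-app          # folder that holds this service's Dockerfile
    ports:
      - "8005:3000"            # [our port]:[container port], same idea as -p
    environment:
      - PORT=3000
    depends_on:
      - mysql-db               # start the database container before this one

  mysql-db:
    image: mysql               # pulled from Docker Hub, no Dockerfile needed
    environment:
      - MYSQL_ROOT_PASSWORD=example   # placeholder, pick your own
```

With a file like this in the current folder, docker-compose up builds what needs building and starts both containers, which is what replaced the long script I was used to.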

This conversation carried on over the course of many weeks and I learned a whole lot in the process... :)

Summary

As you can see, I was once at a place where I wasn't using Docker at all. Then I came to use it as just commands I was copy-pasting, and finally I saw the light and realized I needed it. That eventually led to this 5 part guide.

I hope the journey I told has been useful in teaching you what consequences not learning a technology properly can have, and that finally embracing it means you add a valuable tool to your toolset. I know many of you will follow, or are probably already following, in my footsteps to some extent. So please read my 5 part series and I promise you, you will see the light.

Top comments (20)


Thanks for the post. Just one small note.

Isn't it better to avoid using

as container will be rebuilt completely after any minor code change? Maybe it's better to use

?

hey Alexander.. you are right.. That's definitely an optimization you could do. Just wanted to show something working initially..

Microsoft just released VS Container Tools extension.

Not sure if it's for VS only, but it should work for VS Code as well.

This is just for Containers, so you still need to manage images.

It's currently in preview, so use caution and report any issues.

devblogs.microsoft.com/visualstudi...

Awesome article really good primer on docker.

Thank you. Appreciate your comment Max :)

This is a fun article ☺️

Nice one mate:)

Thanks for that. Glad you like it :)

This was very helpful, thank you :)

hi Lea. Glad to hear it :)

I've been wrestling with docker almost all day. This might help when I continue tomorrow.

hey.. There is a link to a 5 part series in there as well so be sure to check it out. Let me know if there is anything I can do :)

Finally I have time to get into this now. Somehow forgot to save it in my reading list, but found it again!

Awesome article, had this same experience a while ago, thanks for sharing it :)

I thought I was relatively alone in this experience. So thank you for writing that and I appreciate your comment Nilemar :)

I really enjoyed the storytelling format of this article! Fun to read, appreciate the primer on Docker.

Please compile it into a PDF document so it stays handy to refer. Thanks a lot.

Thanks, really helpful!

As usual, though, you don't need to know all those docker commands, because we have Portainer!