Let's say you have a project that connects to a third-party API which stores data about your customers. The API stores a customer's data only if that customer has consented to data storage for analytics purposes, so if a customer revokes that consent, you need to delete their data from the third-party API immediately. To delete the data, you call an endpoint on the API that queues an asynchronous delete process and returns an Operation ID. You can then call a second endpoint with the Operation ID as an argument to get the status of the delete operation (pending, complete, etc.). Customer consent to data analytics is stored in a field in DynamoDB. Your job is to react to a revocation of that consent by deleting all of the customer's data via the third-party API, verifying the deletion, keeping records of every step, and sending an e-mail alert if anything goes wrong along the way. How would you do this?

You're probably thinking...

"What services would I use?"

"What services allow for notifications, email sending, database storage, etc.?"

Let's analyze our situation part by part.

Your customer data is saved in DynamoDB, a NoSQL database, and a revocation of consent to data analytics shows up as a change to a field in that database. Immediately, you should be thinking of DynamoDB Streams, which capture item-level changes to a table and can deliver them to a Lambda function or another consumer. Let's do that.

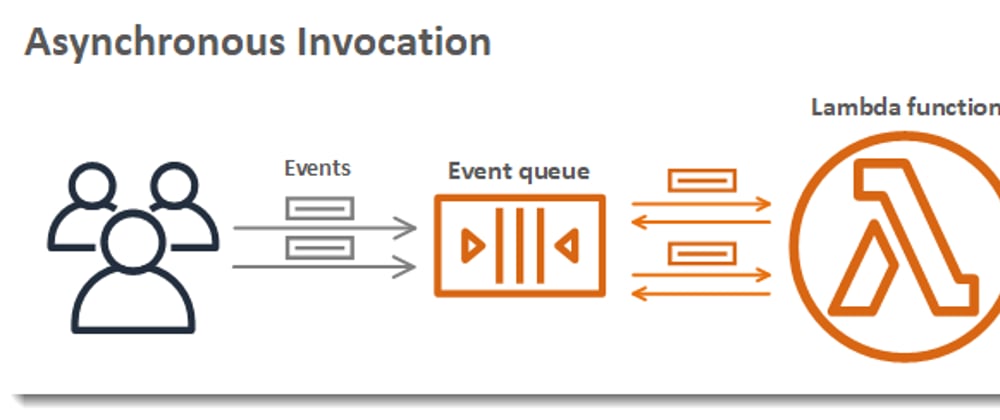
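As a minimal sketch, here is how the stream could be enabled and connected to a Lambda function with the AWS SDK for Python (boto3). The table and function names are hypothetical, the clients are passed in so the wiring can be exercised without an AWS account, and the function's execution role is assumed to already have permission to read from the stream.

```python
def enable_consent_stream(dynamodb_client, lambda_client, table_name, function_name):
    """Enable a stream on `table_name` and make it trigger `function_name`.

    Both clients are passed in (e.g. boto3.client("dynamodb") and
    boto3.client("lambda")); table and function names are hypothetical.
    """
    # NEW_AND_OLD_IMAGES puts the item before *and* after each change into
    # the stream record, so the consumer can compare the consent field.
    response = dynamodb_client.update_table(
        TableName=table_name,
        StreamSpecification={
            "StreamEnabled": True,
            "StreamViewType": "NEW_AND_OLD_IMAGES",
        },
    )
    stream_arn = response["TableDescription"]["LatestStreamArn"]

    # The event source mapping makes Lambda poll the stream and invoke the
    # function with batches of change records, starting from new changes.
    lambda_client.create_event_source_mapping(
        EventSourceArn=stream_arn,
        FunctionName=function_name,
        StartingPosition="LATEST",
    )
    return stream_arn
```

Usage would look like `enable_consent_stream(boto3.client("dynamodb"), boto3.client("lambda"), "Customers", "consent-revocation-handler")`.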

You can then send these changes to a Lambda function for processing. With the stream configured to include both the old and new version of each item, the function can see exactly what changed in your DynamoDB table, determine whether a customer has revoked data tracking, and act on it. Let's set up the DynamoDB stream as a trigger for this Lambda function.
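Here is a minimal sketch of the detection logic, assuming the customer table stores the ID in a string attribute called `customerId` and consent in a boolean attribute called `analyticsConsent` (both names are hypothetical). It relies on the stream's `NEW_AND_OLD_IMAGES` view type, so each `MODIFY` record carries the item before and after the change in DynamoDB's typed format (`{"S": ...}`, `{"BOOL": ...}`).

```python
from typing import Any, Dict, List, Optional

# Hypothetical attribute names in the customer table.
CONSENT_ATTR = "analyticsConsent"   # stored as a DynamoDB BOOL
CUSTOMER_ID_ATTR = "customerId"     # stored as a DynamoDB String


def _consent(image: Optional[Dict[str, Any]]) -> Optional[bool]:
    """Read the typed BOOL consent attribute from a stream image, if present."""
    if not image or CONSENT_ATTR not in image:
        return None
    return image[CONSENT_ATTR].get("BOOL")


def revoked_customer_ids(event: Dict[str, Any]) -> List[str]:
    """Return IDs of customers whose consent flipped from true to false."""
    revoked = []
    for record in event.get("Records", []):
        if record.get("eventName") != "MODIFY":
            continue  # inserts and removals are not consent revocations
        change = record["dynamodb"]
        old_consent = _consent(change.get("OldImage"))
        new_consent = _consent(change.get("NewImage"))
        if old_consent is True and new_consent is False:
            revoked.append(change["NewImage"][CUSTOMER_ID_ATTR]["S"])
    return revoked
```

Only a true-to-false transition counts as a revocation here, so re-saving a record that was already opted out does not trigger a second deletion request.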

Now you can write the Lambda function so that, whenever a stream record shows a customer revoking consent to data tracking, it calls the third-party API's asynchronous deletion endpoint with the customer ID from that record. The API responds with the Operation ID of the deletion now running in the background. Because the deletion happens asynchronously, you will not know right away when it has finished, so you need to save the returned Operation ID somewhere and check the operation's status later. Which data store should you choose?
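The call to the deletion endpoint might look like the sketch below. The URL, the use of HTTP `DELETE`, and the `operationId` response field are all assumptions standing in for whatever the real third-party API documents, and authentication is omitted. The `opener` parameter defaults to `urllib.request.urlopen` but can be swapped out for testing.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable

# Hypothetical endpoint; the real API's URL, auth and response shape will differ.
DELETE_URL = "https://api.example.com/v1/customers/{customer_id}/data"


def request_deletion(customer_id: str,
                     opener: Callable = urllib.request.urlopen,
                     timeout: float = 10.0) -> str:
    """Queue an asynchronous delete for one customer and return its Operation ID."""
    url = DELETE_URL.format(customer_id=urllib.parse.quote(customer_id, safe=""))
    req = urllib.request.Request(url, method="DELETE")
    # urlopen raises urllib.error.HTTPError for 4xx/5xx responses.
    with opener(req, timeout=timeout) as resp:
        body = json.loads(resp.read())
    # Fail loudly if the response no longer contains the field we rely on.
    operation_id = body.get("operationId")
    if not operation_id:
        raise ValueError(f"No operationId in delete response: {body!r}")
    return operation_id
```

Inside the stream-triggered Lambda function, each revoking customer's ID would be passed to `request_deletion`, and the returned Operation ID saved for later checking.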

Well, you have several options. The data storage options that come to mind are DynamoDB, RDS, S3, ElastiCache, Redshift, etc.

We are not doing data analytics here; we are merely saving a few structured fields, so Redshift, a data warehouse, does not make much sense. S3 is object storage built for files and blobs rather than rows we want to query and update, so it does not fit either. We do not need the speed of in-memory storage, so we will forgo ElastiCache for now. We could use DynamoDB, but since our data has a fixed, structured schema, a relational SQL database could take precedence over a NoSQL database in case we want to run queries on the data later on. Let's go with RDS.

Our Lambda function can connect to RDS and save the user ID, the Operation ID returned from the third-party API, and other helpful data, such as the current time, in a PendingDeletion table.
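Here is a minimal sketch of that table and the insert, using Python's built-in `sqlite3` as a stand-in for RDS so it runs anywhere. The column names are hypothetical; with an RDS engine such as PostgreSQL or MySQL you would use that engine's driver, whose placeholder style may be `%s` rather than `?`.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Optional

# Hypothetical schema; sqlite3 stands in for an RDS engine here.
PENDING_SCHEMA = """
CREATE TABLE IF NOT EXISTS PendingDeletion (
    operation_id    TEXT PRIMARY KEY,
    customer_id     TEXT NOT NULL,
    requested_at    TEXT NOT NULL,   -- ISO-8601 UTC timestamp
    last_checked_at TEXT,            -- updated by the polling function
    check_count     INTEGER NOT NULL DEFAULT 0
)
"""


def create_pending_table(conn: sqlite3.Connection) -> None:
    conn.execute(PENDING_SCHEMA)


def record_pending(conn: sqlite3.Connection, customer_id: str,
                   operation_id: str, now: Optional[datetime] = None) -> None:
    """Store a newly queued deletion so it can be checked later."""
    now = now or datetime.now(timezone.utc)
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO PendingDeletion (operation_id, customer_id, requested_at) "
            "VALUES (?, ?, ?)",
            (operation_id, customer_id, now.isoformat()),
        )
```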

We now have asynchronous operations running via our third-party API, and we have the Operation IDs saved in RDS. What now? We can now build an automated process that periodically scans all the Operation IDs in this table and calls the second third-party API endpoint to see whether each asynchronous delete operation has finished.

To do this, let's create another Lambda function that queries the PendingDeletion table and, for each entry, calls the second endpoint of the third-party API with that entry's Operation ID to get the status of the asynchronous operation. If the operation is still not complete, we update the time-based fields (such as when it was last checked) on that entry in PendingDeletion. If instead the delete operation has completed, we move the entry to the next step: a second table in our RDS database, CompletedDeletion, where the Lambda function saves the user ID and other relevant information.
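The polling pass could be sketched as follows, again using `sqlite3` as a stand-in for RDS and assuming a `PendingDeletion` table with the hypothetical columns `operation_id`, `customer_id`, `requested_at`, `last_checked_at`, and `check_count`. `get_status` is a placeholder for a wrapper around the third-party status endpoint that is assumed to return `"complete"` once the deletion is done.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Callable, Optional

# Hypothetical table for finished operations (sqlite3 syntax, standing in for RDS).
COMPLETED_SCHEMA = """
CREATE TABLE IF NOT EXISTS CompletedDeletion (
    operation_id TEXT PRIMARY KEY,
    customer_id  TEXT NOT NULL,
    requested_at TEXT NOT NULL,
    completed_at TEXT NOT NULL
)
"""


def check_pending(conn: sqlite3.Connection,
                  get_status: Callable[[str], str],
                  now: Optional[datetime] = None) -> int:
    """Check every pending operation once; return how many have completed."""
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    conn.execute(COMPLETED_SCHEMA)
    rows = conn.execute(
        "SELECT operation_id, customer_id, requested_at FROM PendingDeletion"
    ).fetchall()
    completed = 0
    for operation_id, customer_id, requested_at in rows:
        status = get_status(operation_id)
        with conn:  # each row is moved or updated in its own transaction
            if status == "complete":
                # Move the entry: insert into CompletedDeletion, drop from pending.
                conn.execute(
                    "INSERT INTO CompletedDeletion VALUES (?, ?, ?, ?)",
                    (operation_id, customer_id, requested_at, stamp),
                )
                conn.execute(
                    "DELETE FROM PendingDeletion WHERE operation_id = ?",
                    (operation_id,),
                )
                completed += 1
            else:
                # Still running (or an unexpected status): record that we checked.
                conn.execute(
                    "UPDATE PendingDeletion SET last_checked_at = ?, "
                    "check_count = check_count + 1 WHERE operation_id = ?",
                    (stamp, operation_id),
                )
    return completed
```

Committing per row means a failure partway through a run does not roll back rows already processed, and any status other than `"complete"` keeps the row pending so a stuck operation can surface later.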

So we have the operation down, and our next step is to run this Lambda function on a schedule. Thankfully, AWS makes this simple.

Let's create an SNS topic with this Lambda function attached as a subscriber. Whenever a message is published to the SNS topic, every subscriber (including our Lambda function) is invoked. SNS also fits well into a broader microservices architecture because publishers and subscribers stay decoupled, so we are on good ground here.
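Subscribing the function to the topic takes two calls, sketched here with boto3-style clients passed in as parameters: the function first needs a resource-based permission allowing `sns.amazonaws.com` to invoke it, and then the topic needs a `lambda` subscription pointing at the function. The statement ID and ARNs are hypothetical placeholders.

```python
def subscribe_lambda_to_topic(sns_client, lambda_client, topic_arn, function_arn):
    """Let SNS invoke the function, then subscribe it to the topic.

    Clients are boto3-style (boto3.client("sns"), boto3.client("lambda")).
    """
    # Resource-based policy: only this topic may invoke the function.
    lambda_client.add_permission(
        FunctionName=function_arn,
        StatementId="AllowPendingDeletionTopic",  # hypothetical statement ID
        Action="lambda:InvokeFunction",
        Principal="sns.amazonaws.com",
        SourceArn=topic_arn,
    )
    # Lambda subscriptions do not need a confirmation step.
    response = sns_client.subscribe(
        TopicArn=topic_arn,
        Protocol="lambda",
        Endpoint=function_arn,
    )
    return response["SubscriptionArn"]
```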

We still want our function to run on a schedule, so where do we set that up? AWS has a service for this too:

Amazon CloudWatch Events (now part of Amazon EventBridge). With this service, let's create a scheduled rule that publishes to the SNS topic we created earlier every hour. That means every subscriber to the topic is invoked once an hour, including our Lambda function that reviews the asynchronous delete operations stored in RDS.
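The schedule itself is a rule with the expression `rate(1 hour)` whose target is the SNS topic. Here is a minimal sketch with a boto3-style EventBridge client passed in; the rule name and target ID are hypothetical. The topic's access policy must also allow `events.amazonaws.com` to publish to it, which this sketch does not configure.

```python
def schedule_topic(events_client, rule_name, topic_arn, schedule="rate(1 hour)"):
    """Create (or update) an hourly rule that publishes to the SNS topic.

    `events_client` is boto3-style, e.g. boto3.client("events").
    """
    rule = events_client.put_rule(
        Name=rule_name,
        ScheduleExpression=schedule,
        State="ENABLED",
    )
    result = events_client.put_targets(
        Rule=rule_name,
        Targets=[{"Id": "pending-deletion-topic", "Arn": topic_arn}],  # hypothetical ID
    )
    # put_targets reports per-target failures instead of raising.
    if result.get("FailedEntryCount", 0):
        raise RuntimeError(f"Failed to add target: {result.get('FailedEntries')}")
    return rule["RuleArn"]
```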

You might have noticed something and asked, "Why would I even use SNS when CloudWatch Events can invoke Lambda directly?" Good question. This architecture is stronger because, down the road, if we ever want to invoke this function on demand, we have an easy avenue: publish a message to the SNS topic. We can also include a JSON payload in that message for subscribers to use, without adding unnecessary complexity to our architecture.
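Here is a sketch of how the SNS-triggered function could read such a payload. It assumes a hypothetical message format, `{"operationIds": [...]}`, for on-demand checks of specific operations. Scheduled messages forwarded by EventBridge contain the event itself (with `"source": "aws.events"`), and anything without specific IDs falls back to a full sweep of PendingDeletion.

```python
import json
from typing import Any, Dict, List, Optional


def requested_operation_ids(event: Dict[str, Any]) -> Optional[List[str]]:
    """Decide what an SNS-triggered run should check.

    Returns None for a full sweep of PendingDeletion, or the list of
    operation IDs a publisher asked for (hypothetical "operationIds" field).
    """
    targets: List[str] = []
    for record in event.get("Records", []):
        raw = record["Sns"]["Message"]
        try:
            message = json.loads(raw)
        except json.JSONDecodeError:
            return None  # plain-text message: do a full sweep
        # A scheduled EventBridge event (source "aws.events") means "sweep all".
        if not isinstance(message, dict) or message.get("source") == "aws.events":
            return None
        targets.extend(message.get("operationIds", []))
    return targets or None
```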

And there you have it. You now have an architecture that uses a third-party API to run asynchronous operations, and you also have an architecture in place that records if and when those operations actually complete.

However...

There is just one problem: we do not own the third-party API. Why is this significant? We have no control over if or when its developers change something that breaks our process. Because of this, we need to adopt a defensive design strategy. With this strategy, we can handle two potential issues:

1) What if an asynchronous delete operation never appears to complete?

2) What if the third-party API's schema changes without prior notice, or some other breaking change occurs outside of our control?

Part of our defensive design strategy is to prepare for this case beforehand so we do not get burnt later. Let's utilize the following solutions architecture, respectively:

1) If the Lambda function notices that a deletion has not completed within three days, it publishes a message to a new SNS topic. That topic then delivers an email, either through an email subscription on the topic or through a subscriber (such as a small Lambda function) that sends it with SES, containing the failing operation and the user's ID to a technical contact to investigate.

2) If the function fails because of a third-party schema change or another unexpected error, the error is logged in CloudWatch Logs and an email notification is also sent to selected technical contacts for further investigation and review, using the same SNS-based approach as above.
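Both safeguards could be sketched like this. The three-day threshold comes from the text; the row format, subject lines, and function names are hypothetical, and `sns_client` is any boto3-style SNS client publishing to the alert topic, whose email subscription (or SES-sending subscriber) turns the message into an email. Re-raising after alerting keeps the failure visible to Lambda, so the invocation is still recorded as an error.

```python
import logging
from datetime import datetime, timedelta, timezone
from typing import Callable, List, Tuple

logger = logging.getLogger(__name__)

# Threshold from the text: a deletion pending this long needs a human.
STUCK_AFTER = timedelta(days=3)


def alert(sns_client, topic_arn: str, subject: str, message: str) -> None:
    """Publish to the alert topic; its subscribers deliver the email."""
    sns_client.publish(TopicArn=topic_arn, Subject=subject, Message=message)


def find_stuck(rows: List[Tuple[str, str, str]],
               now: datetime) -> List[Tuple[str, str]]:
    """Return (operation_id, customer_id) for rows pending too long.

    Each row is (operation_id, customer_id, requested_at), where
    requested_at is an ISO-8601 timestamp with a UTC offset.
    """
    return [
        (op_id, cust_id)
        for op_id, cust_id, requested_at in rows
        if now - datetime.fromisoformat(requested_at) > STUCK_AFTER
    ]


def report_stuck(rows, now: datetime, sns_client, topic_arn: str):
    """Safeguard 1: email a technical contact about stuck deletions."""
    stuck = find_stuck(rows, now)
    if stuck:
        body = "\n".join(f"operation {op} for customer {cust}" for op, cust in stuck)
        alert(sns_client, topic_arn, f"{len(stuck)} deletion(s) pending over 3 days", body)
    return stuck


def run_with_alerts(job: Callable[[], None], sns_client, topic_arn: str) -> None:
    """Safeguard 2: on any failure, log it, send an alert, and re-raise."""
    try:
        job()
    except Exception as exc:
        # In Lambda, logged output (including this traceback) goes to CloudWatch Logs.
        logger.exception("Pending-deletion check failed")
        alert(sns_client, topic_arn, "Pending-deletion check failed", repr(exc))
        raise
```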

With these tactics in place, we have laid the foundation of a defensive design strategy that strengthens the security and reliability of our application and ultimately benefits our customers.

And that's it! If you have any comments or any other architectural ideas, let's talk about it!

Appendix:

We had a variety of options for building this architecture. The easiest, and most conventional, is to go serverless. Choosing a serverless architecture instead of writing an application and hosting it on EC2 or another server saves money, because code runs (and is billed) only when it is actually invoked. Lambda also scales automatically, which keeps performance good. I chose RDS over a NoSQL option such as DynamoDB because our data is structured, which lets us run relational queries on it now or in the future as needed. Routing issues to SNS topics, dead-letter queues (DLQs), etc. is good microservice design. Notifying technical contacts when an error arises (such as the third-party API not working correctly) is good defensive programming that makes our application more reliable. Whatever application you create and whatever clientele you serve, don't forget to put the customer first and foremost. They will not know (or even care) what your architecture is, but they will always appreciate a secure and reliable design.

The above architecture was built in AWS, but it would be just as easy to implement a solution in Google Cloud Platform using Cloud SQL, Cloud Functions, Pub/Sub, Cloud Scheduler, and an e-mail API such as SendGrid (though this would be a bit more complex). In Azure, the same solution could be implemented using Azure SQL Database, Azure Functions, Azure Service Bus or Event Grid for publish/subscribe messaging, Azure Monitor, and Azure Logic Apps.
