Amazon Web Services offers so many services that finding the right one often feels like looking for a needle in a haystack.

EC2 is one of the most popular offerings of AWS.

Elastic Compute Cloud (EC2) is the scalable compute capacity provided by AWS. It is your hardware in the cloud: available on demand and ready to be provisioned, with many options to choose from. Imagine being able to provision a large fleet of instances with plenty of computing capacity and no upfront payment.

EC2 mainly covers renting virtual machines, storing data on virtual drives using EBS, distributing load across machines using ELB, and scaling services using Auto Scaling groups.

Renting virtual machines:

Virtual machines are the heart of cloud computing if you ignore serverless for a moment. Nearly every application running on the cloud uses a virtual machine as its host.

AWS offers a variety of virtual machines to rent, differing in size, memory, compute capacity, and payment options. Instance sizes range from t2.nano at the small end to i3en.metal, across families that are general purpose, compute-optimized, memory-optimized, or storage-optimized.

With so many options, it is easy to get confused when choosing the right instance for a workload. On the other hand, because instances are categorized by the type of workload they handle best, the categories themselves narrow down the choice.

Pricing also varies, from on-demand instances with no upfront payment to reserved instances, so customers can decide whether they want to make a commitment. The pay-as-you-go model can save money if you have no idea what your workload will look like. Reserving instances or hosts for a period of time can save money for customers who know their workload and can commit in exchange for stability. There are more options as well, such as Spot Instances, which are very cheap but can be reclaimed when AWS needs the capacity back or when the Spot price rises above the maximum price you set.
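To make the on-demand versus reserved trade-off concrete, here is a minimal sketch in Python. The hourly rates are made-up numbers for illustration only, not real AWS prices, and the function name `cheaper_option` is hypothetical. It assumes a reservation is billed for every hour of the month whether the instance runs or not, while on-demand is billed only for the hours used.

```python
HOURS_IN_MONTH = 730.0  # common approximation of hours per month


def cheaper_option(on_demand_rate: float, reserved_rate: float,
                   hours_used: float) -> str:
    """Return which pricing model costs less for one month.

    Rates are hypothetical hourly prices, not real AWS prices.
    """
    # On-demand: pay only for the hours the instance actually runs.
    on_demand_cost = on_demand_rate * hours_used
    # Reserved (assumption): pay the discounted rate for every hour.
    reserved_cost = reserved_rate * HOURS_IN_MONTH
    return "on-demand" if on_demand_cost < reserved_cost else "reserved"


# Light, unpredictable usage favours pay-as-you-go.
print(cheaper_option(0.10, 0.06, hours_used=200))
# Near-constant usage favours a commitment.
print(cheaper_option(0.10, 0.06, hours_used=700))
```

With these assumed rates, the commitment only pays off once the instance runs for most of the month.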

Storing data:

Elastic Block Store (EBS) is a network drive for AWS. It acts as a raw disk for storage in EC2, like a detachable drive in the cloud. You can attach an EBS volume to an instance and start using it right away. If the instance fails, the data stored on the volume is preserved, and the volume can be detached and used somewhere else.

Because an EBS volume is an independent resource, it can be detached from one instance and attached to another. EBS volumes are locked to an Availability Zone and have a provisioned capacity. The four volume types covered here are:

- GP2 (SSD)

- IO1 (SSD)

- ST1 (HDD)

- SC1 (HDD)
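The attach, detach, and Availability Zone rules described above can be sketched as a small in-memory model. This is not the AWS API: the classes `Instance` and `Volume` and the functions `attach` and `detach` are hypothetical names used only to illustrate the constraints.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Instance:
    instance_id: str
    availability_zone: str


@dataclass
class Volume:
    volume_id: str
    availability_zone: str
    size_gib: int                      # provisioned capacity
    attached_to: Optional[str] = None  # instance ID, or None if detached


def attach(volume: Volume, instance: Instance) -> None:
    """Attach a volume, enforcing the same-AZ rule."""
    if volume.attached_to is not None:
        raise ValueError(f"{volume.volume_id} is already attached")
    if volume.availability_zone != instance.availability_zone:
        raise ValueError("volume and instance are in different Availability Zones")
    volume.attached_to = instance.instance_id


def detach(volume: Volume) -> None:
    """Detach a volume; in this model its data outlives the instance."""
    volume.attached_to = None


vol = Volume("vol-1", "us-east-1a", size_gib=100)
attach(vol, Instance("i-a", "us-east-1a"))
detach(vol)
attach(vol, Instance("i-b", "us-east-1a"))  # same AZ, so this works
```

Trying to attach `vol` to an instance in another zone, such as `us-east-1b`, raises a `ValueError` in this model.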

An instance store also provides temporary block storage for instances. It differs from EBS in that it is physically attached to the host machine, which comes with pros and cons.

Pros being:

- Because it is physically attached to the host, it offers better I/O performance.

- It can be used as a buffer or a cache.

- Data in the instance store persists across reboots.

Cons being:

- On stop or termination, the instance store is lost along with the data stored in it.

- You cannot resize the instance store.

- Backups are manual: there is no automated process for backing up data in the instance store.

In short, an instance store is a physical disk with very high IOPS that cannot be resized and carries a risk of data loss if the hardware fails.

EFS (Elastic File System)

Amazon EFS is a network file system managed by AWS. It provides scalable file storage that grows automatically as files are added, and it has several advantages over EBS and instance store. We can configure multiple instances to share a common file system. It also works across multiple Availability Zones, which makes it easy for instances in different Availability Zones to connect to the file system and work from the same data source.

EFS uses the NFS v4.1 protocol. It is highly available and scalable, but expensive: roughly three times the price of GP2 on EBS. We can use security groups to control access to EFS, and we can encrypt data at rest using the KMS service.

NOTE: EFS is only compatible with Linux-based AMIs, not Windows.

Distributing load:

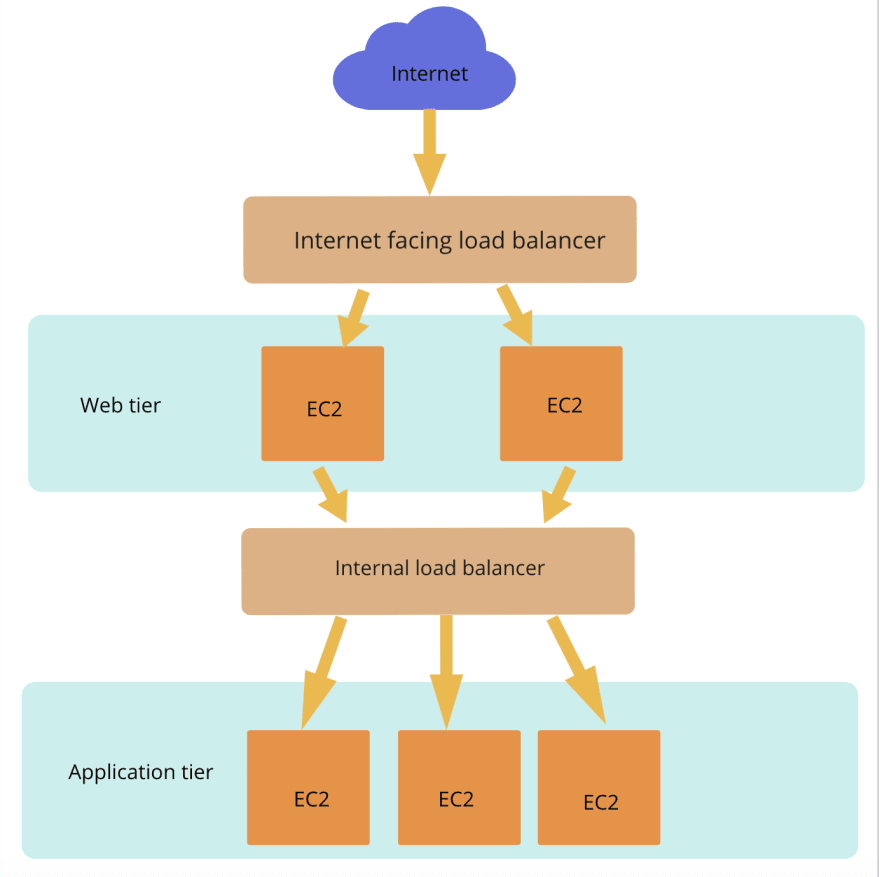

Elastic Load Balancing (ELB) distributes load between multiple instances in AWS. A load balancer accepts incoming requests and balances them across a fleet of instances, which helps keep our application's downtime to a minimum.

We can have multiple downstream instances sharing the load while exposing a single point of access (a DNS name). This also helps us handle failures: the load balancer regularly runs health checks against the instances, and if an instance fails, it stops sending traffic to that instance and an alarm can be triggered. It also provides SSL termination.

There are three types of load balancer in AWS:
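The idea of skipping unhealthy instances can be illustrated with a minimal sketch. It assumes simple round-robin routing, which is a simplification chosen for this example and not a description of how ELB is implemented; the class `RoundRobinBalancer` and its methods are hypothetical names.

```python
class RoundRobinBalancer:
    """Toy load balancer: round robin over targets that pass health checks."""

    def __init__(self, targets):
        self.targets = list(targets)
        self.healthy = set(self.targets)  # all targets start out healthy
        self._next = 0

    def record_health_check(self, target, passed):
        """Store the result of the latest health check for a target."""
        if passed:
            self.healthy.add(target)
        else:
            self.healthy.discard(target)

    def route(self):
        """Return the next healthy target, skipping failed ones."""
        for _ in range(len(self.targets)):
            target = self.targets[self._next]
            self._next = (self._next + 1) % len(self.targets)
            if target in self.healthy:
                return target
        raise RuntimeError("no healthy targets available")


lb = RoundRobinBalancer(["i-a", "i-b", "i-c"])
lb.record_health_check("i-b", passed=False)
print([lb.route() for _ in range(4)])  # i-b receives no traffic
```

Once `i-b` passes a health check again, calling `record_health_check("i-b", passed=True)` puts it back into rotation.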

- Classic load balancer (V1)

- Application load balancer (V2)

- Network load balancer (V2)

We can also set up an internal load balancer to balance traffic between our own instances. For example, with a web tier and an application tier, where the web tier sends requests to the application tier, an internal load balancer can spread the requests coming from the web tier across the application tier.

We can also set up stickiness so that requests from one user go to the same instance for a period of time, which helps keep session state consistent.
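Stickiness can be pictured as a lookup table from a user's session to an instance, with an expiry time. The sketch below is an illustration under that assumption, not the actual ELB mechanism; `StickyRouter` and the `session_id` and `now` parameters are hypothetical. Time is passed in explicitly so the behaviour is easy to follow.

```python
class StickyRouter:
    """Toy router that pins each session to one target for a fixed duration."""

    def __init__(self, targets, duration_seconds):
        self.targets = list(targets)
        self.duration = duration_seconds
        self._bindings = {}  # session_id -> (target, expires_at)
        self._next = 0

    def route(self, session_id, now):
        """Return the pinned target, or pick a new one if the pin expired."""
        binding = self._bindings.get(session_id)
        if binding is not None and now < binding[1]:
            return binding[0]
        # No valid binding: choose the next target in round-robin order.
        target = self.targets[self._next % len(self.targets)]
        self._next += 1
        self._bindings[session_id] = (target, now + self.duration)
        return target


router = StickyRouter(["i-a", "i-b", "i-c"], duration_seconds=60)
print(router.route("alice", now=0))   # first request picks a target
print(router.route("alice", now=30))  # same target while the pin is valid
```

In this sketch the pin is not refreshed by later requests, so a session is re-balanced once the original duration has passed.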

Scaling Services:

Selecting the perfect instance for a workload is one of the most difficult tasks for a solutions architect. Even with a range of options to choose from, we still can't predict the exact number of instances required to handle our workload. AWS Auto Scaling does a commendable job of adjusting the number of instances for us.

Imagine your server has more load on Wednesdays and less on Sundays. How would you commission servers to match? No worries: we just add our instances to an Auto Scaling group and define policies for how we want them to scale. If you have a predictable workload, you can set up a policy that increases the number of instances to 5 on Wednesdays. If you have an unpredictable workload, you can set up policies based on metrics such as CPU or memory usage. For example: if my instances are above 80% CPU utilization, increase the number of instances by one.

We can also set up a scale-in policy to make sure we don't keep over-provisioned instances that are of no use. For example, a policy can remove instances when CPU utilization drops below 20%. In this way, you can automate scaling instances in and out with no manual intervention. Auto Scaling ensures high availability and, when used along with a load balancer, helps us provide quality service to our customers.
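The two kinds of policy described above can be sketched in a few lines of Python. The 80% and 20% thresholds and the Wednesday capacity of 5 come from the examples in the text; the function names, the default capacity of 2, and the group size limits are assumptions made for illustration, and this is not how Auto Scaling is configured in practice.

```python
import datetime


def scheduled_capacity(day: datetime.date, default: int = 2) -> int:
    """Scheduled policy: run 5 instances on Wednesdays, a default otherwise."""
    # date.weekday() returns 0 for Monday, so Wednesday is 2.
    return 5 if day.weekday() == 2 else default


def desired_capacity(current: int, cpu_percent: float,
                     min_size: int = 1, max_size: int = 10) -> int:
    """Threshold policy: add one instance above 80% CPU, remove one below 20%."""
    if cpu_percent > 80.0:
        target = current + 1   # scale out
    elif cpu_percent < 20.0:
        target = current - 1   # scale in
    else:
        target = current       # within the comfortable range
    # Never leave the bounds configured for the group (assumed limits).
    return max(min_size, min(max_size, target))


print(scheduled_capacity(datetime.date(2024, 1, 3)))  # a Wednesday
print(desired_capacity(current=3, cpu_percent=85.0))  # scale out by one
print(desired_capacity(current=3, cpu_percent=10.0))  # scale in by one
```

Clamping to a minimum and maximum size keeps a noisy metric from scaling the group to zero or growing it without limit.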

Conclusion:

EC2 is one of the most popular offerings of AWS, and there is much more to discuss and learn about it.
