Load time and runtime are two distinct performance concerns for web applications.

Load time covers downloading resources, processing them, and rendering the DOM. Runtime performance is about how quickly the page responds afterwards, which largely depends on the number of costly DOM operations needed to update the UI.

Both play an equal role in optimizing web pages. In this article, we will focus on load-time optimization.

A fast web application is crucial. It should:

- Rank higher in Google search results (better SEO).

- Provide a better user experience.

- Load equally fast on all devices.

All of these can be achieved by paying attention to small details during development that many web developers overlook early on. Getting them right can help your page rank higher in Google search.

1. Set the Cache-Control Headers

The Cache-Control HTTP header field holds directives (instructions) — in both requests and responses — that control caching in browsers and shared caches.

It tells the browser how to cache resources so it can avoid repeated requests to the server. It doesn't reduce the first load time, but it can drastically reduce subsequent load times.

With a sensible webpack configuration (content-hashed file names), images, CSS, and even JS files can be given long expiration times. index.html, however, should have a much shorter expiration time, because it contains the script tags pointing to the current version of the application and must be refreshed to deliver newer versions.
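A minimal sketch of that policy as a server-side helper, assuming webpack emits content-hashed file names (for example via the `[contenthash]` placeholder in `output.filename`), so every changed file gets a new URL. The function name, the 8+ hex-character hash pattern, and the one-hour default for other files are illustrative assumptions, not a standard.

```typescript
// Hypothetical helper: pick a Cache-Control value from a request path.
// Assumes build output uses content hashes such as "main.3f2a9c1b.js".
const HASHED_ASSET = /\.[0-9a-f]{8,}\.(?:js|css|png|jpe?g|webp|svg|woff2)$/i;

function cacheControlFor(path: string): string {
  if (path === "/" || path.endsWith("/index.html")) {
    // The HTML entry point references the current bundle names,
    // so the browser must revalidate it with the server on every load.
    return "no-cache";
  }
  if (HASHED_ASSET.test(path)) {
    // A new build produces a new file name, so the old URL can be
    // cached for a year without risk of serving stale code.
    return "public, max-age=31536000, immutable";
  }
  // Anything else: a modest default (an assumption; tune per project).
  return "public, max-age=3600";
}
```

The split matters because `no-cache` does not forbid storing index.html; it only forces a revalidation, which is cheap when nothing changed.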

You can also add custom updater logic that periodically polls the server for a newer version and loads it once the user confirms. For long-lived apps such as web email clients, which users rarely reload, this is practically a must.
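A sketch of that updater logic, assuming the server exposes the latest build identifier somewhere (for example a hypothetical `/version.json`) and the app knows its own build identifier. Fetching and prompting are passed in as functions so the decision logic stays testable; the names and result values are illustrative.

```typescript
// Hypothetical version-check helper. How the latest version is fetched and
// how the user is asked are injected, so this logic has no browser dependency.
type FetchLatest = () => Promise<string>;
type AskUser = (latestVersion: string) => boolean;
type UpdateResult = "up-to-date" | "declined" | "reload";

async function checkForUpdate(
  currentVersion: string,
  fetchLatest: FetchLatest,
  askUser: AskUser,
): Promise<UpdateResult> {
  const latest = (await fetchLatest()).trim();
  if (latest === currentVersion) {
    return "up-to-date";
  }
  // Never reload silently: the user may have unsaved work (e.g. a draft email).
  return askUser(latest) ? "reload" : "declined";
}
```

In a browser you might run this every few minutes with `setInterval`, passing something like `() => fetch("/version.json").then((res) => res.text())` and a `window.confirm`-based prompt, and call `location.reload()` when the result is `"reload"`. The endpoint and interval are up to you.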

Learn more about how to set up cache headers.

2. Serve Everything from a CDN

A CDN! Yes, a CDN (Content Delivery Network) also helps with load-time optimization, because it serves assets from a location geographically close to the user, which means higher bandwidth and lower latency. CDNs are usually inexpensive.

CDNs can also proxy and cache API calls if required, and they usually respect Cache-Control headers, so you can set a different expiration time for each resource.
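One way to give a CDN a different lifetime than the browser is the standard `s-maxage` directive, which applies only to shared caches and overrides `max-age` there. The helper below is a hypothetical sketch; whether and how a given CDN honours `s-maxage` depends on the provider, so check its documentation.

```typescript
// Hypothetical helper: separate lifetimes for browsers and shared caches.
// max-age applies to the browser; s-maxage overrides it for shared caches
// such as a CDN, so the CDN can keep an API response longer than each browser.
function apiCacheControl(browserSeconds: number, cdnSeconds: number): string {
  for (const value of [browserSeconds, cdnSeconds]) {
    if (!Number.isInteger(value) || value < 0) {
      throw new RangeError(`Cache lifetime must be a non-negative integer, got ${value}`);
    }
  }
  return `public, max-age=${browserSeconds}, s-maxage=${cdnSeconds}`;
}
```

For example, `apiCacheControl(60, 600)` lets each browser reuse a response for a minute while the CDN serves it to everyone for ten minutes.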

For static assets, Netlify can be a good option. Major cloud providers offer their own CDNs too.

3. Leveraging Server-Side Rendering

With client-side rendering, the browser has to fetch, parse, and execute all of the JavaScript that generates the document users see, which takes time. Server-side rendering provides a much better user experience, because the browser fetches a complete document and displays it before loading, parsing, and executing your JavaScript. The client then doesn't slowly build the DOM from scratch; instead it **hydrates** the existing markup with state.

This relies on React hydration (available in Gatsby): the static HTML is generated with React DOM, and the content is then enhanced by client-side JavaScript through hydration.

It can significantly decrease FCP (First Contentful Paint) and LCP (Largest Contentful Paint), both of which are major factors in the overall PageSpeed score.

Server-side rendering on every request is still relatively slow and consumes server resources. Gatsby eliminates this problem by pre-rendering the whole website into static HTML pages at build time. However, that is not the best approach for highly dynamic websites and web apps that rely heavily on user input to render content.

Frameworks built around server-side rendering, such as Next.js, handle most of the complexity, so you can write the application rather than boilerplate code.

If you decide to go with an in-house solution, keep in mind that stream rendering is superior to writing one big string to the Node response stream: it saves **server memory** and lets the browser start parsing HTML as soon as possible, reducing rendering time even more. To achieve this in React, use **renderToNodeStream** instead of **renderToString**; note that React 18 deprecated renderToNodeStream in favor of **renderToPipeableStream**.
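To make the difference concrete, here is a toy comparison in plain TypeScript, not React's API: a generator produces the page in pieces, and a hypothetical `Sink` callback stands in for something like a Node response's `write()`. The buffered version mirrors the renderToString approach; the streamed version mirrors stream rendering.

```typescript
// Toy comparison of buffered vs. streamed HTML responses.
// "Sink" is a stand-in for a response writer; this is not React's API.
type Sink = (chunk: string) => void;

// Yields the page piece by piece (items are assumed to be pre-escaped HTML).
function* renderPage(items: string[]): Generator<string> {
  yield "<!DOCTYPE html><html><body><ul>";
  for (const item of items) {
    yield `<li>${item}</li>`;
  }
  yield "</ul></body></html>";
}

// Like renderToString: the whole document is assembled in memory
// before the first byte is sent.
function sendBuffered(items: string[], sink: Sink): void {
  sink(Array.from(renderPage(items)).join(""));
}

// Like stream rendering: each chunk is sent as soon as it is produced,
// so the browser can start parsing early and the server never has to
// build the full string.
function sendStreamed(items: string[], sink: Sink): void {
  for (const chunk of renderPage(items)) {
    sink(chunk);
  }
}
```

Both produce the same document; only the timing and peak memory differ, which is exactly the trade-off described above.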

4. Optimizing SVGs (Scalable Vector Graphics)

SVGs are widely used nowadays: they are scalable, look good at any size, and for icons and simple illustrations they are usually smaller than equivalent raster images (e.g., JPEG, PNG). However, some SVGs contain a lot of junk that isn't used for rendering but is still downloaded, increasing the load time.

Best practice is to render SVGs with img tags, but then you lose the ability to style or animate them from the page's CSS and JavaScript. If styling or animation is critical, you can inline the SVG directly.

Tools such as SVGOMG (a web interface for optimizing files by hand) and SVGO (a Node.js-based tool for optimizing SVG files) can strip out that junk.
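To show what kind of junk is involved, here is a deliberately naive sketch that removes a few common non-rendering parts with regular expressions. It is only an illustration: regexes are not an XML parser and this covers a tiny fraction of what SVGO does, so use SVGO or SVGOMG on real files.

```typescript
// Deliberately naive SVG "junk" stripper, for illustration only.
// Regexes are not an XML parser; use SVGO/SVGOMG on real files.
function stripSvgJunk(svg: string): string {
  return svg
    .replace(/<\?xml[\s\S]*?\?>/g, "")             // XML prolog (not needed for <img> or inline use)
    .replace(/<!--[\s\S]*?-->/g, "")                // comments, e.g. "Generator: ..." banners
    .replace(/<metadata[\s\S]*?<\/metadata>/g, "")  // editor metadata blocks
    .replace(/>\s+</g, "><")                        // whitespace-only gaps between tags
    .trim();
}
```

Note that collapsing whitespace between tags can change rendering inside `<text>` elements, one of many edge cases a real optimizer handles.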

5. Using WebP

WebP is a modern image format that provides superior lossless and lossy compression for images on the web. Using WebP, webmasters and web developers can create smaller, richer images that make the web faster. Lossy WebP images are typically 25-34% smaller than comparable JPEGs at the same quality.

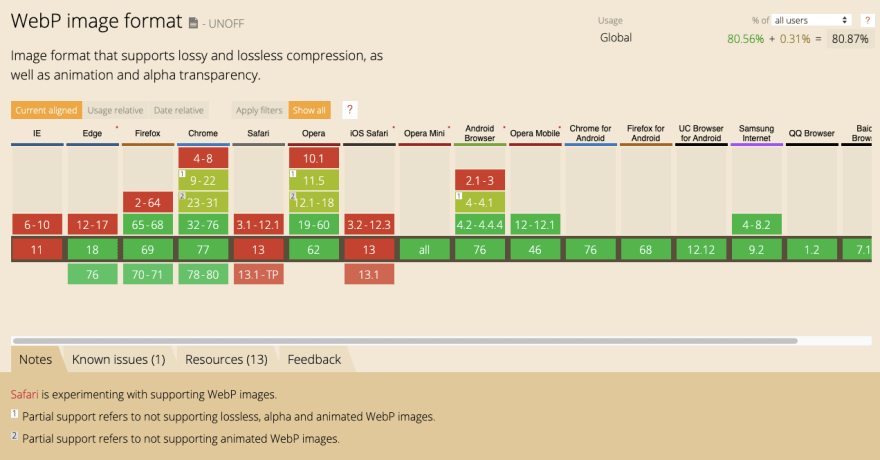

According to Can I use, all major modern browsers support WebP.

To convert images to WebP, you can use Squoosh.

6. Picture element over Img

Most websites nowadays feature responsive designs that change the layout and styles of the page but not the assets, so users on small mobile devices have to wait for huge photos intended for desktop screens to load.

One fix is to use the picture tag instead of a bare img tag. Inside picture, we can declare multiple source elements for different screen sizes and alternative image formats, and the browser uses the first source whose media query and format it supports.

The picture tag also contains an img tag, which acts as the fallback when the browser doesn't support picture.

No discussion of images is complete without lazy loading. Lazy-loading images saves bandwidth on page load, because they are only fetched when they are in or near the viewport. Modern browsers support this natively through the img tag's loading="lazy" attribute, and IntersectionObserver-based libraries cover more custom cases.
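The picture, WebP, and lazy-loading points above can be combined in one element. The hypothetical helper below renders such markup as a string (the file names and helper names are illustrative); it lists a WebP source for narrow screens first, because the browser takes the first matching source, and ends with the required img fallback.

```typescript
// Hypothetical helper that renders a <picture> element as an HTML string.
interface ImageSource {
  srcset: string;  // e.g. "photo-small.webp" (file names are illustrative)
  type: string;    // MIME type, e.g. "image/webp"
  media?: string;  // optional media query, e.g. "(max-width: 600px)"
}

// Minimal escaping for values placed inside double-quoted attributes.
function escapeAttr(value: string): string {
  return value.replace(/&/g, "&amp;").replace(/"/g, "&quot;");
}

function pictureMarkup(
  sources: ImageSource[],
  fallbackSrc: string,
  alt: string,
  lazy = true,
): string {
  // The browser uses the FIRST <source> whose media query and type it
  // supports, so list narrow/modern variants before broader ones.
  const sourceTags = sources.map((s) => {
    const media = s.media ? ` media="${escapeAttr(s.media)}"` : "";
    return `<source type="${escapeAttr(s.type)}"${media} srcset="${escapeAttr(s.srcset)}">`;
  });
  // The <img> is required: it is what actually renders, and it is the
  // fallback for browsers that ignore <picture>.
  const loading = lazy ? ' loading="lazy"' : "";
  const img = `<img src="${escapeAttr(fallbackSrc)}" alt="${escapeAttr(alt)}"${loading}>`;
  return ["<picture>", ...sourceTags, img, "</picture>"].join("\n");
}
```

Pass `lazy = false` for images visible on first paint, such as a hero image, since lazy-loading them can delay LCP.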

7. CSS Optimization and Avoidance of CSS in JS

Just like images, CSS affects the load time of your page. It should be minified and kept clean in your codebase. Tools such as PurifyCSS can remove unused CSS, but the best practice is to clean it up manually, as such tools don't always produce accurate results (for example, they can miss class names built dynamically in JavaScript).

CSS-in-JS gives developers a great experience because it keeps everything in one place. However, during server-side rendering, the generated styles are inlined into the HTML, so we get a colossal document and pay in performance to render it. And because the CSS is generated from the JavaScript bundles, it can't be cached as a separate stylesheet.

Instead, you can use a zero-runtime CSS-in-JS library such as Linaria, which lets you write CSS in JS, extracts it into CSS files during the build, and offers many of the features found in styled-components.

8. Code Splitting Practices

JavaScript bundles are a major contributor to page load time. By default, webpack puts all statically imported code into one bundle, meaning the browser downloads every module and heavy library up front, even those used by just one component.

Code splitting is the best way to fight this. You lazy-load parts of your code asynchronously and use them only once they're ready. React offers Suspense and React.lazy to help with that, but at the time of writing they only worked with client-side rendering; server-side support for Suspense arrived later with React 18's streaming renderer.

Hacks built around loading components with async import() or sync require() might work, but libraries such as react-loadable and Loadable Components work on both the client and the server. Imported Components is another excellent library that promises more features than the other two, works with Suspense, and uses React.lazy under the hood.
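Mechanically, lazy loading means starting a module download on first use and sharing the result with every later caller. The minimal helper below sketches that idea; it is roughly what React.lazy and Loadable Components do conceptually, not their implementation. In a real app the loader would be something like `() => import("./HeavyChart")` (a hypothetical path), and webpack emits a separate chunk for each dynamic import().

```typescript
// Minimal "load on first use" helper: roughly the idea behind React.lazy
// and Loadable Components, not their actual implementation.
function lazyModule<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) {
      // First call: start the download. Later calls reuse the same promise,
      // so the chunk is fetched only once.
      pending = loader().catch((error: unknown) => {
        // Forget the failed attempt so a later call can retry.
        pending = undefined;
        throw error;
      });
    }
    return pending;
  };
}
```

Caching the promise (rather than the resolved value) matters: two components that request the module at the same moment still trigger only one network request.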

9. Optimizing NPM Dependencies

By some estimates, as much as 97% of the code in modern web applications comes from npm modules, with only 3% written by the application's own developers. All of that code increases bundle size and is worth optimizing.

You can use the Import Cost VS Code extension to see the size of each import right in the editor, but for actual optimization work it's better to use the webpack-bundle-analyzer plugin. It generates an interactive treemap visualization of all bundles and their contents, showing which modules are inside and which take up the most space. Also note that modern bundlers support fairly sophisticated tree shaking, which finds unreachable code and removes it from the bundle automatically.

Many modules have alternatives that do pretty much the same thing with a smaller bundle size. Switching isn't always worth it, especially if the library is tightly coupled with the rest of the code. It's also worth removing duplicate libraries that do the same thing, such as using both Lodash and Ramda.

Large React applications often end up using React Select, which is fantastic but comes with a large bundle size for what is essentially a dropdown.

It's better practice to use libraries and components that do the same job with a smaller bundle size. For many popular libraries, there are plenty of lighter alternatives offering the same features.

10. Self-Hosting Fonts and Adding Link Headers

The Google Fonts API lets us use fonts in a project simply by importing them, like this:

```css
@import url("https://fonts.googleapis.com/css?family=font-name");
```

However, this approach has a few performance drawbacks. Why?

Consider how the browser loads a page: first it fetches the HTML, finds a link to the CSS file, and downloads it too. In that CSS it finds the @import pointing to another domain, so it has to establish a new connection, fetch the CSS for the font, and only then load the font files themselves.

When the fonts are self-hosted, the browser finds the @font-face rules directly in the application's CSS and only has to download the font files, using the same connection to your server or CDN. That's much quicker and eliminates an extra fetch.

Installing fonts as npm packages is even more convenient: Fontsource offers packages for many popular fonts available on the internet.

If you do end up using the Google Fonts API, you can still limit the speed penalty by adding a preconnect link:

```html
<link rel="preconnect" href="https://fonts.gstatic.com/" crossorigin>
```

This instructs modern browsers to connect to the specified domain immediately, before they find the font's @import, so the connection is ready for fetching assets sooner. The same hint can also be sent as a Link HTTP header instead of a link element. The browser sees the header as soon as the response arrives, before parsing any HTML, and it can even be used to prefetch or preload critical resources from your own domain, but it requires some control over the web server.
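As a sketch, a server could build that Link header value as shown below. The helper name, the `LinkHint` type, and the self-hosted font path `/fonts/inter-var.woff2` are hypothetical; the value format (`<url>; rel=...`, multiple hints separated by commas) is the standard Link header syntax. Font preloads need `crossorigin` even for same-origin files, because fonts are always fetched in CORS mode.

```typescript
// Hypothetical helper that builds a Link header value from resource hints.
interface LinkHint {
  href: string;
  rel: "preconnect" | "preload";
  as?: string;           // destination for preload, e.g. "font"
  type?: string;         // MIME type, e.g. "font/woff2"
  crossorigin?: boolean; // required for font preloads and font preconnects
}

function linkHeader(hints: LinkHint[]): string {
  return hints
    .map((h) => {
      const parts = [`<${h.href}>`, `rel=${h.rel}`];
      if (h.as) parts.push(`as=${h.as}`);
      if (h.type) parts.push(`type="${h.type}"`);
      if (h.crossorigin) parts.push("crossorigin");
      return parts.join("; ");
    })
    // Several hints can share one header, separated by commas.
    .join(", ");
}
```

The resulting string would be sent as the value of a `Link` response header on the HTML document.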

Conclusion

Optimizing a web application to reduce load time can be quite a challenging task!

Minor tweaks such as loading third-party scripts asynchronously, self-hosting fonts, and adding link headers are easy to handle. But critical decisions, such as server-side rendering, code splitting, and whether to use CSS-in-JS, should be made at the beginning of a project, because changing them later is tedious and risks many regressions.

Apply the measures listed above to optimize the load speed of your web application, and compare the results before and after using tools such as Google PageSpeed Insights and Experte PageSpeed. They provide a detailed breakdown of your application's load time.

That's all from my side on load-time optimization for React apps. Until next time, keep developing 😎

You can reach out to me on Twitter: Ankit Singh. Thanks for reading the article 🙌
