At Adyen, we use PostgreSQL as our preferred database and have been running (for many years) one of the largest implementations in the world. In this blogpost, our Database Architects detail how they have been able to mitigate one of its biggest technical challenges - write amplification.

Short introduction to PostgreSQL write amplification

Write amplification may be the biggest downfall of PostgreSQL. When you update a row, it has to update all the affected indexes. Uber made this famous in a blog post where they migrated off PostgreSQL because of it. We don’t want to add anything to the Uber discussion, but focus on how PostgreSQL tries to limit the disadvantageous write amplification effects.

PostgreSQL write amplification is caused by a combination of multiple design choices:

- All rows in PostgreSQL are immutable

- Indexes directly point to the heap page containing the data

- Multiversion concurrency control (MVCC) implementation details

Write amplification occurs when a row is updated and at least one of the indexed columns is part of the updated columns. In this case PostgreSQL needs to update all indexes to point to the latest version of the row. When we have a table with ten indexes this means PostgreSQL has to update the table and all 10 indexes on this table. This phenomenon is called write amplification.

The rest of this blog will explain in detail how updates are handled at block level and how PostgreSQL uses HOT updates to prevent write amplification.

Updates in general

Before diving into the cool stuff, let's take a look at the lifecycle of rows within PostgreSQL. What happens when we update a row in PostgreSQL? Assume we have a table with only one column and this column is the primary key. Next we update a row in this table and PostgreSQL performs the following steps:

- Since rows are immutable, a new version of this row has to be created

- All indexes on the table must be updated to reference the new version of the row …and after some time

- Removes the dead version of the row

- Marks the old line pointer as dead …and during vacuum

- Removes the dead references from all indexes

- Marks the old line pointer as unused

Single page cleanup

Before we can continue we need to explain one more thing: Single Page Cleanups. It might not be the best documented part of PostgreSQL, but it is an essential aspect of understanding the efficiency of HOT updates.

A single page cleanup occurs every time you write to a page (i.e. insert, update or delete). The single page cleanup does the same work the vacuum process does, but stays within the boundaries of the page. In our case this means old versions of rows can be removed and line pointers will be marked as dead or unused. Since the Free Space Map (FSM) and indexes are not within the page, they are excluded from the single page cleanup operation.

Also, Single Page Cleanups do not generate any additional Write Ahead Log (WAL) and so again are much cheaper/faster than the notoriously expensive PostgreSQL vacuum operation.

See video "Ordinary update": Video 1

HOT updates

Write amplification is a common problem in PostgreSQL but we can prevent this phenomenon through Heap Only Tuple updates or HOT updates in short.

The acronym describes what happens in the case of a HOT update: only the heap gets updated while none of the indexes is affected, hence no write amplification!

Let’s take a look at what happens at block level in the case of a HOT update. For this example we use a table with multiple columns, a primary key on the first column and no indexes on the other columns. We update columns of this table, but not the primary key column. The life cycle for updated rows in this process is a little different:

- Create a new version of the row, including a new line pointer

- Remove the old version of the row as part of vacuum or single page cleanup

- Redirect the original line pointer to the new line pointer

The final part, the redirection of the line pointer, is the crucial detail here. Since indexes point to the line pointer on a page and not the actual row data the index still points to a valid row. The only difference is that there is an additional redirection to one or more line pointers to finally find the correct version of the row.

See video "Hot update": Video 2

Imagine a table with 10 indexes on it. In the case of an ordinary update, PostgreSQL would write to at least 11 different pages: one page in the data heap and one page on every index. But in the case of a HOT update, PostgreSQL only writes to a single page. This saves 10 page writes.

In this example, we update columns without an index on it. When you think about it, this must make sense; one crucial part for HOT updates is that the index should not change. But when we update an indexed column, the index must change. We can no longer use the old line pointer, as it would point to a row with a different value than the index.

HOT update limitations

A HOT update is only possible when:

- No indexed columns are updated, including columns in partial indexes

- The new version of the row must be written to the same heap page as the original version of the row

Since HOT updates are so much more efficient than ordinary updates it goes without saying that we should prefer HOT updates over ordinary updates. Let’s then take a closer look at the two boundary conditions for HOT updates.

If we update columns which are part of an index, we lose the ability for a HOT update. For this reason we should consider leaving frequently updated columns out of the index whenever possible. One can also consider denormalization of data in certain cases or even consider changing application logic.

The second boundary condition we have to meet for HOT updates is that the new version of the row has to be on the same pages as the original one. We can stimulate this by changing the fillfactor of a table.

Fillfactor

According to the postgresql documentation the “fillfactor for a table is a percentage between 10 and 100. 100 (complete packing) is the default. When a smaller fillfactor is specified, INSERT operations pack table pages only to the indicated percentage; the remaining space on each page is reserved for updating rows on that page”.

The default fillfactor for tables is 100%; we write as much data to a table as we can. As soon as the latest page is full, PostgreSQL creates a new page and continues writing on the new page. For inserts and read operations this is obviously the most efficient way to store the data. But when a page is filled up to 100% of its capacity it is no longer possible to perform HOT updates.

100% fillfactor

Let’s assume we have a table with 100% filled pages and now we do an update. PostgreSQL will perform the following steps:

- Create a new page

- Write the new version of the row to this new page

- Update all indexes to point to the new version of the row …and later in time:

- Single page cleanup (or vacuum) can remove the old version of the row on the original page and mark the line pointer as dead

- The vacuum process has to remove all index entries pointing to the dead row

- Mark the dead line pointer as ‘unused’

See video "100% fillfactor" Video 3

From now on there is a vacancy on the first page and if we update another row on the first page we can do one HOT update; there is one free spot left behind by the previous update. This process makes HOT updates possible, though PostgreSQL originally writes pages up to 100% full.

By setting the fillfactor to a lower value we instruct PostgreSQL to stop inserting data into a page when the page is filled up to the given percentage. When this percentage has been reached the writing continues on a new page. The empty space is kept in reserve for further (hopefully HOT) updates.

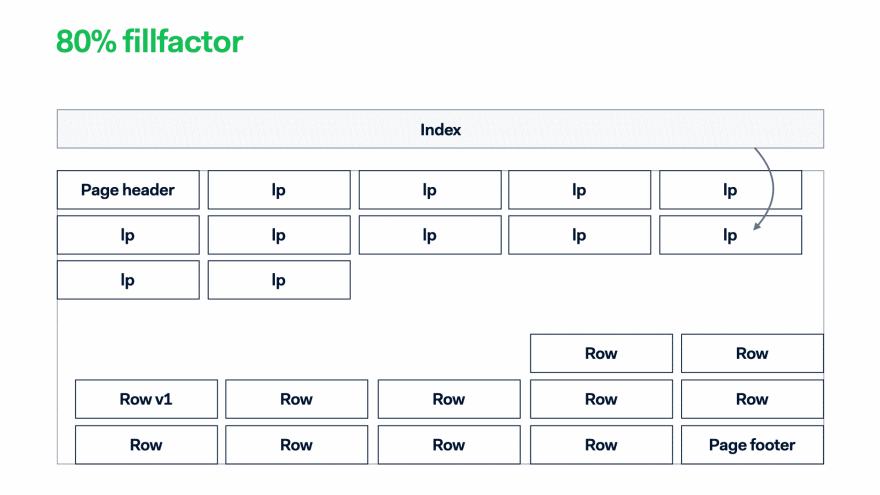

80% fillfactor

When we update a row on a partially filled page there might be enough free space to accommodate a HOT update. PostgreSQL will perform the following steps:

- Write the new version of the row to the same page

- Add redirection …and later in time:

- Remove old version of the row

- Add a line pointer redirection to the latest line pointer Mark the old line pointer as unused

See video "80% fillfactor" Video 4

Note that during a HOT update there is never a dead line pointer (dead row). This is important since dead line pointers cause less efficient index reads. We use the index to locate a row, but when we retrieve the data page containing the row the line pointer appears to be dead.

Savings by tuning the fillfactor

At the start of February (2022) we set the fillfactor to 85% for a newly created monthly partition for one of our tables. During the month we use this partition in some process and all work for the month happens in this partition. Most of the inserts happen at the start of the month and the amount of updates is roughly nine times the amount of inserts.

At the end of February we compared metrics between January, where we were using a partition with a 100% fillfactor, and February where the fillfactor was set to 85%. The workload in February is almost identical but slightly higher than in January.

The most important differences between the two months are listed in the table below.

Usually our WAL footprint grows every month, but in February we suddenly had a 10% decrease in WAL.

We don’t have (yet) the numbers to describe the improvements on the vacuum operations, but the following two pictures are self-explanatory. Before setting the fillfactor the number of dead rows regularly grows towards (and over) 60M while vacuum is dramatically reducing the number of dead rows at every run.

After setting the fillfactor to 85% the number of dead rows remains below 10M for a long period of time and the vacuum operation is removing much less dead rows per run. Something really interesting happens on February 6th, but that is a topic for a next blog.

Conclusions

HOT updates are an efficient way to prevent write amplification within PostgreSQL. HOT updates have their limitations and write amplification might remain a significant issue for workloads with high amounts of updates on indexed columns.

On the positive side, we can argue that lowering the fillfactor has a very positive effect when it can increase the percentage of HOT updates. When we can increase the proportion of HOT Updates we see several benefits.

A lower fillfactor can stimulate more HOT updates i.e. less write operations. Since we write less we also generate less WAL writes. In our case we saved 10% of overall WAL only by reducing the fillfactor on two tables. Another advantageous effect of HOT updates is that they also ease the maintenance tasks on the table. After performing a HOT update, the old and new versions of the row are on the same page. This makes the single page cleanup more efficient and the vacuum operation has less work to do.

HOT updates help to limit table bloat, which might sound contradictory at first glance; we are actually adding bloat to the table to start with. But this original bloat helps us to prevent future bloat. When we update this table with 15% original bloat, the updates are performed within the available empty space in the data pages while otherwise they would have been written to the end of the table, causing the table to grow and generating bloat in the middle of the table. Our table grew faster at the beginning of the month, but the total table size was almost 20% smaller at the end of the month.

Finally the HOT updates limit index bloat. Since HOT updates do not update the index at all, we don’t add any bloat in the indexes. When we combine this reduced index bloat with less table bloat and a smaller table size we also end up with increased read efficiency.

Look out for our next blogpost: we will highlight how we have been able to track HOT updates and tune fillfactor using Prometheus and Grafana.

References

Fillfactor and PostgreSQL dark corners (Internal talk at Adyen)

Top comments (0)