If you know dbt, then this post is to help you learn the core concepts of Terraform using the same mental model you use for dbt. You'll see where they're similar and how you can apply the paradigms you use in dbt to Terraform!

Why? Because making connections between what you're learning and something you already know is an effective way to wrap your head around that new topic.

If you know neither, some of the comparisons might seem confusing, but that's okay! You'll hopefully get an understanding of the patterns behind both that will make learning either one much easier.

Introduction

In the past five years, two tools have changed the game in their respective spaces: Terraform for cloud infrastructure and dbt for data transformations.

This post is to give you a basic understanding of what Terraform does and how multiple patterns we love about dbt also transfer to Terraform.

My personal goal is to get dbt users who don't know Terraform excited about it. I want you (if this is you) to get curious about how this can help you in your work and day-to-day life.

I'll have another blog post out soon about where Terraform fits within the current status of the data industry, but this isn't that post. Consider this the prologue. This is to get you excited and wanting to know how it will improve your lives.

Terraform

Terraform is a tool made by the brilliant minds at HashiCorp to solve problems in the new space of cloud infrastructure. It popularized the concept of infrastructure-as-code.

I remember when I started using AWS, I looked at my team and asked them:

Wait. You just... click the buttons and you get a computer running virtually for $50,000 a month? And everyone else can change that computer, too? And if you want to prevent other people from messing with your things, you need to figure out IAM policies and roles? And you're okay with all of this?

After multiple frustrations with bad inputs, typos, and copy+pasting values, I ran. Fast. Away from cloud infrastructure. It felt like a Swiss Army knife made of flamethrowers. To be precise, cloud infrastructure is any server, database, or other tool that cloud service providers (CSPs) allow you to create. Examples of CSPs are companies such as Amazon Web Services (AWS), Google Cloud Platform (GCP), or Azure (sorry, no acronym here).

Source: a historical image of me running away from CSPs

When Terraform came around, I became excited about infrastructure again. This was a way to manage infrastructure in text. The same, sweet version-controlled text we stored all of our code in on git.

Terraform uses plugins called providers and allows people to make resources. Resources are the objects that previously required multiple button clicks and forms to create, such as virtual machines or private networks. What used to take all those clicks and forms, I could now do in a few lines of Terraform and a terraform apply command, for example to spin up an AWS EC2 virtual machine.

The big magic of it all? Terraform knew if anything changed and would adjust accordingly. If anyone on the team wanted to change the name of something, or if I needed to add it to a new IP allowlist, the change would be committed to git and run by CI/CD after the pull request was merged. All stress, worry, and concerns were gone, so I could focus on just building cool stuff.

Terraform Providers for dbt Users

If you use dbt, you likely follow an ELT pattern, use a cloud data warehouse, and have a certain set of tools in your data stack. Conventionally, Terraform is used to manage cloud resources from CSPs.

However, Terraform is not restricted to cloud resources. It can also be used to programmatically configure and manage the tools you use daily. There are Terraform providers for plenty of services and tools:

You can configure Snowflake/BigQuery access, Fivetran connectors (and sync schedules!), GitHub repository access, and dbt Cloud jobs. All this is performed and managed in a code-based, CI/CD-run, and auditable way!

Similarities

The rest of this post goes into software engineering concepts that are consistent between both dbt and Terraform:

- Git (Version Control)

- Declarative

- Statefulness

- Separated Environments

- Managed Services + Cloud Versions

Git (Version Control)

Both tools are version controlled. If you used to write SQL, save it as stored procedures on the company's database, and find out weeks later that someone had changed it without telling you, you already know why this matters: version control keeps people happy, less stressed out, and free of animosity toward their teammates.

Just like how dbt made it easy to store SQL in git, Terraform does the same with configurations for infrastructure.

Because the changes are just text files that can be pushed to git, a whole new world of possibilities opens up. In pull requests, dbt and its ecosystem made it easy to run continuous integration (CI) on dbt to test the changes and the impact of the models being changed. Likewise, Terraform made it easy to run checks in CI to make sure that the proposed configurations made sense, were valid, and were cost-effective.

Declarative

This is less an aspect of dbt and more about SQL. For those unfamiliar, SQL is known as a declarative language. The tl;dr is that declarative languages are programming languages where you tell the program what you want the output to be and it will figure out the best way to get you that answer.

When you run a select query, the database finds the fastest way to get the data you asked for. Most end users do not need to care about what is in memory versus storage, how the data is partitioned, etc. The user just gets the result of the query.

Terraform is also declarative. You tell Terraform what you want to build, and it makes it for you. It will figure out what API endpoints to hit, what configurations to set, where it's deployed, etc. for you. As the end user, you don't have to deal with figuring out how it's done (most of the time). You hit enter and then reap the benefits.
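To make "you say what, the tool figures out how" a little more concrete, here is a toy sketch in Python. It is not how Terraform is implemented, and the resource names are made up for illustration. It only shows one part of the "how": given a declared set of resources and what each one depends on, a program can work out a valid build order by itself, much like dbt works out the run order of your models.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical desired state: each resource lists the resources it depends on,
# similar to how a dbt model names its parents.
desired = {
    "network": [],
    "firewall_rule": ["network"],
    "virtual_machine": ["network", "firewall_rule"],
}


def build_order(resources: dict[str, list[str]]) -> list[str]:
    """Return an order in which every resource comes after its dependencies."""
    return list(TopologicalSorter(resources).static_order())


order = build_order(desired)
print(order)
```

Notice that the declaration never says "build the network first". The ordering falls out of the dependencies, which is the same reason you never tell dbt which model to run first.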

Statefulness

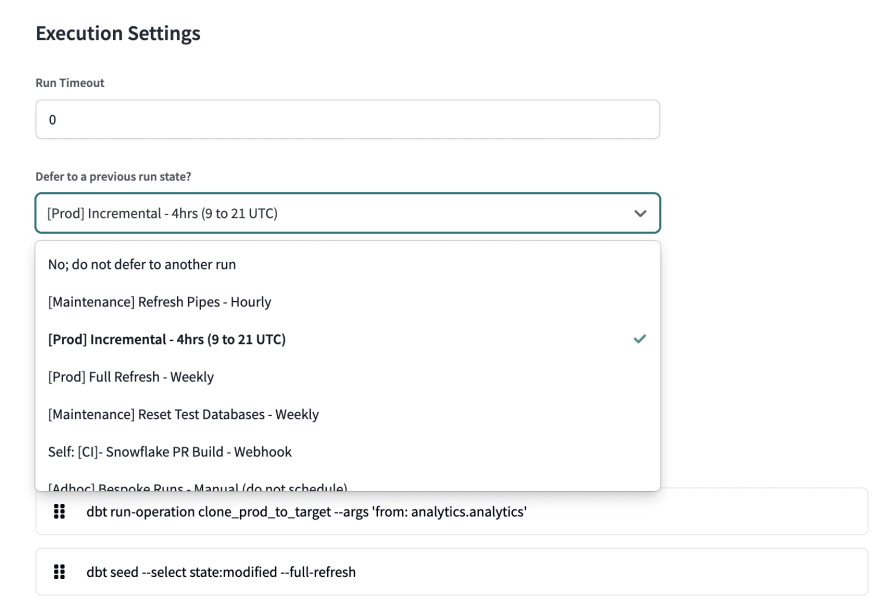

If you've ever meandered your way into the /target directory in your dbt project, you might have noticed a file called manifest.json. This file is a snapshot of the metadata that makes up your dbt project. When you use Slim CI, --defer, or the state:modified selection method, dbt uses an existing manifest.json to find the difference between what you've changed and what was in that previous manifest.json.

The most commonly used manifest.json comes from your most recent production build. By using this, you can run or test only your changed models, such as where you added a column or test.

Source: https://docs.getdbt.com/docs/deploy/cloud-ci-job

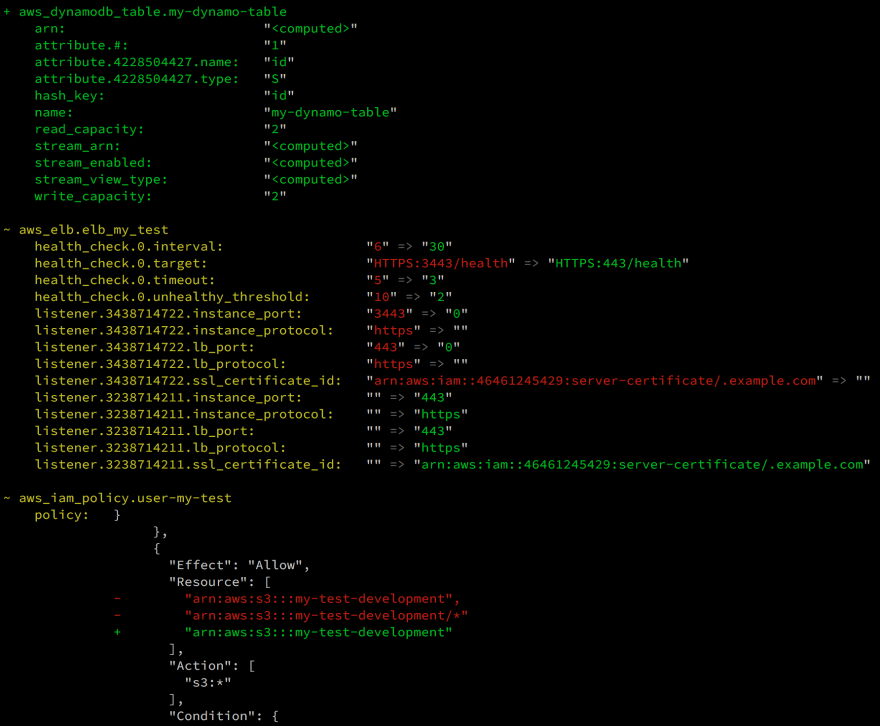

Terraform has a similar file called terraform.tfstate. This file is a representation of everything Terraform has built for you based on your Terraform files.

The true magic of Terraform is this terraform.tfstate. When Terraform runs, it compares your current Terraform code to what the terraform.tfstate file says exists. Afterward, it executes tasks (creating, updating, deleting) for only the resources that either were changed or didn't exist. For example, if we were to change a property (e.g., name), Terraform would update the resource without replacing it, if possible. However, if we didn't change anything, Terraform would do nothing because there are no changes to make. If we deleted the code, Terraform would tear down and destroy the respective resources made from that code. It will also tell you if any other resources are dependent on the resource you're deleting (similar to dbt's ref macro!).

Source: https://stackoverflow.com/questions/43532785/can-terraform-plan-show-me-a-json-diff-for-a-changed-resource

This magic is called statefulness. Statefulness is the property in which a program knows what it is (or what it used to be) and can act on that knowledge. In the case of dbt, it is only running what changed. In the case of Terraform, it is only creating, updating, or deleting the resources whose code changed, and leaving everything else alone.
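Here is a toy model of that comparison in Python. It is a sketch for intuition only: the function name plan, the resource names, and the dictionaries standing in for your Terraform files and terraform.tfstate are all hypothetical, and real Terraform does considerably more (for instance, checking the actual infrastructure and deciding whether a change can be made in place).

```python
def plan(desired: dict[str, dict], state: dict[str, dict]) -> dict[str, str]:
    """Compare declared resources against recorded state and label each one."""
    actions = {}
    for name, config in desired.items():
        if name not in state:
            actions[name] = "create"   # declared, but never built
        elif state[name] != config:
            actions[name] = "update"   # built, but the declaration changed
        else:
            actions[name] = "no-op"    # built and unchanged
    for name in state:
        if name not in desired:
            actions[name] = "delete"   # built, but no longer declared
    return actions


# Hypothetical recorded state (standing in for terraform.tfstate).
state = {
    "vm": {"name": "analytics-vm", "size": "small"},
    "bucket": {"name": "raw-data"},
    "old_queue": {"name": "legacy"},
}

# Hypothetical desired configuration (standing in for your Terraform files).
desired = {
    "vm": {"name": "analytics-vm-v2", "size": "small"},  # renamed
    "bucket": {"name": "raw-data"},                      # unchanged
    "dashboard_db": {"name": "metrics"},                 # new
}

changes = plan(desired, state)
for name, action in changes.items():
    print(f"{name}: {action}")
```

Only the renamed, new, and removed resources get any work done; the unchanged bucket is skipped. That is the same idea as state:modified in dbt picking out only the models you touched.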

Quick Bit: Similar Commands

dbt compile makes a manifest.json without running your dbt project.

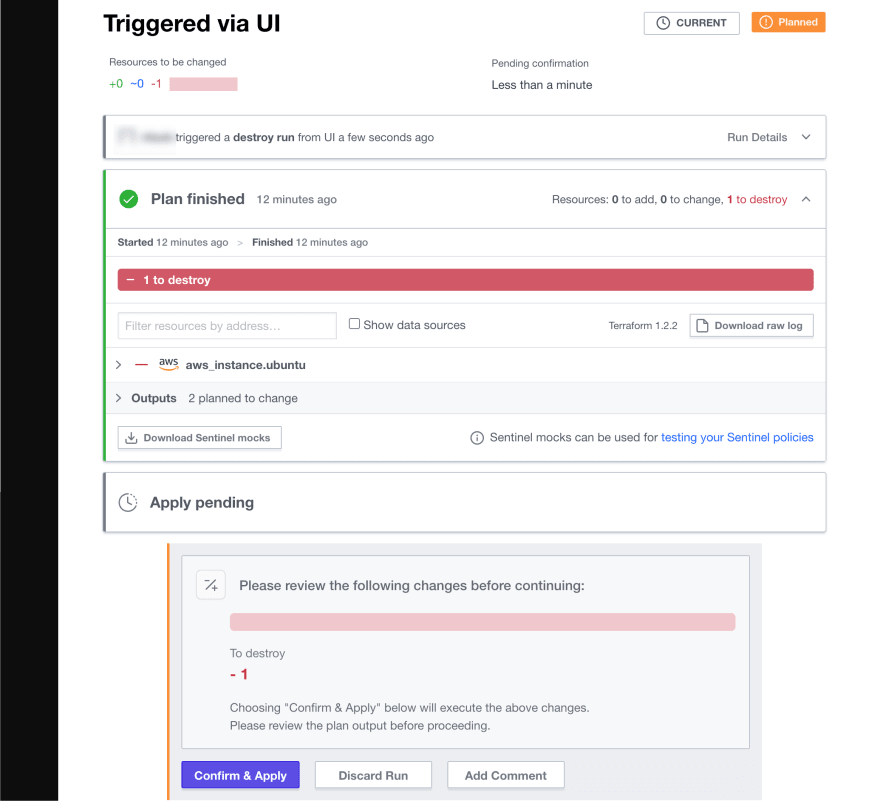

terraform plan lists the proposed changes that your Terraform project would cause, without making them. With the -out option, it can also save that plan to a file.

dbt run makes the manifest.json file and creates the dbt models.

terraform apply builds a plan, tells you what changes it would perform, and, once you approve, executes them and updates terraform.tfstate.

Separated Environments

One of the software engineering conventions that dbt made accessible to people working with SQL was the concept of a development environment. A development environment is a self-contained place where individuals can iterate, tinker, and do their work. This means that people can work without potentially breaking the production environment used by their stakeholders. Everyone has their own environment and can work on their tasks in parallel without stepping on each other's toes.

Terraform can also use development environments to provision resources before building in production. Development and staging environments are significant in the Terraform workflow because they are a low-risk opportunity to spin up resources and test whether the functionality works as intended.

Managed Services + Cloud Versions

In today's day and age, everything has a managed service or cloud-hosted option. Terraform and dbt are no exceptions.

For the dbt users, do you feel excited about Terraform? How about the Terraform users? A powerful part of both tools is that taking these frameworks to production is really easy through their cloud offerings. You can sign up for Terraform Cloud or dbt Cloud to make the core version of the products even more feature-rich and easier to use.

In dbt Cloud, you can run dbt on schedules and set up continuous integration (CI) quickly with the press of a few buttons.

Source: https://docs.getdbt.com/docs/deploy/cloud-ci-job

In Terraform Cloud, you are provided an easy way to manage the terraform.tfstate file, format and test your Terraform changes in CI, and run Terraform as needed.

The best part? The most basic tiers for both services are free. You can sign up for either of them right now and start driving value and robustness in your organization today.

Conclusion / Call to Action

Excitement. I wrote about this feeling at the top of this post. I hope you feel it now. If you previously felt fear over how overwhelming Terraform could be, I hope this calmed that fear and replaced it with excitement. I want this to be the beginning of you being as comfortable with Terraform as you are with dbt.

If you want to get started with Terraform today and aren't familiar with CSPs, a fantastic starting point is trying to use Terraform to manage SaaSes and cloud-hosted services. In the "Terraform Providers for dbt Users" section, I mentioned that many of the tools you use have Terraform providers.

I believe that the best way to learn Terraform is in the context of something you already know. That was the inspiration for this post: to help you understand the concepts of Terraform using the same patterns and concepts you know from dbt.

The next step is to apply those concepts. You can make your next Snowpipe with Terraform. Manage your new Fivetran connector. Make a new GitHub repository. Order yourself a pizza.

Keep an eye out for my next post about why these skills you're applying are vital for moving the data industry forward.
