I am currently a Geek Squad Repair Agent. My job is to repair computers that the general public checks in with us for a wide variety of issues. Until the pandemic, that is. Once COVID-19 hit Kansas City, Geek Squad shut down for a couple of months. With no repairs to work on, I wanted to focus on something I was already pursuing in my free time: AWS certifications and experience. I was referred to the Cloud Resume Challenge by Chris Nagy (an extremely helpful guy) on /r/AWSCertifications.

The steps of the resume challenge were as follows:

- Obtain at least the AWS Cloud Practitioner certification

- Write a resume in HTML

- Style that resume with CSS

- Host that resume as a static website on Amazon S3

- Route all website traffic through HTTPS

- Point a custom DNS name to the website

- Use JavaScript to get a visitor counter on the site

- Store that visitor counter value in a database on AWS

- Use an API to communicate with the database from the site

- Use Python to help communication between the database and API

- Include tests in the Python code

- Deploy the back-end of the website using Infrastructure-As-Code

- Use Source Control

- Set up a CI/CD pipeline for the back-end

- Set up a CI/CD pipeline for the front-end

At this point, I was already building my own resume website, and thought this challenge could be an easy "side quest" on my overall journey. Spoilers: It was the end-game boss.

Fortunately, I already had the fundamentals out of the way. I had just passed the AWS Solutions Architect - Associate and the Cloud Practitioner. I had also already built a basic resume website I was hosting as a static site on an S3 bucket under a custom domain name. That was five steps down right off the bat. I was ready to turn in my clip-on tie.

Then came the uncharted territory. The first step wasn't too complex; I simply set up a CloudFront distribution that redirected all HTTP traffic to HTTPS. After a couple of hours of reading my dad's JavaScript books, I had a basic visitor counter working.

Next up was the front-end pipeline and source control. I had used source control before in some personal Unreal Engine projects, so that step was easy. After a bit of research on GitHub Actions, I wrote a workflow that automatically syncs my GitHub repository to my live S3 bucket and invalidates my CloudFront cache.

Then came the real learning curve of the challenge: setting up a serverless back-end. I had to learn SAM, which meant learning CloudFormation, which meant learning the basics of YAML formatting, as well as Python and how to write tests in Python. I decided to get a functioning Lambda script and DynamoDB table working first, and set up a SAM template afterward. Building everything twice may sound redundant, but deploying the resources manually first really helped me understand how they talk to each other.

Once I had an API talking to a Lambda script talking to a DynamoDB table, and vice versa, I was ready to set up SAM. SAM is an incredibly helpful tool, and it saves a huge amount of time and thought when provisioning resources. Chris Nagy has a fantastic blog on getting started with SAM you can read here.
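To give a feel for the Lambda piece of that chain, here is a minimal sketch of a visitor-counter handler. It is not the exact code from my project: the `TABLE_NAME` environment variable, the `id` key, the `count` attribute, and the optional `table` parameter are all assumptions made for illustration.

```python
import json
import os


def increment_visits(table, counter_id="visits"):
    """Atomically add 1 to the counter item and return the new value.

    `table` is expected to behave like a boto3 DynamoDB Table resource.
    The key name "id" and attribute name "count" are assumptions.
    """
    response = table.update_item(
        Key={"id": counter_id},
        # "count" is a DynamoDB reserved word, so it is aliased as #count.
        UpdateExpression="ADD #count :one",
        ExpressionAttributeNames={"#count": "count"},
        ExpressionAttributeValues={":one": 1},
        ReturnValues="UPDATED_NEW",
    )
    # DynamoDB numbers come back as Decimal; convert for JSON encoding.
    return int(response["Attributes"]["count"])


def lambda_handler(event, context, table=None):
    """Entry point for API Gateway: bump the counter and return it as JSON."""
    if table is None:
        # Imported here so the module can be loaded (and tested with a
        # stand-in table) on a machine without boto3 installed.
        import boto3

        table = boto3.resource("dynamodb").Table(os.environ["TABLE_NAME"])

    count = increment_visits(table)
    return {
        "statusCode": 200,
        # Lets the browser-side JavaScript on another domain read the reply.
        "headers": {"Access-Control-Allow-Origin": "*"},
        "body": json.dumps({"count": count}),
    }
```

Passing the table in as a parameter is a design choice for testing: a test can hand the handler a fake or mocked table instead of a real DynamoDB one.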

Once I had a functioning SAM template, Python testing was up next. I found the concept somewhat confusing at first, but after discovering the moto library for testing Python code that uses AWS, its benefits became clear. Moto lets you stand up mock AWS resources, which avoids the time and cost of provisioning real ones.

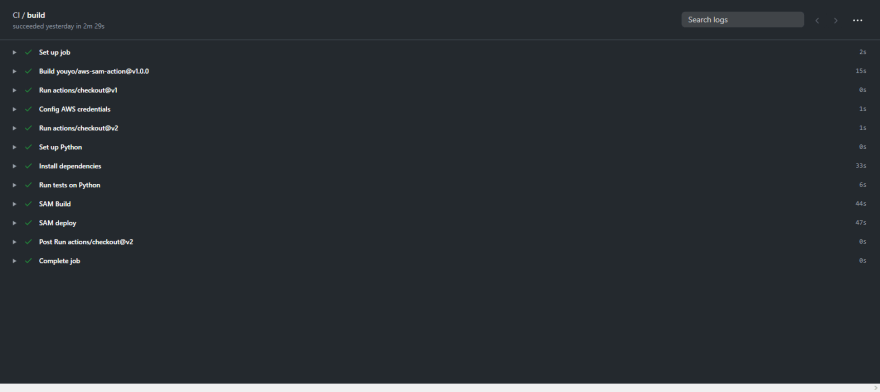

I built the SAM deployment and moto tests into my back-end CI/CD pipeline. Any time I push a change to my back-end scripts, they get tested and are deployed live if the tests pass. Designing a GitHub Actions workflow was fairly straightforward, thanks to the tools from the GitHub community. Those green check marks are quite possibly the most rewarding things I have ever seen.

All in all, the resume challenge was much more of a challenge than I originally anticipated, and I loved it. Thanks so much to Forrest; this challenge instilled more confidence and passion for AWS in me than anything else has. The final product can be found here, and a link to the challenge here.
