In the first post — AWS: Web Application Firewall overview, configuration, and its monitoring — we spoke about its main components, created a WebACL and Rules for it, and did basic monitoring.

We also configured WebACL log collection with AWS Kinesis, and now it’s time to see those logs in Logz.io, as CloudWatch Logs isn’t available for WAF yet.

So, in this post, we will send logs to an AWS S3 bucket via AWS Kinesis, configure Logz.io to grab those logs from S3, and then look at the log contents and how they can be used for debugging.

Contents

- AWS WAF logs

- AWS Kinesis

- WAF ACL logging

- Logz.io S3 Bucket

- Logz.io S3 configuration

- IAM policy

- IAM role

- WAF logs — fields

- Redacted fields — excluding data from logs

- Logz.io alerting

AWS WAF logs

Besides CloudWatch metrics, we can enable logging for all requests that pass through our WebACL.

AWS WAF uses AWS Kinesis to send data and AWS S3 to store logs.

Unfortunately, WAF and Kinesis cannot use CloudWatch Logs (at least at the time of writing; AWS Tech Support told me that support for them is planned).

So, let’s do the following:

- configure a Kinesis Firehose delivery stream

- configure a WebACL to send data to that stream

- the Kinesis stream will send data to an AWS S3 bucket

- and Logz.io will use its connector to get logs from this S3 bucket

AWS Kinesis

Note: One AWS WAF log is equivalent to one Kinesis Data Firehose record. If you typically receive 10,000 requests per second, set a 10,000 record per second limit in Kinesis Data Firehose to enable full logging.

Go to AWS Kinesis and create a new Data Firehose delivery stream. Remember that its name must start with the ‘aws-waf-logs-’ prefix.

Choose Direct PUT or other sources and click Next:

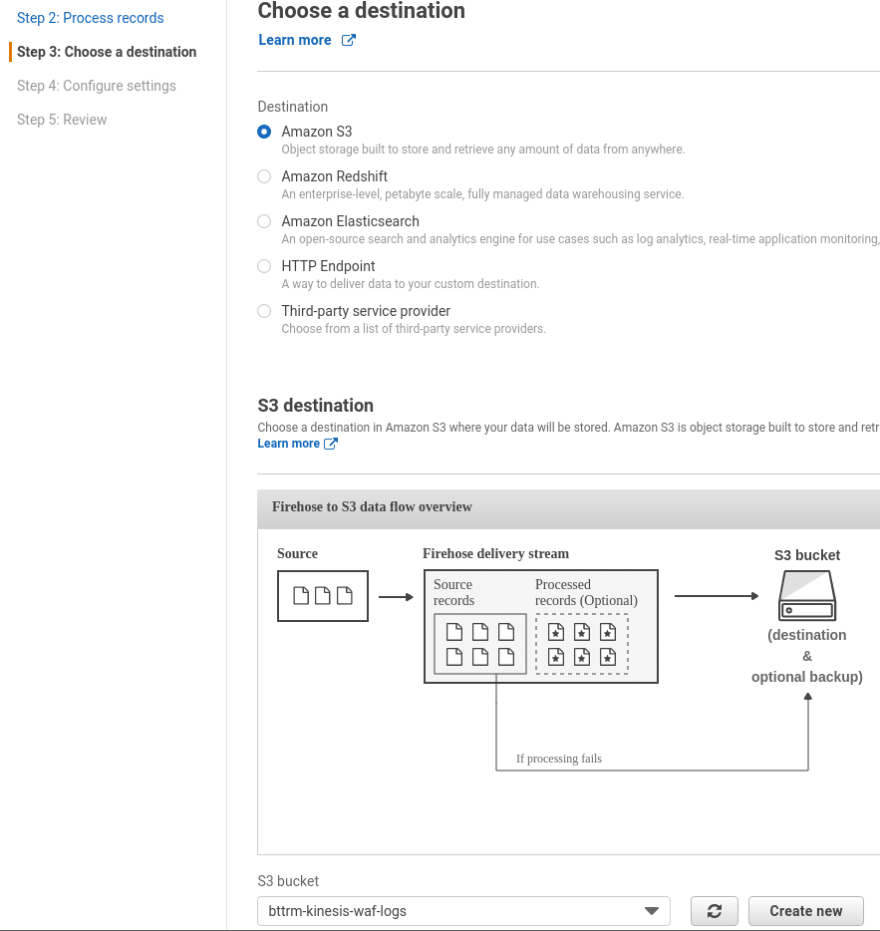

On the next page, skip data transformation and click Next. Then select S3 as the Destination and create a new S3 bucket:

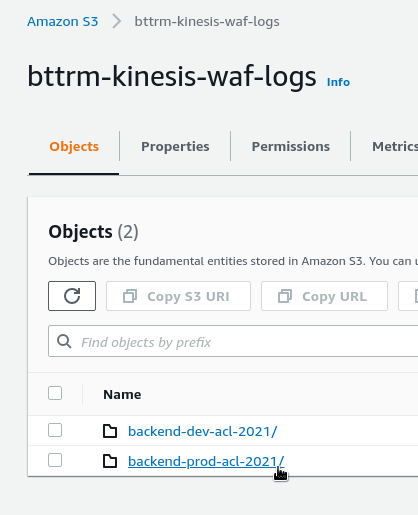

Here, I’m using an existing bucket. To avoid mixing logs from various ACLs, add a prefix:

On the next page, leave all the default values. If anything goes wrong, Kinesis will send a message via CloudWatch:

To receive those messages, leave the Error logging option enabled:

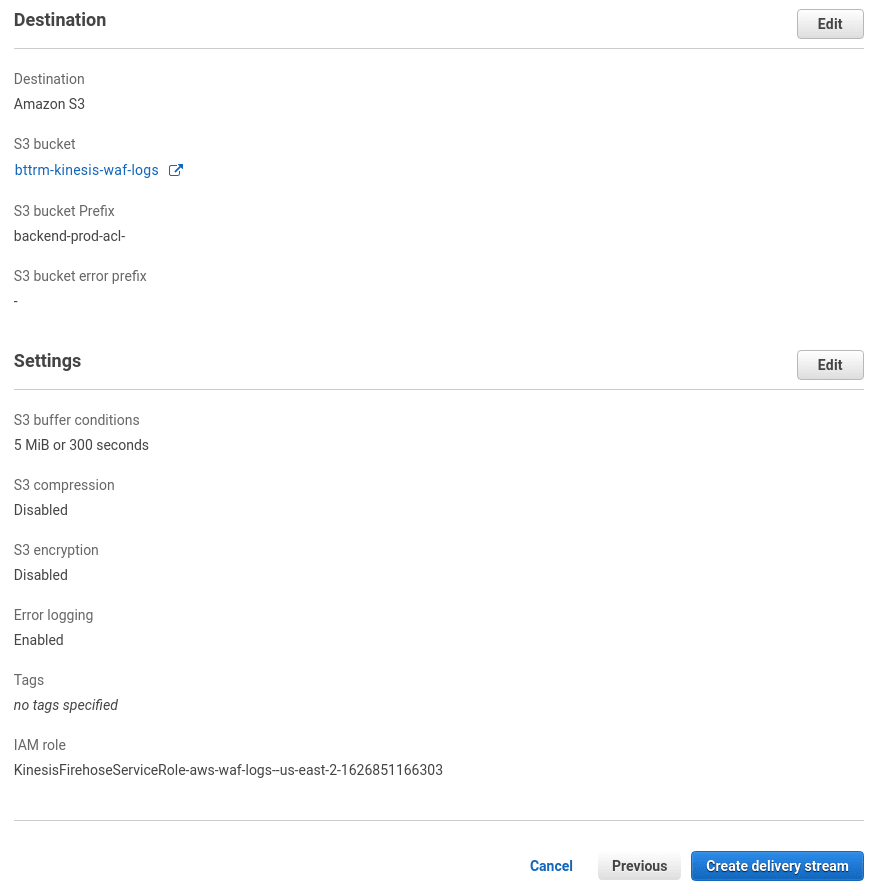

Check the settings, and create the stream:

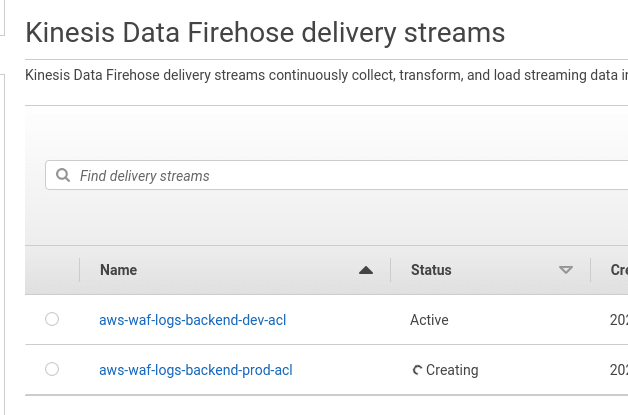

Wait a couple of minutes for the stream to be created:

Or just go to a WebACL.
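The same stream can also be created through the API instead of the console. Below is a minimal sketch of the request, assuming boto3 is installed and that an IAM role allowing Firehose to write to the bucket already exists (the console creates one for you; here `role_arn` has to be supplied). The stream name, bucket, role, and prefix values are placeholders, not values from this setup.

```python
WAF_STREAM_PREFIX = "aws-waf-logs-"


def build_firehose_request(stream_name, bucket_arn, role_arn, s3_prefix):
    """Build the arguments for Firehose create_delivery_stream."""
    # AWS WAF only accepts delivery streams whose name starts with this prefix.
    if not stream_name.startswith(WAF_STREAM_PREFIX):
        raise ValueError(f"stream name must start with {WAF_STREAM_PREFIX!r}")
    return {
        "DeliveryStreamName": stream_name,
        # "Direct PUT or other sources" in the console
        "DeliveryStreamType": "DirectPut",
        "ExtendedS3DestinationConfiguration": {
            "RoleARN": role_arn,      # assumption: an existing role for Firehose
            "BucketARN": bucket_arn,
            "Prefix": s3_prefix,      # keeps logs of different ACLs apart
        },
    }


def create_stream(request):
    """Send the request to AWS; needs boto3 and valid AWS credentials."""
    import boto3  # third-party, imported lazily so the builder works without it

    return boto3.client("firehose").create_delivery_stream(**request)
```

Calling `create_stream(build_firehose_request(...))` would then create the stream; the builder alone is enough to check the name and prefix before touching AWS.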

WAF ACL logging

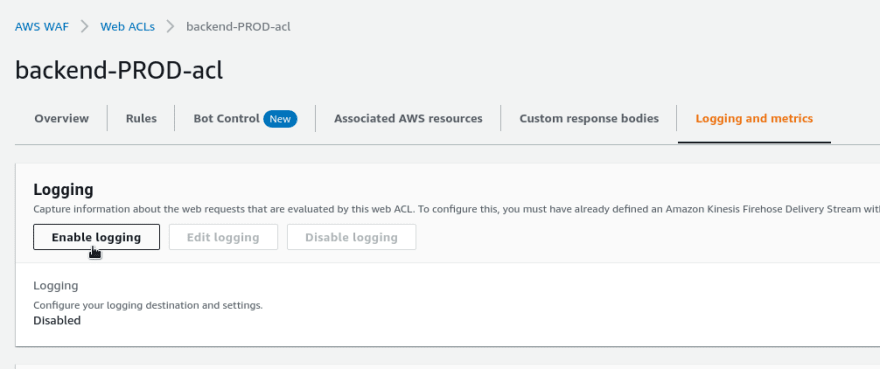

Go to the ACL, and on the Logging and metrics tab click Enable logging:

Choose the stream created above:

Here we can also enable filters, which we will speak about below.
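For reference, enabling logging from code might look roughly like the sketch below. It assumes boto3 and the WAFv2 `put_logging_configuration` call; the ARNs and region are placeholders that you would replace with your own.

```python
def build_logging_configuration(web_acl_arn, firehose_arn):
    """Build the LoggingConfiguration structure for a WebACL."""
    # A Firehose ARN ends with "deliverystream/<name>".
    stream_name = firehose_arn.rsplit("/", 1)[-1]
    if not stream_name.startswith("aws-waf-logs-"):
        raise ValueError("WAF requires a stream named aws-waf-logs-*")
    return {
        "ResourceArn": web_acl_arn,
        "LogDestinationConfigs": [firehose_arn],
    }


def enable_logging(configuration, region):
    """Apply the configuration; needs boto3 and valid AWS credentials."""
    import boto3  # third-party, imported lazily

    client = boto3.client("wafv2", region_name=region)
    return client.put_logging_configuration(LoggingConfiguration=configuration)
```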

Logz.io S3 Bucket

Documentation is here>>>.

First, we need to create an IAM policy and a role that will provide access to the S3 bucket with the logs. Check the documentation here>>>.

Logz.io will do AssumeRole via this role to get permissions to access the bucket.

Logz.io S3 configuration

Go to the S3 Bucket page and click Add a bucket:

Choose Authenticate with a role:

Specify the bucket’s name and click Get the role policy:

IAM policy

Copy the policy content, go to AWS IAM, and create a new policy:

Add the JSON from Logz.io:

Save the policy:

IAM role

Go to AWS IAM Roles and create a new role.

Choose Another AWS account, specify the Account ID and External ID from the Logz.io page:

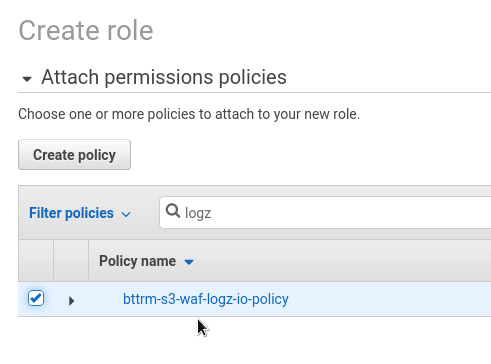

On the Permissions page, attach the policy that we’ve created above:

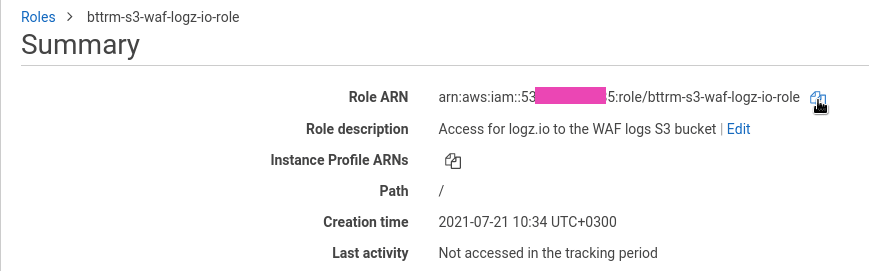

Save the role:

In its Trusted entities, we can see the Account ID that will be able to assume that role.
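Behind the console form, the "Another AWS account" option with an External ID produces a trust policy along the lines of the sketch below. The account ID and external ID used here are made-up placeholders; the real ones come from the Logz.io page.

```python
import json


def build_trust_policy(account_id, external_id):
    """Trust policy letting another AWS account assume the role with an External ID."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # The external account that is allowed to call sts:AssumeRole
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                "Action": "sts:AssumeRole",
                # The caller must present the agreed External ID
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder values for illustration only
    print(json.dumps(build_trust_policy("111111111111", "example-external-id"), indent=2))
```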

Copy the role’s ARN:

Go back to Logz.io, set the ARN, and choose an AWS Region.

In the data type, select other:

And set its value to awswaf (see all types here>>>), so that ELK will be able to parse the JSON logs:

Click Save, and the bucket will be connected to your Logz.io account.

WAF logs — fields

Now, we need to attach an ALB to the WebACL and wait for traffic to see something in the ACL’s logs.

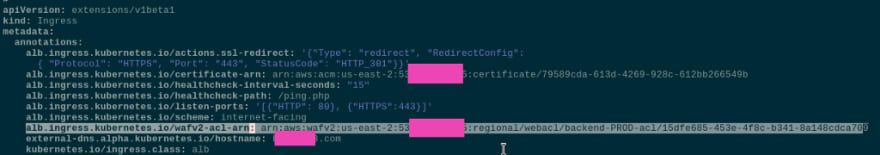

Attach the ACL to an Ingress with the alb.ingress.kubernetes.io/wafv2-acl-arn annotation:

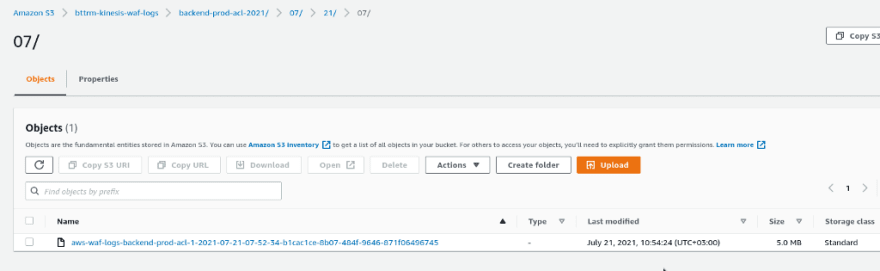

In a couple of minutes, check the S3 bucket: under our prefix, a new directory named after the year will be created, which is added by Kinesis:

And the first log file:

Again, wait a couple of minutes for the log to be delivered to Kibana.
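The year directory mentioned above is the start of the UTC time prefix in the YYYY/MM/dd/HH format that Firehose appends to the custom prefix by default. A small sketch that computes where to look for objects written at a given time; the prefix value is a placeholder.

```python
from datetime import datetime, timezone


def expected_s3_prefix(custom_prefix, when):
    """Return the key prefix Firehose uses by default for objects written at `when`.

    `when` must be a timezone-aware datetime; it is converted to UTC first,
    because the default Firehose prefix is based on UTC time.
    """
    return custom_prefix + when.astimezone(timezone.utc).strftime("%Y/%m/%d/%H/")


if __name__ == "__main__":
    print(expected_s3_prefix("waf/example-acl/", datetime.now(timezone.utc)))
```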

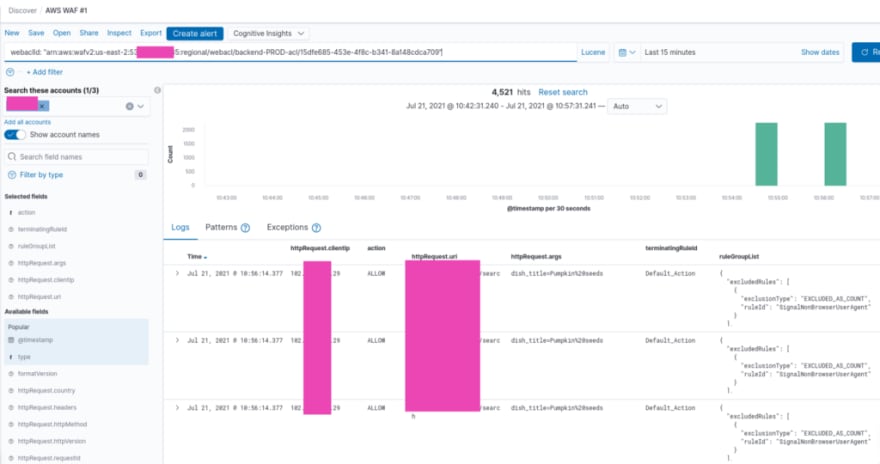

ELK will parse its fields, and we can use them during searches.

There are two main fields to search by: type: "awswaf", to see all WAF logs, or webaclId, to select only a specific WebACL's logs:

Also, it’s worth adding the following fields to the results table (see all available fields in the documentation>>>):

- httpRequest.clientIp: the IP a request originated from

- httpRequest.uri: the URI used in a request

- httpRequest.args: its arguments

- terminatingRuleId: the WebACL rule that was the final one, with ALLOW or BLOCK

- ruleGroupList: all rules of the WebACL that inspected a request
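If you want to look at the same fields outside Kibana, for example in a file downloaded from the bucket, a record can be parsed with a few lines of Python. This is a sketch only: the sample record below is invented for illustration, and it assumes each log record is a single JSON document.

```python
import json

# Hypothetical record for illustration; real records contain more fields.
SAMPLE_LOG_LINE = json.dumps({
    "timestamp": 1625472000000,
    "webaclId": "arn:aws:wafv2:us-east-1:111111111111:regional/webacl/example-acl/abc",
    "terminatingRuleId": "Default_Action",
    "action": "ALLOW",
    "httpRequest": {
        "clientIp": "203.0.113.10",
        "uri": "/example/126/like",
        "args": "",
    },
})


def summarize(log_line):
    """Extract the fields listed above from one WAF log record."""
    record = json.loads(log_line)
    request = record.get("httpRequest", {})
    return {
        "webaclId": record.get("webaclId"),
        "action": record.get("action"),
        "terminatingRuleId": record.get("terminatingRuleId"),
        "clientIp": request.get("clientIp"),
        "uri": request.get("uri"),
        "args": request.get("args"),
    }


if __name__ == "__main__":
    print(summarize(SAMPLE_LOG_LINE))
```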

Let’s see what exactly we can find in a log message.

First, let’s check a request that was allowed to access our application, i.e. with the ALLOW action:

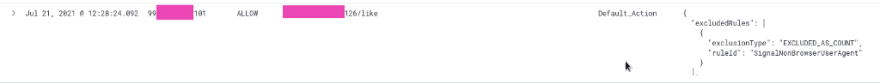

Here is a request that came from the IP 99...101 to the URI /***/126/like. It was inspected by the rules listed in ruleGroupList, and was finally allowed by the Default_Action of the WebACL.

Also, in ruleGroupList we can see that one rule matched the request, SignalNonBrowserUserAgent, but since we have that rule set to the COUNT action, it was only counted and did not block the request:

And finally, in terminatingRuleId we see that the Default_Action was applied, because SignalNonBrowserUserAgent was excluded due to the COUNT action.

If a request was blocked, then in terminatingRuleId we will see the rule that was applied with the BLOCK action:
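The reasoning above (which rule terminated the request, and which rules matched but were only counted) can be sketched in code. This assumes the ruleGroupList layout with an excludedRules list and the EXCLUDED_AS_COUNT exclusion type; the rule group name in the sample is a placeholder, and your records may differ.

```python
def counted_rules(record):
    """Return IDs of rule-group rules that matched but were overridden to COUNT."""
    found = []
    for group in record.get("ruleGroupList") or []:
        # excludedRules may be missing or null when nothing was overridden
        for rule in group.get("excludedRules") or []:
            if rule.get("exclusionType") == "EXCLUDED_AS_COUNT":
                found.append(rule.get("ruleId"))
    return found


def explain(record):
    """Describe in one line why a request was allowed or blocked."""
    line = f"{record.get('action')} by {record.get('terminatingRuleId')}"
    counted = counted_rules(record)
    if counted:
        line += f" (counted only: {', '.join(counted)})"
    return line


# Hypothetical record shaped like the allowed request discussed above
SAMPLE_RECORD = {
    "action": "ALLOW",
    "terminatingRuleId": "Default_Action",
    "ruleGroupList": [
        {
            "ruleGroupId": "example-rule-group",  # placeholder name
            "terminatingRule": None,
            "excludedRules": [
                {"exclusionType": "EXCLUDED_AS_COUNT",
                 "ruleId": "SignalNonBrowserUserAgent"}
            ],
        }
    ],
}
```

For the sample record, `explain(SAMPLE_RECORD)` reports that the request was allowed by Default_Action while SignalNonBrowserUserAgent was only counted.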

Redacted fields — excluding data from logs

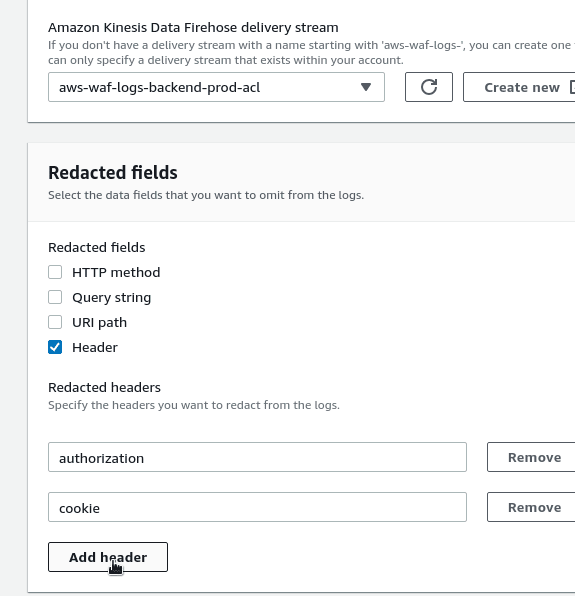

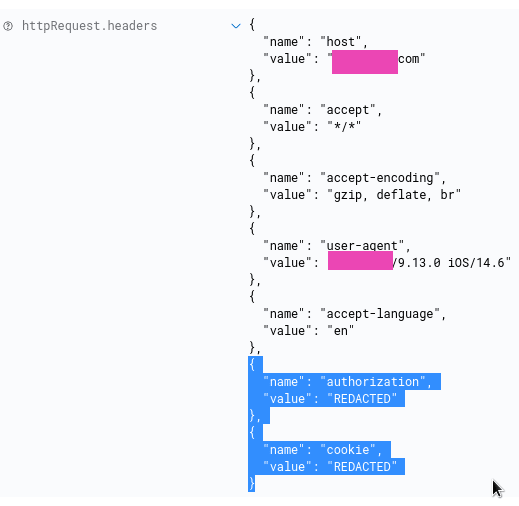

In the log, we can get some data that we don’t want to be there, such as cookies info or authentication tokens:

To remove that data, go to the logging settings, and in Redacted fields choose the fields that need to be excluded.

In this case, that is Headers and two fields from there, authorization and cookie:

Apply the changes, and you’ll now see their values as REDACTED:
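In the WAFv2 API, the same setting is the RedactedFields list of the logging configuration, where each header is a SingleHeader entry. A minimal sketch of building that list; the base configuration passed in is a placeholder, and applying it would still require a `put_logging_configuration` call.

```python
def build_redacted_fields(header_names):
    """Build RedactedFields entries for the given request headers."""
    # Names are lowercased here as a convention for this sketch
    return [{"SingleHeader": {"Name": name.lower()}} for name in header_names]


def with_redaction(logging_configuration, header_names):
    """Return a copy of a logging configuration with RedactedFields set."""
    updated = dict(logging_configuration)
    updated["RedactedFields"] = build_redacted_fields(header_names)
    return updated
```

For example, `with_redaction(config, ["authorization", "cookie"])` mirrors the two headers chosen above.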

Logz.io alerting

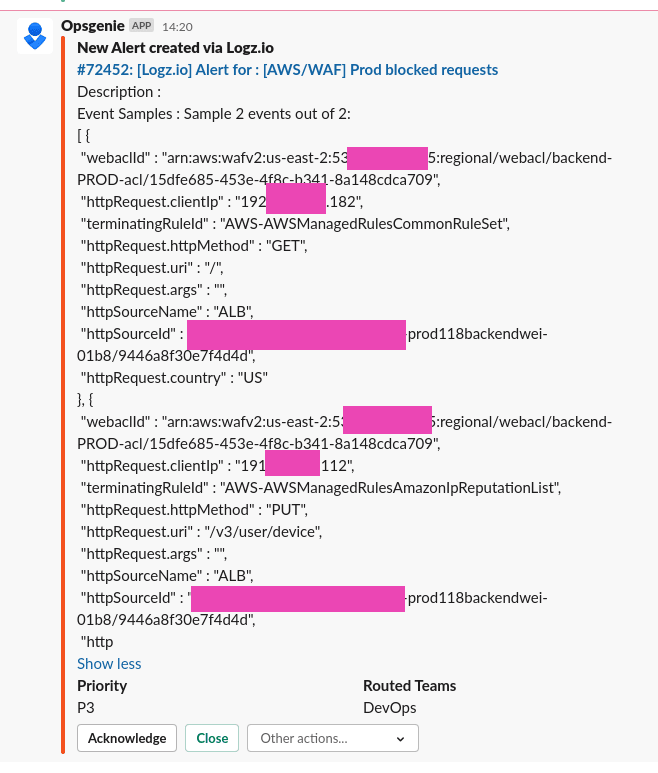

Also, it’s a good idea to get notifications on blocks.

Let’s create an alert via the Logz.io integration with Opsgenie, and Opsgenie will send notifications to our Slack.

Describe the alert’s conditions, and in the Filters add action != ALLOW:
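To get a feel for what this filter will match before turning the alert on, the same condition can be applied to already parsed log records. This is an illustrative sketch with made-up records, not part of the Logz.io setup itself.

```python
from collections import Counter


def non_allowed_by_rule(records):
    """Count records whose action is not ALLOW, grouped by terminating rule."""
    not_allowed = [r for r in records if r.get("action") != "ALLOW"]
    return Counter(r.get("terminatingRuleId") for r in not_allowed)


# Hypothetical records; rule names are placeholders
SAMPLE_RECORDS = [
    {"action": "ALLOW", "terminatingRuleId": "Default_Action"},
    {"action": "BLOCK", "terminatingRuleId": "ExampleRuleA"},
    {"action": "BLOCK", "terminatingRuleId": "ExampleRuleA"},
    {"action": "BLOCK", "terminatingRuleId": "ExampleRuleB"},
]
```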

Choose a channel to send alerts to, and fields that you’d like to see in a message:

Wait for an alert in Slack:

Done.

Originally published at RTFM: Linux, DevOps, and system administration.
