Hey friends! 👋 Welcome back to #TrendingTuesday

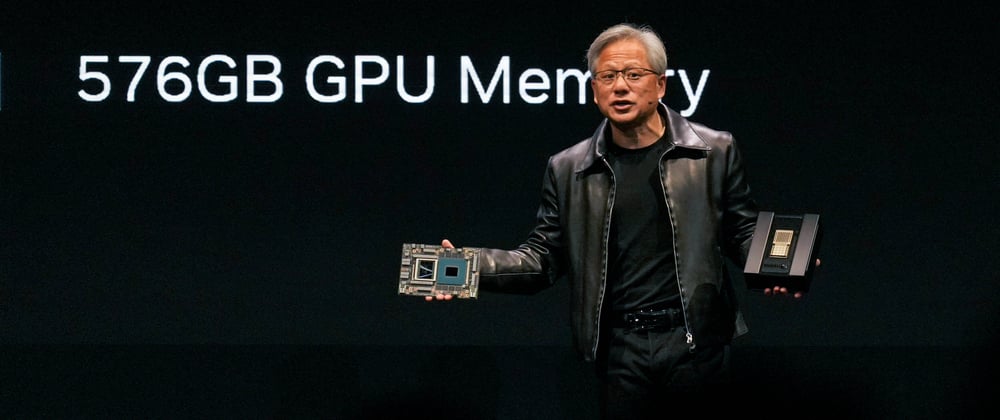

This week, in honour of NVIDIA (the semiconductor giant behind CUDA) gaining $200 billion in market value overnight and heading towards a $1 trillion valuation 😵, we'll look at the fastest-growing CUDA repos of the week.

Our weekly trending list takes into account the historical performance of similar repos and the repo's current growth stage. For this reason, you’ll find some gems here that you won’t see anywhere else! 💎

Remember to follow us on Twitter and stay up-to-date with new product announcements! 🐦

🔟 In tenth place we have GroundingDINO, the official implementation of the Grounding DINO paper. The model combines a Transformer-based detector with a special kind of training called grounded pre-training, which lets it understand and detect different objects based on language descriptions, and it achieved impressive results on multiple evaluation benchmarks. GroundingDINO is already a dependency of 8 repositories.

The project has ~78 issues and ~12 contributors.

9️⃣ After a few weeks away, bitsandbytes is back on our trending list! 🙌 This repo is a lightweight wrapper around custom CUDA functions that simplifies working with CUDA, in particular 8-bit optimisers (1), quantisation functions (2) and matrix multiplication (LLM.int8()) (3).

For further understanding: (1) 8-bit optimisers make calculations faster and save memory by using 8-bit values, while matching the performance of traditional 32-bit optimisers. (2) Quantisation functions store values with lower precision, trading a little accuracy for lower memory use and simpler calculations. (3) LLM.int8() is a technique that reduces the memory requirements of large language models at inference time without degrading their performance. 👯♀️
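To make (2) a little more concrete, here is a minimal sketch of absmax quantisation to 8 bits in plain Python. It is a generic illustration under our own assumptions (one scale for the whole list, and the hypothetical helper names `quantize_absmax_int8` and `dequantize_absmax_int8`); it is not the bitsandbytes API, and the library's own kernels use more refined schemes such as block-wise quantisation on GPU tensors.

```python
def quantize_absmax_int8(values):
    """Scale floats so the largest magnitude maps to 127, then round to ints."""
    absmax = max((abs(v) for v in values), default=0.0)
    if absmax == 0.0:
        # All zeros (or empty): any scale works, so use 1.0
        return [0] * len(values), 1.0
    scale = 127 / absmax
    return [round(v * scale) for v in values], scale


def dequantize_absmax_int8(codes, scale):
    """Recover approximate floats; the error per value is at most 0.5 / scale."""
    return [c / scale for c in codes]


weights = [0.5, -1.27, 0.01, 1.0]
codes, scale = quantize_absmax_int8(weights)
restored = dequantize_absmax_int8(codes, scale)
print(codes)     # [50, -127, 1, 100]
print(restored)  # close to the original weights, but not identical
```

Each code fits in one signed byte instead of four, which is where the memory saving comes from; the rounding step is where the small loss of precision comes from.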

The repo has ~255 issues and ~22 contributors.

This was brought to you by @tim_dettmers!

8️⃣ Next is DABA, brought to you by none other than Meta Research (@MetaOpenSource)!

Bundle adjustment is an optimisation problem used in computer vision and robotics to improve the accuracy of 3D reconstructions or virtual reality environments: it jointly refines the camera poses and 3D point positions so that each point, projected into each camera, lines up with where it was actually observed. This repo implements Decentralized and Accelerated Bundle Adjustment, which addresses the compute and communication bottlenecks of bundle adjustment problems of arbitrary scale. 🗼
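To give a feel for what bundle adjustment optimises, here is a minimal Python sketch of the standard objective: the sum of squared reprojection errors under a simple pinhole camera model. The camera representation (rotation matrix `R`, translation `t`, focal length `f`, principal point `cx`, `cy`) and the helper names are our own assumptions for illustration. A solver such as DABA minimises an objective of this kind over all cameras and points; this snippet only evaluates it.

```python
def project(R, t, f, cx, cy, X):
    """Project world point X into pixel coordinates with a pinhole camera."""
    # Camera-frame coordinates: Xc = R @ X + t
    xc = [sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 0:
        raise ValueError("point is behind the camera")
    return (f * xc[0] / xc[2] + cx, f * xc[1] / xc[2] + cy)


def reprojection_cost(cameras, points, observations):
    """Sum of squared pixel errors over (camera_idx, point_idx, (u, v)) observations."""
    total = 0.0
    for cam_idx, pt_idx, (u, v) in observations:
        R, t, f, cx, cy = cameras[cam_idx]
        pu, pv = project(R, t, f, cx, cy, points[pt_idx])
        total += (u - pu) ** 2 + (v - pv) ** 2
    return total


identity = ((1, 0, 0), (0, 1, 0), (0, 0, 1))
cameras = [(identity, (0.0, 0.0, 0.0), 500.0, 320.0, 240.0)]
points = [(1.0, 0.0, 5.0)]
observations = [(0, 0, (421.0, 240.0))]  # observed 1 pixel to the right of the projection
print(reprojection_cost(cameras, points, observations))  # 1.0
```

Bundle adjustment then tweaks the entries of `cameras` and `points` (typically with a Gauss-Newton or Levenberg-Marquardt style solver) to drive this cost down; the hard part at scale is doing that across millions of observations, which is the bottleneck DABA targets.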

The repo is the official implementation of this paper.

The project currently has ~1 issue and ~2 contributors.

7️⃣ RAD-NeRF contains a PyTorch implementation of Dynamic Neural Radiance Fields (NeRF), used here to create detailed 3D models of talking portraits. By decomposing the representation into smaller parts and using dedicated grids for spatial and audio information, the authors achieve realistic, audio-synchronised results more efficiently than previous methods. 💪

Pretty neat demonstrations can be found on their webpage!

It has ~37 issues and ~3 contributors.

6️⃣ llmtune is brought to you by a research group at Cornell! The repo allows finetuning LLMs (e.g. the largest 65B LLaMA models) on as little as one consumer-grade GPU. A clear benefit of being able to finetune such large models on a single GPU is that, in future, multiple GPUs could work together on even bigger models! 💻
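Some back-of-the-envelope arithmetic shows why fitting a 65B model on one GPU is hard: the memory needed just to hold the weights is the number of parameters times the bits per parameter. The sketch below (the helper name `weight_memory_gb` is ours) counts weights only and ignores activations, gradients and optimiser state, so real finetuning needs more; it also doesn't describe how llmtune itself fits models onto a GPU.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for bits in (32, 16, 8, 4):
    print(f"65B params at {bits:>2}-bit: {weight_memory_gb(65e9, bits):.1f} GB")
# 65B params at 32-bit: 260.0 GB
# 65B params at 16-bit: 130.0 GB
# 65B params at  8-bit: 65.0 GB
# 65B params at  4-bit: 32.5 GB
```

This is why quantisation, which several other repos on this list focus on, matters so much for fitting the largest models onto a single GPU.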

The project is managed by the Kuleshov Group (@volokuleshov) with @kuleshov as the maintainer. The repo currently has ~12 issues.

5️⃣ Next we have SegmentAnything3D, a project extending Meta Research's original Segment Anything repo, which produces high-quality object masks from input prompts such as points or boxes. SegmentAnything3D applies Meta’s Segment Anything Model (SAM) to 3D perception by transferring the segmentation information of 2D images to 3D space.

4️⃣ At number four, we have INSTA, brought to you by the Max Planck Institute for Intelligent Systems in Germany (@MPI_IS)!

This is the implementation of Instant Volumetric Head Avatars, which aims to create a subject-specific digital head avatar from monocular RGB images (images captured with a single camera, as opposed to a stereo or multi-view setup). 🤳

The maintainer for this project is @w_zielonka and the repo currently has ~2 issues.

3️⃣ In third place, we have exllama, a more memory-efficient rewrite of the HF transformers (1) implementation of Llama (2) for use with quantised weights (3).

For further understanding: (1) HF transformers (Hugging Face Transformers) is a user-friendly library of powerful transformer models used for language-processing tasks like translation and text generation. (2) LLaMA is a collection of powerful language models ranging from 7 billion to 65 billion parameters, trained on publicly available datasets. (3) Quantised weights are numerical values represented with fewer bits, which makes computations faster and saves memory (at a slight loss in precision, but you can’t have everything in life 😛).
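As a rough illustration of (3), here is a minimal Python sketch of 4-bit quantisation that also packs two 4-bit codes into each byte, which is how the memory saving is actually realised. It is a simplified example under our own assumptions (one scale for the whole list, hypothetical helper names); it is not exllama's storage format, and real 4-bit formats such as those produced by GPTQ typically keep a separate scale (and zero point) for each small group of weights.

```python
def quantize_4bit(values):
    """Map floats to signed levels in [-7, 7], stored as unsigned nibbles 1..15."""
    absmax = max((abs(v) for v in values), default=0.0)
    scale = 7 / absmax if absmax else 1.0
    # Shift by 8 so every code fits in an unsigned 4-bit nibble; 8 means zero
    return [round(v * scale) + 8 for v in values], scale


def pack_nibbles(codes):
    """Pack two 4-bit codes per byte (low nibble first)."""
    if len(codes) % 2:
        codes = codes + [8]  # pad an odd-length list with the code for zero
    return bytes((codes[i] & 0xF) | ((codes[i + 1] & 0xF) << 4)
                 for i in range(0, len(codes), 2))


def unpack_nibbles(packed, n):
    """Undo pack_nibbles and drop any padding beyond the first n codes."""
    codes = []
    for b in packed:
        codes.extend((b & 0xF, b >> 4))
    return codes[:n]


def dequantize_4bit(codes, scale):
    return [(c - 8) / scale for c in codes]


weights = [0.7, -0.7, 0.0, 0.2]
codes, scale = quantize_4bit(weights)
packed = pack_nibbles(codes)
print(len(weights), "weights ->", len(packed), "bytes")  # 4 weights -> 2 bytes
restored = dequantize_4bit(unpack_nibbles(packed, len(codes)), scale)
```

With only 15 levels the rounding error is much larger than in the 8-bit case, which is exactly the "slight loss in precision" trade-off mentioned above.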

The repo has ~6 issues.

2️⃣ In second place we have AutoGPTQ, an easy-to-use LLM quantisation package with user-friendly APIs, based on the GPTQ algorithm. GPTQ is a recent post-training quantisation method that compresses GPT models enough for them to run on a single GPU. 🔨

The repo has ~20 issues and ~11 contributors.

1️⃣ The top place goes to Lidar_AI_Solution. This repo contains highly optimised solutions for self-driving car systems that use 3D lidar (light detection and ranging) sensors.

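This project speeds up various components such as sparse convolution (convolution that only computes at occupied locations, which suits sparse lidar point clouds), CenterPoint (a 3D object detection model for lidar point clouds), BEVFusion (fusing camera and lidar features into a bird's-eye-view representation for a comprehensive understanding of the environment), OSD (on-screen display, i.e. drawing results such as boxes and labels onto images) and data conversion. 🚙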

The project is brought to you by the company that’s been at the centre of attention: NVIDIA (@nvidiadeveloper)! 🚀

The repo has ~7 issues and ~3 contributors.

That’s it for this one! Congrats to all the awesome CUDA projects and teams - you killed it! 💪⚡️

We’ll continue to write about trending repos on different topics and languages, so make sure to spread the love by liking and following. Also, do get in touch with us on Discord and Twitter!

📣 shameless promotion: Do you want to contribute to a CUDA or ML project? We indexed the open source ecosystem to help you find the hottest issues in the coolest GitHub repos! Check out Quine - you can thank us later 😌🤗
