Serverless

Well, everyone knows what serverless is. But just in case, a short reminder. Serverless, in this case AWS Lambda, allows us to run code without... anything. Well, almost.

AWS Lambda works in the PaaS model. Actually, we started to call serverless... FaaS.

Computing models

Let's deal with PaaS first.

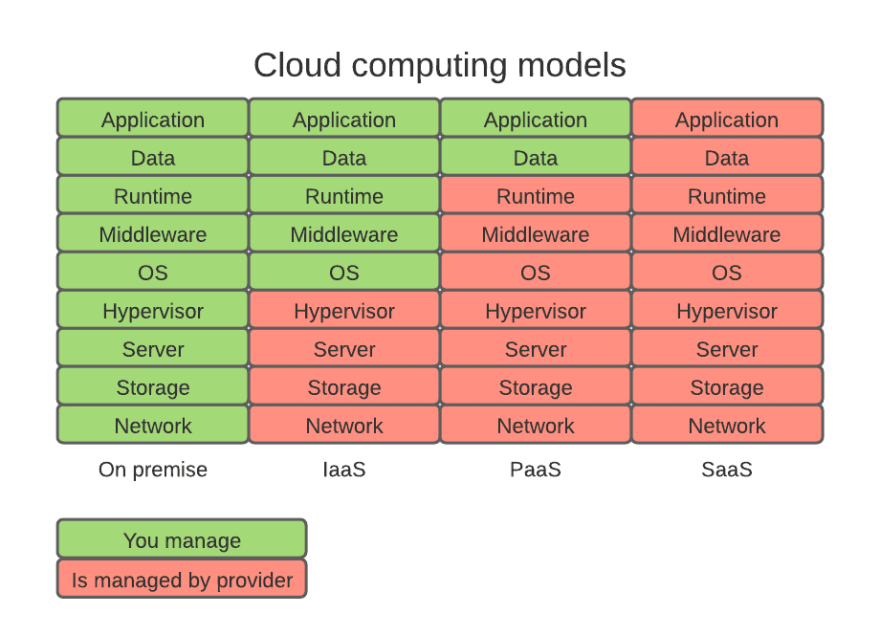

As the image above shows, PaaS is one of the three main ways of delivering a platform to clients. On-premises is different in that you are solely responsible for everything (or almost everything; there may be some agreements related to WAN, air conditioning, power supplies, etc.).

IaaS, PaaS and SaaS work with a concept called the Shared Responsibility Model. In a few words, this concept explains and sets the rules and boundaries of who is responsible for what.

In the picture above, green means you are responsible; red means the responsibility lies with the provider. More about the Shared Responsibility Model is here.

Now, PaaS is the model in which Lambda functions operate in AWS. The provider supplies the platform: infrastructure including load balancing, infrastructure security, scalability, high availability and the runtime*. You are responsible for delivering the data and the code.

*) The truth is, we can provide a custom runtime, but that is outside the scope of this article.

FaaS

I mentioned FaaS. We started to call serverless PaaS... Function as a Service. It started as fun, and now we have to live with that :)

The goal of the first part

In the first part of the series we will see, or I should say feel, the pain of monitoring AWS Lambda.

Let's not fool ourselves. AWS Lambda, like serverless in general, gives us very limited monitoring in its default setup. In this part we will see what we get by default.

The code

As this series is not about deploying Lambda, I will not show the deployment or explain the SAM framework. You have to believe me, it works :)

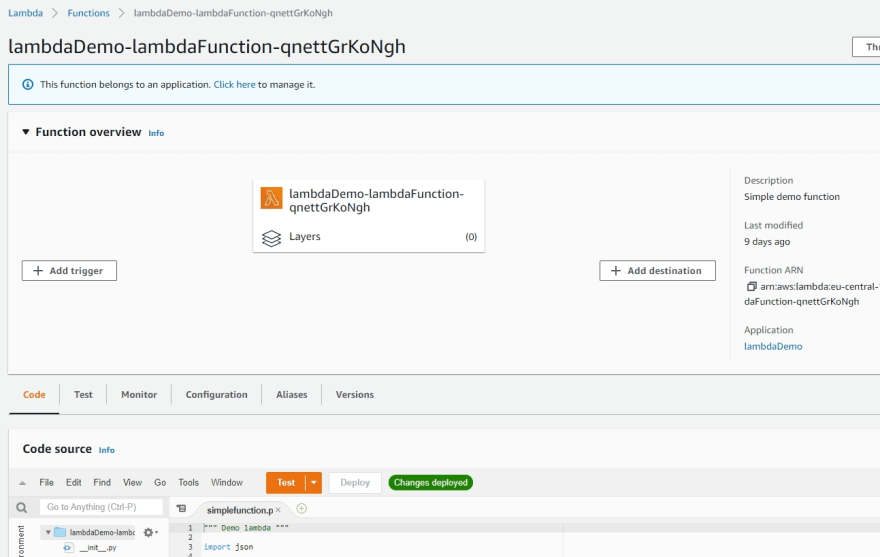

For the curious: I deployed a simple Lambda function through AWS CodePipeline, using the SAM framework.

The whole template is simple, and as we will work on a very similar one later, I print it below:

    AWSTemplateFormatVersion: 2010-09-09
    Transform: AWS::Serverless-2016-10-31
    Description: Simple Lambda
    Resources:
      lambdaFunction:
        Type: AWS::Serverless::Function
        Properties:
          Handler: simplefunction.handler
          CodeUri: lambdafunction/
          Runtime: python3.8
          AutoPublishAlias: live
          Description: Simple demo function
          MemorySize: 128
          Timeout: 10
          Events:
            simpleApi:
              Type: Api
              Properties:
                Path: /
                Method: get

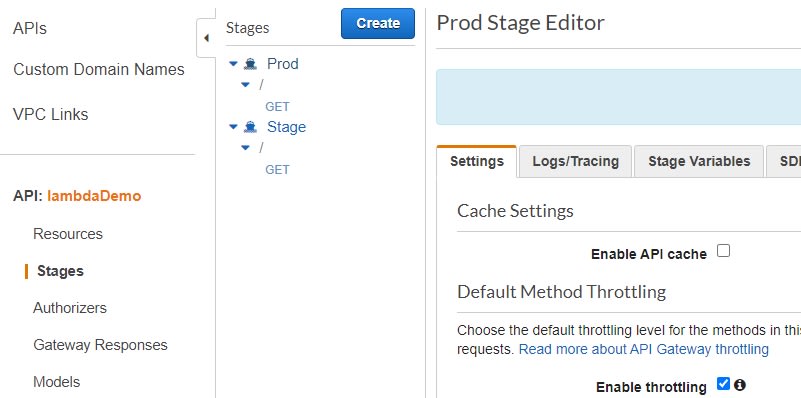

What do we have here? A simple Lambda function with API Gateway. The API contains only one endpoint, /.

Here are two pictures of the deployed template:
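The function code itself is not shown in this post. For orientation only, here is a minimal sketch of what `simplefunction.py` could look like, given `Handler: simplefunction.handler` in the template. The response body is my assumption, not the deployed code.

```python
import json


def handler(event, context):
    """Entry point referenced as simplefunction.handler in the SAM template."""
    # With the proxy integration that SAM sets up for an Api event,
    # API Gateway expects a dict with a statusCode and a string body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        # Hypothetical payload, for illustration only.
        "body": json.dumps({"message": "hello from Lambda"}),
    }
```

Anything that returns a response in this shape would behave the same way for the purposes of this article.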

Ok, let's send some requests.

I simply call the URL that AWS provides for my API and its deployed stage.
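Opening the URL in a browser is enough, but a short script makes it easier to generate a handful of invocations in one go. A minimal sketch using only the standard library; the function name `call_api`, its parameters, and the placeholder URL in the comment are my assumptions.

```python
import time
import urllib.request


def call_api(url, count=10, pause=0.5, fetch=urllib.request.urlopen):
    """Send `count` GET requests to `url` and return the HTTP status codes."""
    statuses = []
    for _ in range(count):
        # urlopen raises urllib.error.HTTPError for 4xx/5xx responses,
        # so only successful calls end up in the list.
        with fetch(url) as response:
            statuses.append(response.status)
        time.sleep(pause)
    return statuses


# Usage (placeholder URL, replace with the invoke URL of your own stage):
# print(call_api("https://<api-id>.execute-api.<region>.amazonaws.com/<stage>/"))
```

The `fetch` parameter exists only so the function can be tried without network access by passing a stand-in.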

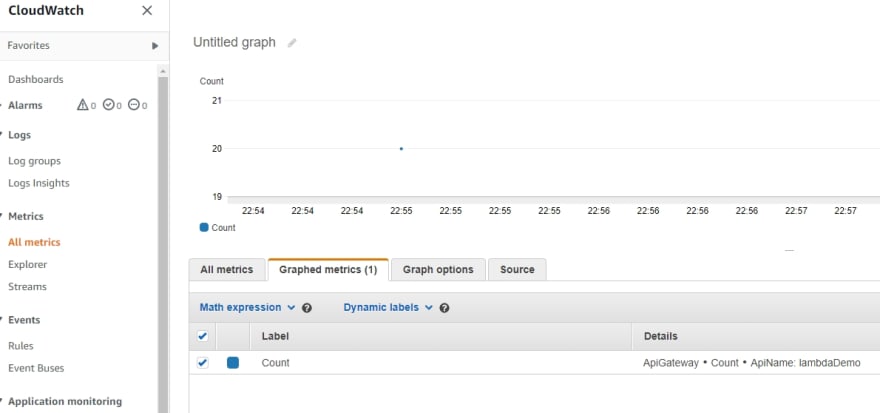

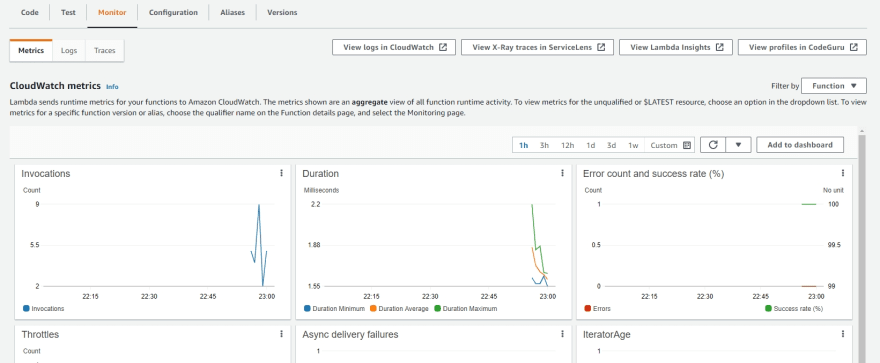

Right, let's see what we can see in the metrics.

Well, if you feel disappointed, you should. Let's look into the Lambda logs, there must be something!

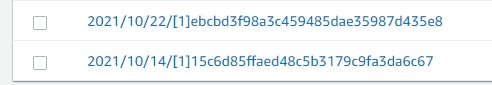

Neat, we are directed to the proper log group! Here we can see another power of the SAM template and its deployment. SAM and CodePipeline took care not only of creating versions of the Lambda, but also of properly showing the referenced data:

Did you notice the version in square brackets?

Ok, let's check the log for one of the invocations.

Oh well... It is far from being useful. I expanded all three records for my invocation. When you run your Lambda from scratch, you will see even less, because I have the XRAY entry with the trace ID; I suppose that is because I enabled tracing in the SAM template (well, habits).

Three lines: START, END, and REPORT with duration, memory used, etc. Wow.

Now, I have a simple Python function. I can try to add print statements, or even be so bold as to create a LOGGER. And it will be just as useless as it is now. Why? Please wait for the second episode of the series :)

Now we will make our Lambda and API ready for X-Ray.
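The REPORT line is the only one of the three that carries numbers, so it can help to pull them out. A minimal sketch, assuming the usual tab-separated REPORT format. The sample line uses made-up values, not the ones from my screenshots, and `parse_report` is a name I picked for illustration.

```python
import re

# Illustrative REPORT line; the request ID and numbers are made up.
SAMPLE = (
    "REPORT RequestId: 11111111-2222-3333-4444-555555555555\t"
    "Duration: 16.20 ms\tBilled Duration: 17 ms\t"
    "Memory Size: 128 MB\tMax Memory Used: 52 MB\t"
)

# Matches "<name>: <number> ms" or "<name>: <number> MB" pairs.
FIELD = re.compile(r"([A-Za-z ]+): ([\d.]+) (ms|MB)")


def parse_report(line):
    """Return the numeric fields of a Lambda REPORT line, or None for other lines."""
    if not line.startswith("REPORT"):
        return None
    fields = {}
    for name, value, unit in FIELD.findall(line):
        fields[f"{name.strip()} ({unit})"] = float(value)
    return fields


print(parse_report(SAMPLE))
```

This only restructures what the default log already contains; it does not add any new information about the invocation.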

Enable X-Ray

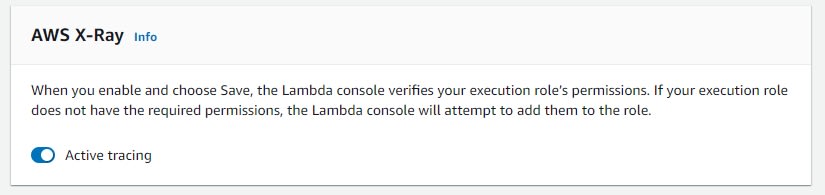

Enable X-Ray in Lambda

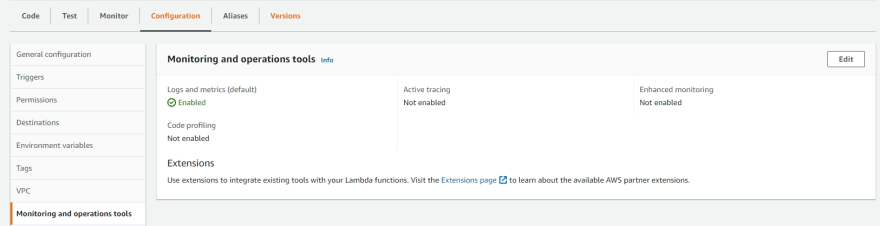

Navigate to your Lambda, then Configuration, and finally Monitoring and operations tools.

Click Edit and tick Active tracing.
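If you prefer a script over the console, the same switch can be flipped through the Lambda API. A minimal sketch, assuming boto3 is installed and credentials are configured; the helper name and the function name in the usage comment are hypothetical.

```python
def enable_lambda_tracing(lambda_client, function_name):
    """Switch a Lambda function to active X-Ray tracing and return the new mode."""
    response = lambda_client.update_function_configuration(
        FunctionName=function_name,
        TracingConfig={"Mode": "Active"},
    )
    return response["TracingConfig"]["Mode"]


# Usage (hypothetical function name):
# import boto3
# print(enable_lambda_tracing(boto3.client("lambda"), "my-simple-function"))
```

Unlike the console, this call does not adjust the execution role for you, so the role needs permission to send trace data to X-Ray.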

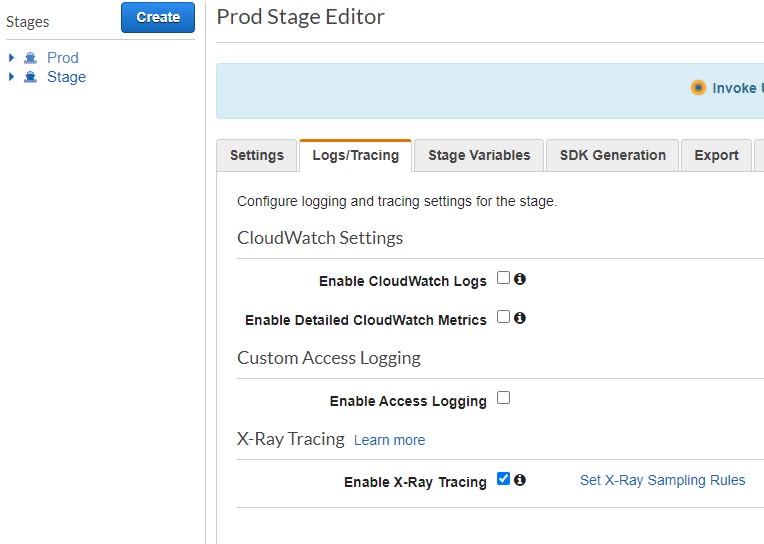

Enable tracing for API

Now it is time to do the same for the API.

In the API, navigate to your stage, then Logs/Tracing, and tick Enable X-Ray Tracing.
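The stage setting can also be changed through the API Gateway API. A minimal sketch for a REST API (which is what the SAM `Api` event creates), assuming boto3 and configured credentials; the helper name and the IDs in the usage comment are placeholders.

```python
def enable_stage_tracing(apigw_client, rest_api_id, stage_name):
    """Turn on X-Ray tracing for one stage of a REST API."""
    response = apigw_client.update_stage(
        restApiId=rest_api_id,
        stageName=stage_name,
        # Patch operations take string values, hence "true" and not True.
        patchOperations=[
            {"op": "replace", "path": "/tracingEnabled", "value": "true"},
        ],
    )
    return response.get("tracingEnabled", False)


# Usage (placeholder IDs):
# import boto3
# print(enable_stage_tracing(boto3.client("apigateway"), "<api-id>", "<stage>"))
```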

You have to wait a short time to allow AWS to collect traces.

AWS will attempt to modify your policies and roles in order to allow the resources to access X-Ray.

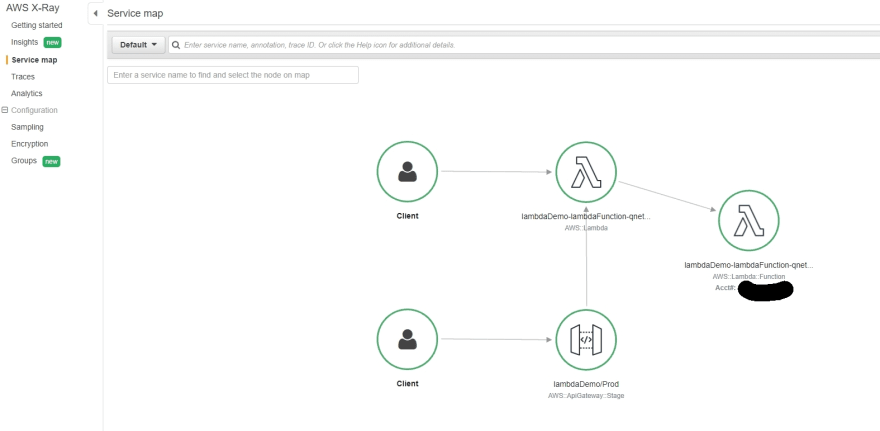

You can go to the X-Ray service, or go to the traces directly from Lambda.

No, nothing new in the logs (maybe only the trace ID).

Go to the X-Ray service (or follow the link in Lambda to ServiceLens), and behold! A new world is here!

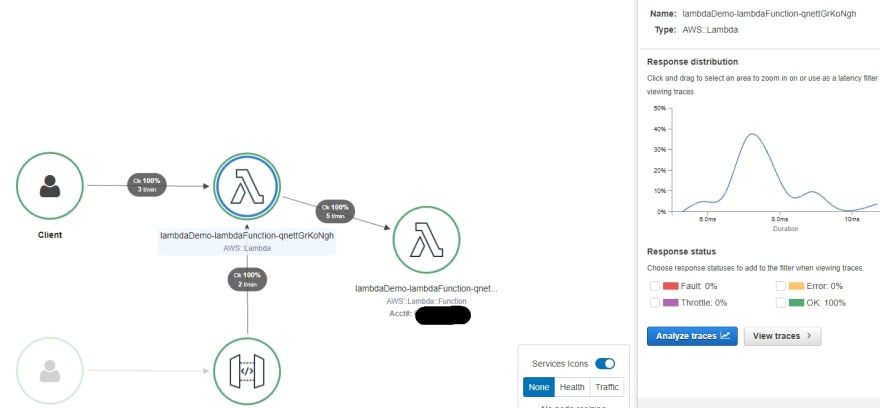

Click on the first circle for Lambda. You will see the response distribution. How great is that!

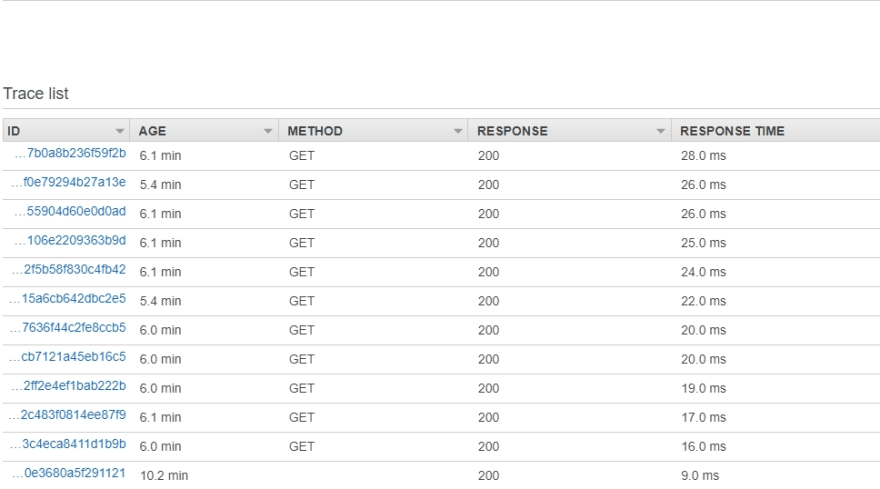

Ok, so... we are getting smarter and smarter! We start to really see what is going on inside our system. Let's go to the traces and check some.

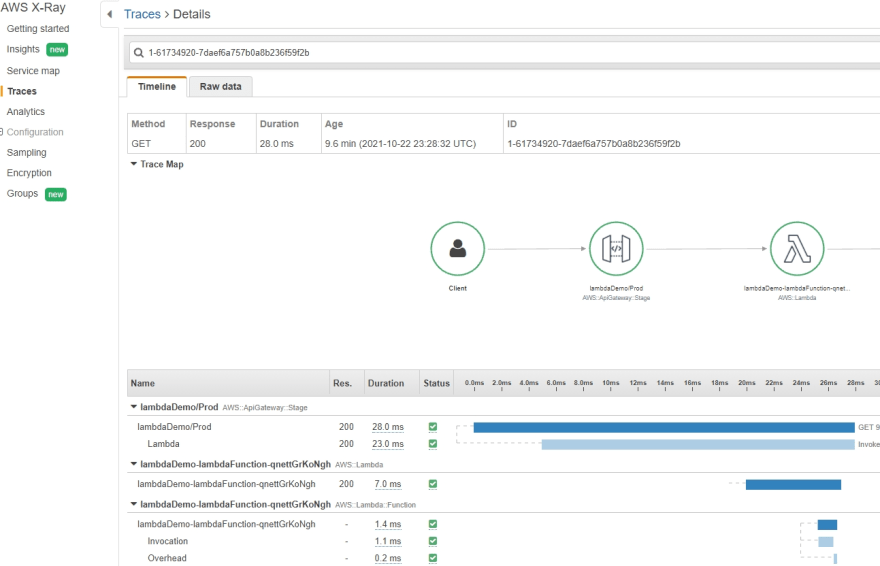

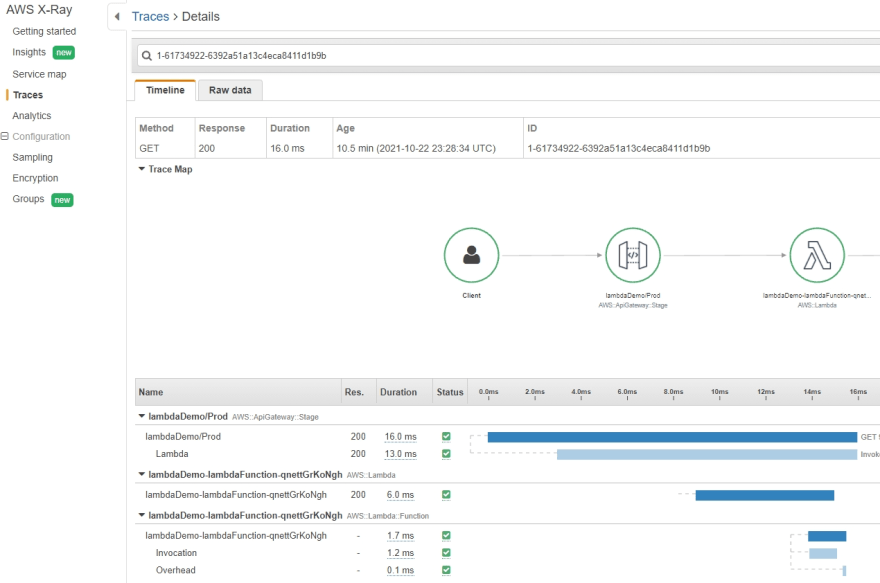

Hmm. OK, now you can say "I see more than before, but what does it mean?". I show you two traces, the longest and the shortest. Both did the same. Both were executed in the same way. One took 28 ms, the other 16 ms. The first request took almost twice as long! What is going on?

You probably know already: it is caused by something called cold start. I know that, some of you know that. And we can extrapolate these metrics to see it. But we don't really know how long this cold start took, or how many invocations suffered from it. Yes, we can create metrics and count it manually or automatically. But, hey! We have tools for it!

I will introduce it in the next episode!

What is cold start? It is the time the provider needs to spin up and provision the resources for your code. Depending on your needs, the runtime, your code base (language), the size and number of dependencies, and a few more elements, this time will differ.

The best (shortest) cold start times are for languages like Python and Node.js; the worst are for Java and .NET, although this has improved significantly in recent years.

Another important point: if you access other resources, that will take longer when a cold start occurred, because of the time needed to establish the connection, etc. Sometimes it may be significant and disruptive. You have to remember that!
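One common manual way to spot cold starts from inside the function relies on the fact that module-level code runs once per execution environment, while the handler runs on every invocation. A minimal sketch of that idea; it is not the tool the next episode introduces, and returning the flag in the response body is only for demonstration.

```python
import json

# Module-level code runs once, when a new execution environment is created.
_is_cold = True


def handler(event, context):
    """Report whether this invocation was the first one in its environment."""
    global _is_cold
    # Read the flag, then clear it so later (warm) invocations see False.
    cold, _is_cold = _is_cold, False
    return {"statusCode": 200, "body": json.dumps({"cold_start": cold})}
```

This tells you that a cold start happened, but not how long it took, which is exactly the gap mentioned above.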
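A frequently used way to keep that cost limited to cold invocations is to create connections outside the handler and reuse them while the environment stays warm. A minimal sketch with the standard library; the host name is a placeholder, `get_connection` is a name I chose, and error handling and reconnects are left out.

```python
import http.client

# Kept at module level so warm invocations can reuse the same connection object.
_connection = None


def get_connection(host="example.com"):
    """Create the connection object on first use and reuse it afterwards."""
    global _connection
    if _connection is None:
        # Nothing is sent yet; the socket is opened on the first request.
        _connection = http.client.HTTPSConnection(host, timeout=5)
    return _connection


def handler(event, context):
    conn = get_connection()
    conn.request("GET", "/")
    response = conn.getresponse()
    response.read()  # The body must be read before the connection can be reused.
    return {"statusCode": response.status, "body": "ok"}
```

The first invocation in a new environment still pays for the connection setup; later ones in the same environment can skip it.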

One more thing. If you wish to enable tracing in the SAM template, this is what you have to add to the Properties section of the Lambda:

Tracing: Active

and

TracingEnabled: true

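to the API definition: the Properties of an AWS::Serverless::Api resource, or the Api section under Globals when the API is created implicitly from the event, as it is here.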
