In the Enterprise Database Security session I presented at Aerospike Summit 2020, I gave an overview of data protection with Aerospike Enterprise.

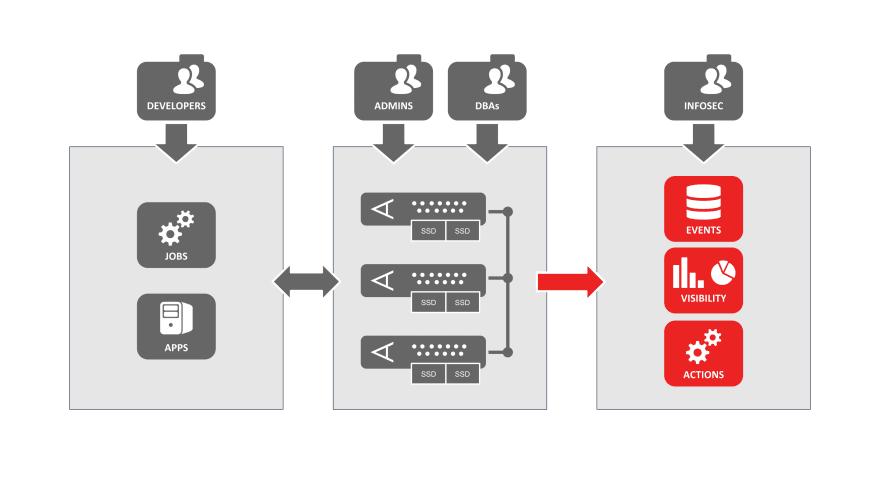

To provide context, the following diagram depicts an Aerospike deployment.

Once all the enterprise security features have been implemented, how do we verify that we’re doing any of this right? How do we get visibility into what has happened in the past, and how do we respond to events as they happen in real time?

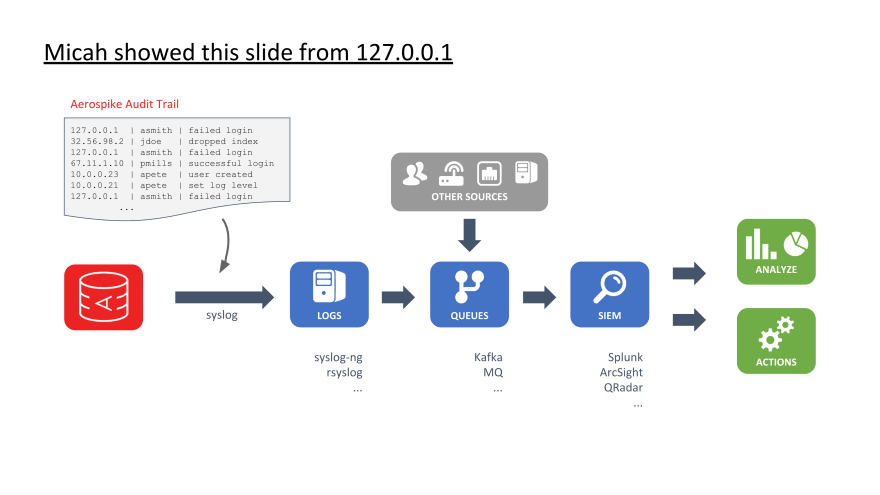

Security Event Architecture

The diagram below depicts an Aerospike node on the left producing a security audit trail and shipping it to a downstream system via syslog. The rest of the diagram shows just one of many possible architectures for consuming Aerospike audit logs. I’ll walk through this one to give you an idea of what’s happening.

First, the audit trail from Aerospike is separate from the standard server logs used for troubleshooting and analysis. It includes events that are relevant for security monitoring, such as authentication events, user administration, and system administration.

The audit trail is shipped using the syslog protocol to a log collector such as syslog-ng, rsyslog, the Elastic Stack, or Splunk agents.
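To make the syslog hand-off a little more concrete, here is a minimal Python sketch of what a collector does first with each incoming line: decode the syslog priority header into a facility and a severity. The `PRI = facility * 8 + severity` encoding comes from the syslog protocol itself; the function name and the sample message text are my own placeholders, not actual Aerospike audit output.

```python
import re

# A syslog line starts with "<PRI>", where PRI = facility * 8 + severity.
PRI_RE = re.compile(r"^<(\d{1,3})>(.*)$", re.DOTALL)


def parse_syslog_pri(line):
    """Split a raw syslog line into (facility, severity, remainder)."""
    match = PRI_RE.match(line)
    if match is None:
        raise ValueError("missing syslog PRI header")
    pri = int(match.group(1))
    facility, severity = divmod(pri, 8)
    return facility, severity, match.group(2)


if __name__ == "__main__":
    # Placeholder message body; the real audit record format is not shown here.
    print(parse_syslog_pri("<134>example audit message"))
```

A collector can use the facility (16 is `local0`, for example) to route audit records separately from other system logs.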

There is often a highly scalable queuing system, such as Kafka, between the log producers and collectors and the downstream consumers. This avoids tight coupling between the systems, so events can be produced and consumed at different rates and system components can be maintained independently.
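The decoupling idea can be sketched in a few lines of Python. This is only an illustration: an in-process `queue.Queue` stands in for a system like Kafka, and `run_pipeline` and the event strings are hypothetical names of my own. The point is that the producer and consumer only ever touch the buffer, never each other.

```python
import queue
import threading

_DONE = object()  # sentinel telling the consumer that the producer has finished


def run_pipeline(events, maxsize=100):
    """Pass events from a producer thread to a consumer thread via a bounded buffer."""
    buffer = queue.Queue(maxsize=maxsize)
    consumed = []

    def producer():
        for event in events:
            buffer.put(event)  # blocks while the buffer is full (back-pressure)
        buffer.put(_DONE)

    def consumer():
        while True:
            item = buffer.get()  # blocks while the buffer is empty
            if item is _DONE:
                break
            consumed.append(item)

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return consumed


if __name__ == "__main__":
    print(run_pipeline([f"event-{i}" for i in range(5)], maxsize=2))
```

A real queuing system adds durability and multiple independent consumers on top of this, which is what lets each side be upgraded or restarted without the other noticing.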

There are also typically events and data from other sources being ingested by the SIEM and/or log analysis platforms. It is in these platforms that all of this security data can be monitored in real time to detect and respond to potential security threats or data breaches. In addition to the real-time monitoring, this also provides security professionals with historical data to use for forensics, audits, training new ML models, etc.
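As a rough illustration of the kind of real-time rule such a platform might run, the Python sketch below flags a user who accumulates several failed authentications inside a sliding time window. The `(timestamp, user, succeeded)` event shape, the function name, and the thresholds are all assumptions made for this example; a real SIEM would work from parsed audit records and its own rule language.

```python
from collections import defaultdict, deque


def detect_repeated_failures(events, threshold=5, window_seconds=60):
    """Return (timestamp, user) alerts for bursts of failed authentications.

    `events` is an iterable of (timestamp_seconds, user, succeeded) tuples,
    assumed to be ordered by timestamp.
    """
    recent_failures = defaultdict(deque)
    alerts = []
    for timestamp, user, succeeded in events:
        if succeeded:
            continue
        failures = recent_failures[user]
        failures.append(timestamp)
        # Drop failures that have aged out of the sliding window.
        while timestamp - failures[0] > window_seconds:
            failures.popleft()
        if len(failures) >= threshold:
            alerts.append((timestamp, user))
    return alerts


if __name__ == "__main__":
    sample = [(0, "app1", False), (10, "app1", False), (20, "app1", False)]
    print(detect_repeated_failures(sample, threshold=3))
```

The same stored events can later be replayed through a rule like this for forensics or audits.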

One final note about the Aerospike audit trail: which events are logged is configurable. It is possible to ship every single data operation, including all reads and writes. That makes it auditable which application or user made what change at what time, which is a very common requirement from the data privacy and compliance side.

However, I often find that enterprises make exceptions when the scale becomes impractical for the downstream systems. Imagine an Aerospike cluster handling tens of millions of operations per second in a very cost-effective way, and then shipping all those events to a downstream system that isn’t designed to scale, doesn’t scale linearly, can’t handle real-time ingestion, or isn’t cost-effective at scale. This comes down to a risk-based business decision, and in many cases organizations rely on compensating controls in the application and system access controls to achieve the auditability of data access that they require.
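A quick back-of-the-envelope calculation shows why the scale matters. The Python sketch below assumes 10 million operations per second and 200 bytes per audit event; the event size is purely a number I picked for illustration, so substitute your own measurements.

```python
def audit_volume(ops_per_second, bytes_per_event):
    """Estimate raw audit traffic if every data operation emits one event."""
    bytes_per_second = ops_per_second * bytes_per_event
    return {
        "gb_per_second": bytes_per_second / 1e9,
        "tb_per_day": bytes_per_second * 86_400 / 1e12,
    }


if __name__ == "__main__":
    # 200 bytes per event is an assumed figure, not a measured one.
    print(audit_volume(ops_per_second=10_000_000, bytes_per_event=200))
```

Under those assumptions the audit stream alone is about 2 GB per second, or roughly 172.8 TB per day, before any indexing or replication in the downstream platform.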
