Welcome back to another article. Here I will share how you can train and deploy a machine learning model inside a Docker container. Let's jump straight to the installation.

Docker Installation

To install Docker, I'm using an AWS instance, but you can use any OS you like. If you're doing the same, launch your instance and follow the steps below.

Note: If you are using RHEL8, you have to run the script below, because the default RHEL8 repositories don't include Docker. On other systems you can skip it.

cat <<EOF >> /etc/yum.repos.d/docker.repo

[docker]

baseurl=https://download.docker.com/linux/centos/7/x86_64/stable/

gpgcheck=0

EOF

Now install Docker with the command below.

yum install docker -y

On RHEL8 the command above doesn't work, so install Docker with the command below instead.

yum install docker-ce --nobest -y

Here docker-ce is the package name, and --nobest lets yum fall back to a version whose dependencies can be resolved instead of insisting on the newest one.

Let's check its version.

[root@dockerhost ~]# docker --version

Docker version 20.10.4, build d3cb89e
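If you ever need to check the installed version from a script rather than by eye, the version line can be parsed. This is only a minimal sketch: the function name parse_docker_version is my own, and it assumes the output keeps the "Docker version X.Y.Z" shape shown above.

```python
import re
from typing import Tuple


def parse_docker_version(output: str) -> Tuple[int, int, int]:
    """Extract (major, minor, patch) from the output of `docker --version`."""
    match = re.search(r"Docker version (\d+)\.(\d+)\.(\d+)", output)
    if match is None:
        raise ValueError(f"unrecognised version string: {output!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


# The sample string is the output shown in this article.
sample = "Docker version 20.10.4, build d3cb89e"
print(parse_docker_version(sample))
```

On a real host you could feed it the text returned by subprocess.run(["docker", "--version"], capture_output=True, text=True).stdout.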

Now start the Docker service, and enable it at boot, with the command below.

systemctl enable --now docker

Then check its status with the command below. The output should look something like this.

[root@dockerhost ~]# systemctl status docker

● docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2021-05-28 03:02:43 UTC; 5min ago

Docs: https://docs.docker.com

Process: 4168 ExecStartPre=/usr/libexec/docker/docker-setup-runtimes.sh (code=exited, status=0/SUCCESS)

Process: 4158 ExecStartPre=/bin/mkdir -p /run/docker (code=exited, status=0/SUCCESS)

Main PID: 4173 (dockerd)

Tasks: 7

Memory: 37.6M

CGroup: /system.slice/docker.service

└─4173 /usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --default-ulimit nofile=1024:4...

May 28 03:02:42 dockerhost dockerd[4173]: time="2021-05-28T03:02:42.986402644Z" level=info msg="scheme \"unix\" no...=grpc

May 28 03:02:42 dockerhost dockerd[4173]: time="2021-05-28T03:02:42.986690523Z" level=info msg="ccResolverWrapper:...=grpc

May 28 03:02:42 dockerhost dockerd[4173]: time="2021-05-28T03:02:42.986968399Z" level=info msg="ClientConn switchi...=grpc

May 28 03:02:43 dockerhost dockerd[4173]: time="2021-05-28T03:02:43.033773756Z" level=info msg="Loading containers...art."

May 28 03:02:43 dockerhost dockerd[4173]: time="2021-05-28T03:02:43.193735930Z" level=info msg="Default bridge (do...ress"

May 28 03:02:43 dockerhost dockerd[4173]: time="2021-05-28T03:02:43.246294570Z" level=info msg="Loading containers: done."

May 28 03:02:43 dockerhost dockerd[4173]: time="2021-05-28T03:02:43.260680982Z" level=info msg="Docker daemon" com....10.4

May 28 03:02:43 dockerhost dockerd[4173]: time="2021-05-28T03:02:43.261199789Z" level=info msg="Daemon has complet...tion"

May 28 03:02:43 dockerhost systemd[1]: Started Docker Application Container Engine.

May 28 03:02:43 dockerhost dockerd[4173]: time="2021-05-28T03:02:43.283719170Z" level=info msg="API listen on /run...sock"

Hint: Some lines were ellipsized, use -l to show in full.

Note: The Active line in the output above shows that Docker is in the running state.

Now we can move on to deploying the ML model. Let's pull a CentOS Docker image so that we can build our own image on top of it.

docker pull centos:latest

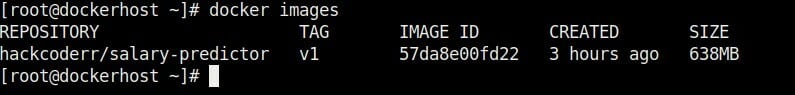

To list all your Docker images, run the command below.

[root@dockerhost ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

centos latest 300e315adb2f 5 months ago 209MB

Train an ML Model

There are two approaches to deploying an ML model inside a container. The first is to set everything up manually, and the second is to build your own image with your trained ML model. I am going with the second approach, so let's see how to deploy it.

Train your ML model in Jupyter or Colab. I have written a few lines of code to train a linear regression model, so you can get the idea from it and deploy any model you like.

import pandas as pd

import numpy as np

from sklearn.linear_model import LinearRegression

import joblib

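# '/content/salary.csv' is the Colab path; inside the container, point this at the copied salary.csv.

dataset = pd.read_csv('/content/salary.csv')

Y = dataset["Salary"]

X = dataset["YearsExperience"]

# scikit-learn expects 2-D arrays; -1 infers the row count instead of hard-coding 30.

X = np.array(X).reshape(-1, 1)

Y = np.array(Y).reshape(-1, 1)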

mind = LinearRegression()

mind.fit(X,Y)

print("Weight Is :",mind.coef_)

print("Bias Is : ",mind.intercept_)

joblib.dump(mind,"model_salary_predict.pk1")

In the code above, joblib.dump saves the trained model to a file so that the prediction application can load it later. Let's see how to use it.
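To make the weight, the bias, and the save/load round trip more concrete, here is a small dependency-free sketch. It is not the scikit-learn code from this article: it fits the line with the closed-form least-squares formula and uses the standard pickle module in place of joblib. The data, the file name params.pkl, and the function names are made up for illustration.

```python
import pickle
import tempfile
from pathlib import Path


def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (weight, bias)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    weight = sxy / sxx
    bias = mean_y - weight * mean_x
    return weight, bias


def predict(years_of_experience, params):
    """Apply the fitted line: salary = weight * years + bias."""
    return params["weight"] * years_of_experience + params["bias"]


# Hypothetical data, not taken from salary.csv: salary = 30000 + 5000 * years.
years = [1.0, 2.0, 3.0, 4.0]
salaries = [35000.0, 40000.0, 45000.0, 50000.0]

weight, bias = fit_line(years, salaries)

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "params.pkl"  # hypothetical file name
    # Save the learned parameters to a file (the role joblib.dump plays above).
    with path.open("wb") as fh:
        pickle.dump({"weight": weight, "bias": bias}, fh)
    # Load them back, as a separate prediction script would.
    with path.open("rb") as fh:
        params = pickle.load(fh)

print("Weight:", params["weight"], "Bias:", params["bias"])
print("Predicted salary for 5 years:", predict(5.0, params))
```

The point of the round trip is that the prediction side only needs the saved file, not the training data.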

Now create an interactive script that takes a value from the user and shows the prediction. I built a basic one that loads the model_salary_predict.pk1 file in which I saved the model.

import joblib

print("---------------------------------------------------------------------")

print(" Model:Salary Prediction from year of experience ")

print("---------------------------------------------------------------------")

exp = input("What is the experience: ")

exp = float(exp)

# Load the model saved by the training script.

mind = joblib.load('model_salary_predict.pk1')

# predict expects a 2-D array: one row with one feature.

salary = mind.predict([[exp]])

print("Salary will be:", salary)

Now the ML model is ready to use, so let's save these scripts with the .py extension.
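The prediction script passes whatever the user types straight to float, so a typo ends in a traceback. One possible way to guard the prompt is sketched below; parse_experience is a hypothetical helper of mine, not part of the original script, and the rule that experience must be non-negative is my assumption.

```python
import math


def parse_experience(text):
    """Convert the user's answer to a non-negative float, or raise ValueError."""
    try:
        value = float(text.strip())
    except ValueError:
        raise ValueError(f"not a number: {text!r}") from None
    if not math.isfinite(value):
        raise ValueError("experience must be a finite number")
    if value < 0:
        raise ValueError("experience cannot be negative")
    return value


# Try a few sample answers instead of reading from the keyboard.
for answer in ["3.5", "abc", "-2"]:
    try:
        print(answer, "->", parse_experience(answer))
    except ValueError as err:
        print(answer, "-> rejected:", err)
```

In the real script you would call parse_experience(input("What is the experience: ")) and ask again when it raises.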

Build a Dockerfile.

Before building the Docker image, copy your model's files to the Docker host. You can use the scp command for this.

sudo scp -i <key.pem> <files_location> username@ip:

After copying, the files should be in your home directory on the Docker host.
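Before building, it can help to confirm that every file the image needs actually arrived. The sketch below is one way to do that; the file names come from the COPY line of the Dockerfile later in this article, while missing_files and EXPECTED_FILES are hypothetical names of mine.

```python
import tempfile
from pathlib import Path

# File names taken from the COPY line of the Dockerfile in this article.
EXPECTED_FILES = ("linearregression.py", "salary.csv", "salary_predictor.py")


def missing_files(directory, names=EXPECTED_FILES):
    """Return the names from `names` that are not regular files in `directory`."""
    base = Path(directory)
    return [name for name in names if not (base / name).is_file()]


# Demo in a throwaway directory that contains only one of the three files.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "salary.csv").write_text("YearsExperience,Salary\n")
    print("Missing:", missing_files(tmp))
```

On the Docker host you would call missing_files(".") from the directory that holds the Dockerfile; an empty list means the build context is complete.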

Now it's time to create our own Dockerfile for the trained ML model. On the Docker host, create a file named Dockerfile with your favourite editor and write the code below.

FROM centos:latest

RUN yum install python3 vim ncurses -y && \

python3 -m pip install --upgrade --force-reinstall pip

RUN pip install pandas scikit-learn

RUN mkdir ml

COPY linearregression.py salary.csv salary_predictor.py ml/

After writing the Dockerfile, run the docker build command from the directory that contains it.

docker build -t <imagename>:<version> .

Once the build completes, the new image shows up in the docker images list, and everything is ready to use.
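The build step and the run step in the next section must agree on the same image name and version tag. If you script them, building the commands in one place keeps the two in sync. This is only a sketch: the helper names and the sample names salary-model, v1, and salary-app are invented for illustration, and nothing here actually calls Docker.

```python
import shlex


def build_command(image, version, context="."):
    """Argument list for building an image tagged image:version."""
    return ["docker", "build", "-t", f"{image}:{version}", context]


def run_command(container, image, version):
    """Argument list for starting an interactive container from image:version."""
    return ["docker", "run", "-it", "--name", container, f"{image}:{version}"]


# Hypothetical names, used only to show the resulting command lines.
print(shlex.join(build_command("salary-model", "v1")))
print(shlex.join(run_command("salary-app", "salary-model", "v1")))
```

On a machine with Docker installed, each list can be passed to subprocess.run(cmd, check=True).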

Launch a Docker Container

Now you can launch a container from the image you built previously.

docker run -it --name <containername> <imagename>:<version>

You are now inside your Docker container, so let's look at our ML model.

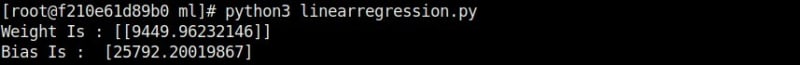

Go to the ml directory and run the training script with python3.

python3 linearregression.py

Now run salary_predictor.py to see the prediction.

Hopefully, you enjoyed it.

Conclusion

Here I have tried to show how you can deploy your machine learning model inside a Docker container and enjoy the power of containerization.

If you have any doubts, feel free to leave a comment in the comment section.
