This article covers what a memory leak is, what causes one, and how Python handles it. It also looks at the benefits of using Python where memory leaks are concerned.

Memory Leak:

A memory leak occurs when memory is allocated for variables, references, or objects and is never released. The unreleased memory accumulates over time, which is a particular problem for programs that never terminate, such as daemons and servers.

A running program obtains memory from RAM regardless of the language it is written in; how that memory is laid out depends on the computer and operating system architecture.

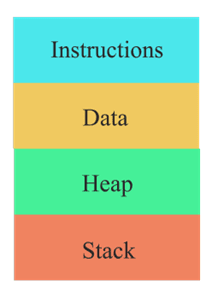

Program memory is divided into 4 segments, as shown in image 1:

• The first is Instructions, which holds the instructions produced from our code.

• The next is Data, which stores global and static variables.

• The third, and an important one, is the Stack, which is used at run time for function and method calls (including recursion) and for the local variables of those calls.

• The last is the Heap, which provides memory for dynamic allocations, such as those made with malloc or calloc, or when values are reallocated.

The Instructions and Data segments take up a fixed amount of memory from the start of execution, whereas the Stack and Heap segments grow and shrink as the program runs.

During a program's life cycle, memory is written and wiped frequently: functions are called, loops run, files are opened, pages are redirected, and much more.

In languages with manual memory management, such as C, a block of memory has one of 2 statuses: Consumed or Free. When we create a variable, a certain amount of memory is allocated and marked as consumed. Once the program no longer needs the variable, that memory should be released and marked as free again. Up to this point no memory is wasted. If, for whatever reason, the memory is never freed, the result is a memory leak.

To find and fix leaks, we need to measure the memory used by each piece of code, i.e., memory profiling.
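As a rough illustration of measuring the memory used by one piece of code, the sketch below uses the standard tracemalloc module (covered later in this article). The function build_list is a hypothetical workload invented for the example, and the exact numbers will differ between machines and Python versions.

```python
import tracemalloc


def build_list(n):
    # Hypothetical workload: allocate a list of n integers.
    return [i * 2 for i in range(n)]


tracemalloc.start()
before, _ = tracemalloc.get_traced_memory()   # (current, peak) in bytes
data = build_list(100_000)
after, peak = tracemalloc.get_traced_memory()
tracemalloc.stop()

allocated = after - before
print(f"allocated about {allocated / 1024:.1f} KiB, peak {peak / 1024:.1f} KiB")
```

Wrapping a suspicious call this way gives a first estimate of how much memory it holds on to once it returns.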

Memory leak in Python:

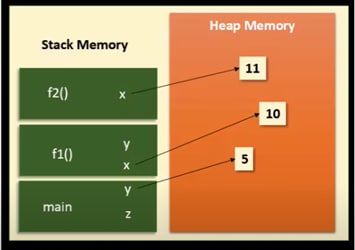

In Python everything is an object. Python uses 2 memory areas, heap memory and stack memory, to store functions, data, variables, objects, and so on.

Stack memory holds function calls and the names (references) of their variables, while heap memory holds the actual objects (e.g., integer values). Python has no mandatory main method: a script runs from the top of the file, and the code under if __name__ == '__main__': plays the role of the main method in this example.

    def f2(x):
        x = x + 1
        return x

    def f1(x):
        x = x*2
        y = f2(x)
        return y

    if __name__ == '__main__':
        y = 5
        z = f1(y)

The example can be represented diagrammatically.

Here, the main block starts executing first. A frame is allocated for it in stack space, along with the variables it defines. For y = 5, the object 5 is stored in heap memory and a reference to it is stored under the name y in stack space.

Next, main calls f1(), so another frame is created on the stack, and its variable x is bound to a new object (10) that lives in heap space. The same happens for f2(). In total 3 frames are created (main, f1() and f2()), each holding references to its objects. Note that both f1() and f2() have a variable x, but they do not overwrite each other's values because they live in different stack frames.
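The idea that a variable is only a name holding a reference to an object can be sketched as follows. This is a minimal illustration, not part of the original example: the names a and b are hypothetical, and a list is used instead of an integer so that the sharing is visible.

```python
a = [1, 2, 3]        # one list object is created; a refers to it
b = a                # b is a second name for the same object, not a copy
b.append(4)          # the change is visible through both names

same_object = a is b
print(same_object, a)   # True [1, 2, 3, 4]
```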
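To check that the two x variables really are independent, here is a small variation of the example. As an assumption made purely for illustration, f1() is changed to return its own x together with the result of f2().

```python
def f2(x):
    x = x + 1      # rebinds f2's own x only
    return x


def f1(x):
    x = x * 2
    y = f2(x)
    # f2 bound its own x to 11; the x in this frame is still 10.
    return x, y


result = f1(5)
print(result)   # (10, 11)
```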

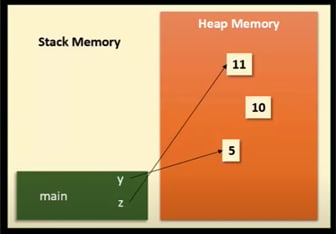

When f2() completes, its return value is handed over to y in f1(), the references held by f2()'s variables are removed, and its frame is removed from stack space.

The same happens when f1() returns. Note that at this point no variable refers to 10. If the memory for such an object were never reclaimed, that would be a memory leak.

Next we will see what Python does when no variable points to an object, and how such an object gets deleted.

Approaches to remove the memory leaks:

Let us take the same example. While the program executes, the interpreter keeps track of each object and the number of references to it, which can be pictured as a table.

| Object | Number of references |
|---|---|
| 5 | 1 |
| 10 | 1 |
| 11 | 1 |

Table 1.0: while the program executes all the functions.

| Object | Number of references |
|---|---|
| 5 | 1 |
| 10 | 1 |
| 11 | 1 |

Table 1.1: when f2() finishes.

| Object | Number of references |
|---|---|
| 5 | 1 |
| 10 | 0 (Dead Object) |
| 11 | 1 |

Table 1.2: when all functions (main, f1(), f2()) finish.

As tables 1.0 and 1.1 show, all 3 objects are referenced while f1() is still running. After f1() finishes, object 10 has 0 references, which means the program is no longer using it. Such an object is called a dead object.

Python has an internal mechanism for reclaiming dead objects. In CPython the main algorithm is reference counting: as soon as an object's reference count drops to 0, the object is removed from memory and its block is freed. A separate Garbage Collector (GC) runs periodically to find groups of objects that only reference each other in a cycle, which reference counting alone cannot free.
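Reference counting can be observed directly in CPython. The sketch below is an illustration under a few assumptions: Node is a hypothetical placeholder class (plain integers cannot be weakly referenced), sys.getrefcount() reports one extra reference for its own argument, and the immediate cleanup shown is CPython behaviour rather than a guarantee of the language.

```python
import sys
import weakref


class Node:
    """Hypothetical placeholder class used only for this demonstration."""


freed = []
obj = Node()
# Register a callback that runs when the object is reclaimed.
weakref.finalize(obj, freed.append, "Node freed")

alias = obj                                   # a second reference
count_with_alias = sys.getrefcount(obj)
del alias                                     # back to a single reference
count_without_alias = sys.getrefcount(obj)

del obj   # last reference gone: CPython reclaims the object right away
print(count_with_alias - count_without_alias, freed)
```

On CPython the difference between the two counts is 1, and the callback has already run by the time the last line prints.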

Code Snippets:

    import weakref

    def f2(x):
        x = x + 1
        return x

    def f1(x):
        x = x*2
        y = f2(x)
        return y

    if __name__ == '__main__':
        y = 5
        z = f1(y)
        print("Memory location", id(f1))
        # A weak reference does not keep the function object alive
        r = weakref.ref(f1)
        print(r)
        # Drop the only strong reference to the function object
        f1 = None
        print(r)

Output:

    Memory location 2072240612264
    <weakref at 0x000001E27B25B138; to 'function' at 0x000001E27B2A63A8 (f1)>
    <weakref at 0x000001E27B25B138; dead>

Here the code is modified to take a weak reference to the f1() function. Assigning None to f1 removes the last strong reference to the function, so the function object becomes a dead object and its memory is reclaimed automatically, as the second print of the weak reference shows.

In practice, however, it is not as easy as it seems. An object that is still referenced somewhere, even unintentionally, is never collected, and this leads to memory leaks in Python. A long-running Python program can eventually run out of memory as leaked objects accumulate. Finding memory leaks in Python and then curing them can be a challenge.

Thus, a memory leak occurs in Python when unused data piles up and the programmer forgets to delete it. To diagnose such leaks, we perform memory profiling, which measures the memory used by each part of the code.

Don't be put off by the term memory profiling; basic memory profiling is quite easy.
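A typical way for unused data to pile up is a module-level container that only ever grows. The sketch below is hypothetical (request_log, recent_requests and handle_request are names invented for illustration) and contrasts an unbounded list with a bounded collections.deque.

```python
from collections import deque

request_log = []                       # unbounded: grows for the life of the process
recent_requests = deque(maxlen=1000)   # bounded: the oldest entries are discarded


def handle_request(payload):
    # Hypothetical handler that records every request it sees.
    request_log.append(payload)
    recent_requests.append(payload)


for i in range(10_000):
    handle_request(f"request-{i}")

print(len(request_log), len(recent_requests))   # 10000 1000
```

In a server that never terminates, the list keeps every payload alive forever, while the deque caps memory use at the cost of forgetting old entries.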

The causes of memory leaks in Python:

- Large objects that linger because they are never released.

- Reference cycles in the code.

- Underlying libraries, which can leak memory themselves.
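The second cause can be reproduced in a few lines. In this sketch, which assumes CPython and uses a hypothetical Node class, two objects refer to each other, so their reference counts never reach 0 and they stay in memory until the cyclic garbage collector runs.

```python
import gc
import weakref


class Node:
    """Hypothetical class whose instances can point at each other."""

    def __init__(self):
        self.other = None


gc.disable()                 # keep the automatic collector out of the demo
a, b = Node(), Node()
a.other, b.other = b, a      # a -> b -> a: a reference cycle
probe = weakref.ref(a)       # lets us check whether the object still exists

del a, b
alive_after_del = probe() is not None       # the cycle keeps both objects alive

gc.collect()                                # run the cycle collector explicitly
alive_after_collect = probe() is not None
gc.enable()

print(alive_after_del, alive_after_collect)   # True False
```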

Debug:

First, you can debug memory usage with the built-in garbage collector module, gc. It can list all the objects known to the garbage collector, which helps you find out where memory is being used. You can then filter the objects according to their use. If an object is still referenced but no longer needed, you can delete the references to it to prevent a memory leak.

This lists the objects created during execution. However, the gc module has a limitation: it does not tell you where or how objects were allocated, so it does not help you identify the code responsible for allocating the objects that are leaking.
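A minimal sketch of this approach counts the objects returned by gc.get_objects() by type. The Session class and the sessions list are hypothetical stand-ins for whatever your application accumulates.

```python
import gc
from collections import Counter


class Session:
    """Hypothetical class standing in for an application object."""

    def __init__(self, user):
        self.user = user


sessions = [Session(f"user-{i}") for i in range(500)]

# Count every object the garbage collector tracks, grouped by type name.
type_counts = Counter(type(o).__name__ for o in gc.get_objects())
for name, count in type_counts.most_common(5):
    print(name, count)

session_count = type_counts["Session"]
print("Session objects alive:", session_count)
```

A type whose count keeps growing between two such checks is a good candidate for a leak, although, as noted above, this does not show where the objects were allocated.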

Tracemalloc:

One of the most useful tools for this problem is the built-in tracemalloc module, available since Python 3.4. It is well suited to hunting memory leaks in Python because it connects an object with the place where it was first allocated.

It records a stack trace for each allocation, which helps you find out which particular use of a common function is consuming memory in the program. Tracemalloc lets you track the memory usage of objects, so you can ultimately figure out the causes of memory leaks in Python. Once you know which objects are leaking, you can fix or clear them.

This in turn helps reduce the program's memory footprint, which is why Tracemalloc is considered a powerful memory tracking tool for dealing with memory leaks in Python.

To trace most memory blocks allocated by Python, the module should be started as early as possible by setting the PYTHONTRACEMALLOC environment variable to 1, or by using the -X tracemalloc command-line option. The tracemalloc.start() function can be called at runtime to start tracing Python memory allocations.
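One common way to use these traces is to take 2 snapshots and compare them, so that only the memory that grew in between is reported. The sketch below is an illustration, with leaky_store and do_work as hypothetical names for application code that keeps data it no longer needs.

```python
import tracemalloc

leaky_store = []   # hypothetical module-level list that is never cleared


def do_work():
    # Each call keeps about 100 KB alive by appending to the global list.
    leaky_store.append(bytearray(100_000))


tracemalloc.start()
first = tracemalloc.take_snapshot()
for _ in range(50):
    do_work()
second = tracemalloc.take_snapshot()
tracemalloc.stop()

# Differences are sorted so that the biggest change comes first.
growth = second.compare_to(first, "lineno")
for diff in growth[:3]:
    print(diff)

top = growth[0]
```

The first entry points at the line that allocated the most new memory between the two snapshots, here the bytearray allocation inside do_work().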

Examples:

1) Display the 10 files allocating the most memory

    import tracemalloc

    tracemalloc.start()

    # ... run your application ...

    snapshot = tracemalloc.take_snapshot()
    top_stats = snapshot.statistics('lineno')

    print("[ Top 10 ]")
    for stat in top_stats[:10]:
        print(stat)

Output:

    [ Top 10 ]
    <frozen importlib._bootstrap>:716: size=4855 KiB, count=39328, average=126 B
    <frozen importlib._bootstrap>:284: size=521 KiB, count=3199, average=167 B
    /usr/lib/python3.4/collections/__init__.py:368: size=244 KiB, count=2315, average=108 B
    /usr/lib/python3.4/unittest/case.py:381: size=185 KiB, count=779, average=243 B
    /usr/lib/python3.4/unittest/case.py:402: size=154 KiB, count=378, average=416 B
    /usr/lib/python3.4/abc.py:133: size=88.7 KiB, count=347, average=262 B
    <frozen importlib._bootstrap>:1446: size=70.4 KiB, count=911, average=79 B
    <frozen importlib._bootstrap>:1454: size=52.0 KiB, count=25, average=2131 B
    <string>:5: size=49.7 KiB, count=148, average=344 B
    /usr/lib/python3.4/sysconfig.py:411: size=48.0 KiB, count=1, average=48.0 KiB

2) Code to display the traceback of the biggest memory block:

    import tracemalloc

    # Store 25 frames
    tracemalloc.start(25)

    # ... run your application ...

    snapshot = tracemalloc.take_snapshot()
    top_stats = snapshot.statistics('traceback')

    # pick the biggest memory block
    stat = top_stats[0]
    print("%s memory blocks: %.1f KiB" % (stat.count, stat.size / 1024))
    for line in stat.traceback.format():
        print(line)

Output:

    903 memory blocks: 870.1 KiB
      File "<frozen importlib._bootstrap>", line 716
      File "<frozen importlib._bootstrap>", line 1036
      File "<frozen importlib._bootstrap>", line 934
      File "<frozen importlib._bootstrap>", line 1068
      File "<frozen importlib._bootstrap>", line 619
      File "<frozen importlib._bootstrap>", line 1581
      File "<frozen importlib._bootstrap>", line 1614
      File "/usr/lib/python3.4/doctest.py", line 101
        import pdb
      File "<frozen importlib._bootstrap>", line 284
      File "<frozen importlib._bootstrap>", line 938
      File "<frozen importlib._bootstrap>", line 1068
      File "<frozen importlib._bootstrap>", line 619
      File "<frozen importlib._bootstrap>", line 1581
      File "<frozen importlib._bootstrap>", line 1614
      File "/usr/lib/python3.4/test/support/__init__.py", line 1728
        import doctest
      File "/usr/lib/python3.4/test/test_pickletools.py", line 21
        support.run_doctest(pickletools)
      File "/usr/lib/python3.4/test/regrtest.py", line 1276
        test_runner()
      File "/usr/lib/python3.4/test/regrtest.py", line 976
        display_failure=not verbose)
      File "/usr/lib/python3.4/test/regrtest.py", line 761
        match_tests=ns.match_tests)
      File "/usr/lib/python3.4/test/regrtest.py", line 1563
        main()
      File "/usr/lib/python3.4/test/__main__.py", line 3
        regrtest.main_in_temp_cwd()
      File "/usr/lib/python3.4/runpy.py", line 73
        exec(code, run_globals)
      File "/usr/lib/python3.4/runpy.py", line 160
        "__main__", fname, loader, pkg_name)

Example of output of the Python test suite (traceback limited to 25 frames)

We can see that the most memory was allocated in the importlib module to load data (bytecode and constants) from modules: 870.1 KiB. The traceback is where the importlib loaded data most recently: on the import pdb line of the doctest module. The traceback may change if a new module is loaded.

Pros & Cons:

Pros:

- Optimal memory utilization, since unused objects are reclaimed automatically.

- Dead objects are freed quickly, as soon as their reference count reaches 0.

Cons:

- Execution can slow down due to frequent invocation of the GC.

Conclusion:

Python is one of the most popular object-oriented programming languages in the world. Many large companies, such as Google and YouTube, use it in their projects. It is known for its efficiency, but like programs in any other language, Python programs are subject to memory leaks. CPython, the reference implementation of Python, takes care of allocating and deallocating memory.

But sometimes objects are never released even though they have not been needed for a long time. This is where memory leaks occur in Python, and resolving them is a challenge for programmers and developers. The approaches described above, memory profiling with the gc module and Tracemalloc, are ways to deal with this issue.

References:

https://docs.python.org/3/library/tracemalloc.html

https://benbernardblog.com/tracking-down-a-freaky-python-memory-leak/

https://stackify.com/top-5-python-memory-profilers/

https://docs.python.org/3/using/cmdline.html#envvar-PYTHONTRACEMALLOC

https://statanalytica.com/blog/memory-leaks-in-python/

