This article aims to gather the known problems with the DNS protocol and architecture, and to take stock of their current level of security.

I’m not going to explain what the DNS protocol and architecture are; plenty of sites already do that very well. Instead, I focus on the points that matter for this article.

Initially, the DNS architecture and protocol made it possible to translate a human-readable name into an Internet (IP) address, and vice versa through reverse lookups in the ARPA domain (in-addr.arpa). Before that, the /etc/hosts file was used for this translation. This technique remains the safest from a security point of view, but it is completely unmanageable operationally, especially with the growth of the Web.

Over time, DNS has become something of a “center of trust”, because verification operations are now performed on the basis of domain names. With SPF, the rest of the Web knows which servers are allowed to send email for my domain; Google, like Let’s Encrypt with ACME, checks that you hold a domain by looking at records in it. More generally, we now attach cryptographic keys, hashes and routing information to a domain’s zone. This is a good thing, and it is worth pointing out, because more and more is being asked of DNS without giving it the security features it needs to serve such important data.
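To make the “center of trust” point concrete, here is a minimal sketch of how an ACME DNS-01 challenge (RFC 8555) turns domain control into a DNS record: the client publishes base64url(SHA-256(token + "." + account key thumbprint)) as a TXT record at _acme-challenge.<domain>. The token and thumbprint below are placeholders, and deriving the real thumbprint (RFC 7638) from an account key is left out.

```python
import base64
import hashlib


def b64url(data: bytes) -> str:
    """Base64url without padding, as used throughout ACME (RFC 8555)."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def dns01_txt_value(token: str, account_thumbprint: str) -> str:
    """TXT value to publish at _acme-challenge.<domain> for a DNS-01 challenge."""
    key_authorization = f"{token}.{account_thumbprint}"
    digest = hashlib.sha256(key_authorization.encode("ascii")).digest()
    return b64url(digest)


if __name__ == "__main__":
    # Hypothetical values: a real token comes from the CA, and the
    # thumbprint is derived from the ACME account's public key.
    token = "evaGxfADs6pSRb2LAv9IZf17Dt3juxGJ-PCt92wr-oA"
    thumbprint = "NzbLsXh8uDCcd-6MNwXF4W_7noWXFZAfHkxZsRGC9Xs"
    print("_acme-challenge.example.com. TXT", dns01_txt_value(token, thumbprint))
```

Whoever can write that TXT record gets the certificate, which is exactly why the integrity of DNS answers matters so much here.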

Local Interception

This is the first real DNS problem. Classic DNS runs over UDP, a connectionless transport, and its messages are neither encrypted nor signed. This makes the protocol vulnerable to man-in-the-middle (MITM) interception, whether through a proxy or simply by sniffing traffic.
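To see why an observer on the path learns everything, here is a minimal sketch that builds a standard DNS query by hand in the RFC 1035 wire format. The queried name travels as plain length-prefixed labels, and nothing in the message lets the client authenticate the answer. The transaction ID is an arbitrary example value, and sending the packet over UDP port 53 is left out.

```python
import struct


def encode_qname(name: str) -> bytes:
    """Encode a domain name as DNS labels (length-prefixed, root-terminated)."""
    out = b""
    for label in name.rstrip(".").split("."):
        raw = label.encode("ascii")
        out += bytes([len(raw)]) + raw
    return out + b"\x00"


def build_query(name: str, qtype: int = 1, txid: int = 0x1234) -> bytes:
    """Minimal DNS query: 12-byte header plus one question (type A, class IN)."""
    flags = 0x0100  # RD (recursion desired); no encryption, no signature fields
    header = struct.pack("!HHHHHH", txid, flags, 1, 0, 0, 0)
    question = encode_qname(name) + struct.pack("!HH", qtype, 1)
    return header + question


if __name__ == "__main__":
    packet = build_query("mail.example.com")
    # These exact bytes, name included, are visible to anyone on the path.
    print(packet)
```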

An attacker can read and modify the data without either the user or the authoritative server noticing. The only requirement is to already have access to the network path.

From this position, an attacker can redirect the answers to DNS queries and thereby hijack websites, mail servers, RDP endpoints and so on. It is, of course, also possible to profile users from the names they look up.

Remote Interception

Remote interception consists of hijacking the traffic of a domain such as example.com by injecting false data into the cache of resolvers, which then serve it to their users. It combines two weaknesses in DNS.

The first comes from the use of UDP, a connectionless protocol that lets an attacker spoof a DNS response carrying false data. The second is caching itself: if bad data can get into a resolver’s cache, the resolver will serve that bad data to all of its clients.
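A back-of-the-envelope sketch gives a feel for an off-path attacker’s odds. Over UDP, a resolver accepts a response that matches the 16-bit transaction ID (and, on resolvers that randomize it, the source port). Assuming each forged packet is an independent uniform guess, the chance of success after n packets is 1 - (1 - 1/space)^n. The port-range size below is an assumption; real resolvers use different ranges.

```python
TXID_SPACE = 2 ** 16   # 16-bit DNS transaction ID
PORT_SPACE = 64_000    # assumed number of usable randomized source ports


def spoof_success_probability(n_packets: int, space: int) -> float:
    """P(at least one forged reply matches), n independent uniform guesses."""
    return 1.0 - (1.0 - 1.0 / space) ** n_packets


if __name__ == "__main__":
    for n in (1_000, 65_536):
        p_txid = spoof_success_probability(n, TXID_SPACE)
        p_both = spoof_success_probability(n, TXID_SPACE * PORT_SPACE)
        print(f"{n:>6} packets: TXID only {p_txid:.3f}, TXID+port {p_both:.2e}")
```

With the transaction ID alone, a few tens of thousands of forged packets give a good chance of success; source port randomization raises the bar considerably but does not authenticate the answer.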

This is a particularly serious problem, because the interception can be carried out without being on the network path. It also remains a live problem: DNSSEC, which addresses it, is struggling to gain adoption, partly because many DNS installations are simply forgotten.

This publication describes DNS attack vectors in detail.

DDoS

This is probably the most common attack. It is important to understand that, here, the DNS architecture and protocol are hijacked to attack a third-party target: DNS is not the victim, it becomes the weapon. This is known as DNS amplification.

There are several ways to conduct a DNS amplification attack, but the general idea is that, in some cases, name servers answer short request packets with very long ones. A 60-byte request can sometimes trigger a response of more than 3,000 bytes, a gain factor of more than 50. The response is sent to the victim’s IP address because the attacker spoofs the source address of the request.
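The gain factor is simple arithmetic. The sketch below applies it to the figures above and to an assumed attacker upload rate (100 Mbit/s is an arbitrary example) to show how quickly reflected traffic adds up. It ignores IP/UDP header overhead and assumes every spoofed query is answered.

```python
def amplification_factor(request_bytes: int, response_bytes: int) -> float:
    """Ratio of reflected traffic to the traffic the attacker must send."""
    return response_bytes / request_bytes


def victim_traffic_mbps(attacker_mbps: float, factor: float) -> float:
    """Traffic reaching the victim if every spoofed request is answered."""
    return attacker_mbps * factor


if __name__ == "__main__":
    factor = amplification_factor(60, 3000)  # figures from the text
    print(f"gain factor: {factor:.0f}x")
    print(f"100 Mbit/s of queries -> {victim_traffic_mbps(100, factor):.0f} Mbit/s at the victim")
```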

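Falling back to TCP helps limit this type of attack, because the TCP handshake makes source address spoofing impractical. When an answer does not fit in the UDP payload size the client accepts (512 bytes by default, or the larger buffer size the client advertises with the EDNS0 extension), the server sets the TC (truncation) bit and the client retries over TCP.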
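The truncation decision can be sketched as follows, assuming a server that only compares the size of its answer with the client’s limit (real servers also apply other policies, such as response rate limiting). `must_truncate` and `is_truncated` are hypothetical helper names; the 512-byte default and the TC bit position come from the DNS specifications.

```python
import struct
from typing import Optional

DEFAULT_UDP_LIMIT = 512  # limit when the client sends no EDNS0 OPT record
TC_FLAG = 0x0200         # truncation bit in the DNS header flags field


def must_truncate(response_len: int, edns_udp_size: Optional[int]) -> bool:
    """Server side: True if the answer does not fit in the client's UDP size."""
    limit = edns_udp_size if edns_udp_size is not None else DEFAULT_UDP_LIMIT
    limit = max(limit, DEFAULT_UDP_LIMIT)  # advertised values below 512 count as 512
    return response_len > limit


def is_truncated(packet: bytes) -> bool:
    """Client side: check the TC bit to decide whether to retry over TCP."""
    (flags,) = struct.unpack("!H", packet[2:4])
    return bool(flags & TC_FLAG)
```

Note the trade-off: the TCP fallback only protects against amplification when large answers are actually pushed to TCP; a client advertising a large EDNS0 buffer lets big answers travel over UDP.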

This type of attack is particularly hard to trace, because the source addresses are spoofed, and its true origin cannot be established with certainty without access to large amounts of traffic data.

DNSSEC

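Unfortunately, DNSSEC complicates this picture, since signed responses are necessarily larger than unsigned ones, which makes them attractive for amplification. DNSSEC therefore depends on EDNS0: the client uses it to request DNSSEC records and to advertise a UDP buffer larger than 512 bytes, and answers that still do not fit are truncated and retried over TCP.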
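For reference, here is a minimal sketch of the EDNS0 OPT pseudo-record that a validating resolver appends to its query (RFC 6891, with the DO “DNSSEC OK” bit from RFC 3225). It advertises a UDP payload size above 512 bytes and asks for DNSSEC records. The default of 1232 bytes is a commonly recommended value, used here as an assumption; the record goes in the additional section of a query whose ARCOUNT is 1.

```python
import struct

OPT_TYPE = 41    # EDNS0 OPT pseudo-RR type
DO_BIT = 0x8000  # "DNSSEC OK" flag inside the OPT TTL field


def build_opt_record(udp_payload_size: int = 1232, dnssec_ok: bool = True) -> bytes:
    """EDNS0 OPT record: root name, TYPE=41, CLASS=UDP payload size,
    TTL = extended RCODE (8 bits) | version (8 bits) | DO + Z (16 bits), RDLEN=0."""
    ttl = DO_BIT if dnssec_ok else 0  # extended RCODE 0, EDNS version 0
    return struct.pack("!BHHIH", 0, OPT_TYPE, udp_payload_size, ttl, 0)


def parse_opt_record(rr: bytes) -> dict:
    """Decode the fields written by build_opt_record."""
    _, rtype, size, ttl, rdlen = struct.unpack("!BHHIH", rr[:11])
    return {"type": rtype, "udp_size": size, "version": (ttl >> 16) & 0xFF,
            "dnssec_ok": bool(ttl & DO_BIT), "rdlen": rdlen}
```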

DNSSEC itself is not designed to protect against interception at the transport layer (UDP/TCP), as we will see next.

DNSSEC: The Chain Of Trust

Although DNSSEC does prevent blind attacks such as cache poisoning, it can still be attacked from a MITM position, where it is easier to reconstruct the chain of trust from its origin.

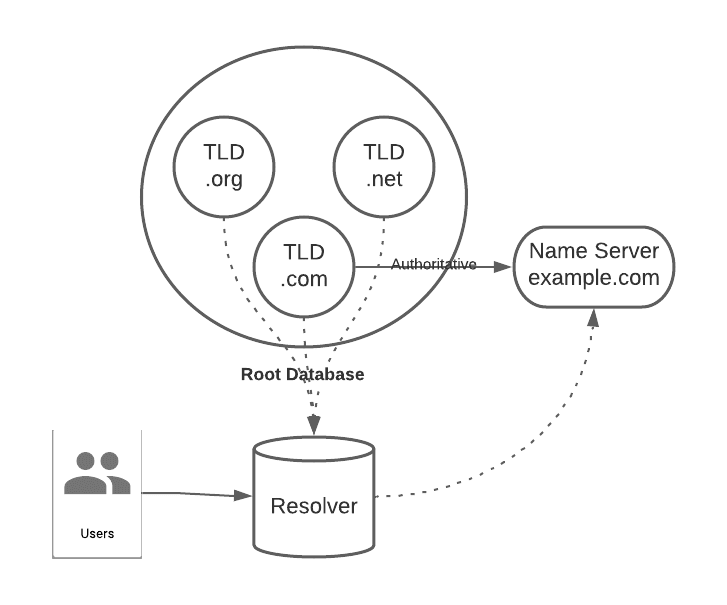

DNSSEC does not attach any proof of trust to the root information. In practice, intercepting example.com directly would be hard, but it is easier to intercept the .com TLD (Top Level Domain) and rebuild the example.com chain from there.
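Each link in the chain of trust works the same way: the parent zone publishes a DS record containing a hash of the child’s key and signs it with its own key. The sketch below computes that hash as defined in RFC 4034 (digest type 2, SHA-256) and checks one link. The key bytes are placeholders, not a real key, and verifying the parent’s signature over the DS record is left out. It shows why a link is only as trustworthy as the parent that vouches for it.

```python
import hashlib
import struct


def wire_name(name: str) -> bytes:
    """Canonical (lowercase) DNS wire format of a domain name."""
    out = b""
    for label in name.lower().rstrip(".").split("."):
        if label:
            out += bytes([len(label)]) + label.encode("ascii")
    return out + b"\x00"


def dnskey_rdata(flags: int, algorithm: int, public_key: bytes) -> bytes:
    """DNSKEY RDATA: flags | protocol (always 3) | algorithm | public key."""
    return struct.pack("!HBB", flags, 3, algorithm) + public_key


def key_tag(rdata: bytes) -> int:
    """Key tag as in RFC 4034, Appendix B (also carried in the DS record)."""
    ac = 0
    for i, byte in enumerate(rdata):
        ac += byte if i & 1 else byte << 8
    ac += (ac >> 16) & 0xFFFF
    return ac & 0xFFFF


def ds_digest(owner: str, rdata: bytes) -> bytes:
    """DS digest type 2: SHA-256(owner name | DNSKEY RDATA)."""
    return hashlib.sha256(wire_name(owner) + rdata).digest()


def child_key_matches_parent(owner: str, rdata: bytes, parent_ds: bytes) -> bool:
    """One link of the chain: does the child's key match the parent's DS?"""
    return ds_digest(owner, rdata) == parent_ds
```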

In my own jargon, I call this a rebuild attack: the idea is to rebuild the whole chain rather than a single link. The problem comes from the fact that the client has no point of trust, which I call the Trust Boot. Typically this is a public key hardcoded in the client software, acting as a first block or genesis block. This key signs the first ancestors, which provides an initial point of trust and removes the possibility of rebuilding the chain.
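The rebuild attack and the Trust Boot can be illustrated with a deliberately simplified toy model. Each zone’s “key” is a hash-derived byte string, and each parent simply stores a hash of its child’s key, standing in for a signed DS record; real DNSSEC signatures are omitted. `Zone`, `validate_chain` and `build_chain` are hypothetical names for this illustration only.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Zone:
    name: str
    key: bytes                     # toy stand-in for the zone's public key
    child_digest: Optional[bytes]  # toy stand-in for a signed DS of the child


def digest(key: bytes) -> bytes:
    return hashlib.sha256(key).digest()


def validate_chain(chain: List[Zone], trust_boot: Optional[bytes]) -> bool:
    """Walk from the top zone down. Without a pinned trust_boot, the first key
    presented is accepted as-is, so a fully rebuilt chain validates."""
    if trust_boot is not None and digest(chain[0].key) != trust_boot:
        return False
    for parent, child in zip(chain, chain[1:]):
        if parent.child_digest != digest(child.key):
            return False
    return True


def build_chain(names: List[str], seed: bytes) -> List[Zone]:
    """Build an internally consistent chain; an attacker can do this too."""
    keys = [hashlib.sha256(seed + n.encode()).digest() for n in names]
    return [Zone(n, k, digest(keys[i + 1]) if i + 1 < len(keys) else None)
            for i, (n, k) in enumerate(zip(names, keys))]
```

Usage: with `real = build_chain([".", "com.", "example.com."], b"real")` and an attacker-built `fake` chain from another seed, `validate_chain(fake, None)` succeeds, while `validate_chain(fake, digest(real[0].key))` fails because the first key is pinned.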

What I describe sounds simple, but in practice these operations are quite complex to manage. Bitcoin, for example, is technically (and cryptographically) reliable on an unreliable network precisely because everyone trusts the same genesis block.

I don’t know why developers and researchers did not build a Trust Boot into DNSSEC, but there could be several reasons:

- Technically complicated to manage (especially in case of compromise): DNS is not a (network) blockchain.

- Complex to implement: some parties will ask to have their own public key enrolled.

- Political problem: it gives great power to whoever controls the genesis block.

DNSSEC: Replay & timing issues

A replay attack consists of reusing data that was legitimately issued and signed by the authority. Wikipedia has an interesting article on the subject. In DNSSEC, the zone is signed by the person who edits it, and that private key is (usually) not stored on the name server. As a result, the server cannot provide an Authoritative Time Stapling, which would (roughly) tell the network which version of the zone to use, because that statement would have to be signed with the editor’s key. There are articles on the Web about countermeasures against replay.

TLS/SSL uses a similar approach in its revocation mechanism, OCSP Stapling, in which the server attaches a recent, CA-signed statement that its certificate has not been revoked. A major advantage of this technique is user privacy: the client no longer needs to contact the CA’s OCSP responder, which would otherwise learn which sites it visits.

On the DNS side, leaving the zone editor’s key on the servers would expose the zones to too much risk of compromise, and once stolen, the key could be used to produce forged zones that cannot be distinguished from genuine ones.

So the zone is signed once, ahead of time, and nobody can prove its freshness, that is, which version of the zone is the current one.
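The replay window can be made concrete. Every DNSSEC signature (RRSIG) carries an inception and an expiration time, and a validator checks that the current time falls between them. The sketch below uses addresses from the documentation ranges and arbitrary timestamps to show two signed versions of the same record both passing that check, so the older one can be replayed until it expires. `SignedAnswer` and `signature_time_ok` are hypothetical names; real validators also verify the signature itself and compare times with RFC 1982 serial-number arithmetic.

```python
from dataclasses import dataclass


@dataclass
class SignedAnswer:
    data: str        # e.g. an A record value
    inception: int   # RRSIG inception, seconds since the Unix epoch
    expiration: int  # RRSIG expiration, seconds since the Unix epoch


def signature_time_ok(answer: SignedAnswer, now: int) -> bool:
    """Validity-window check (plain comparison, a simplification)."""
    return answer.inception <= now <= answer.expiration


if __name__ == "__main__":
    day = 86_400
    t0 = 1_700_000_000  # arbitrary example timestamp
    old = SignedAnswer("192.0.2.10", t0, t0 + 30 * day)               # superseded data
    new = SignedAnswer("198.51.100.20", t0 + 5 * day, t0 + 35 * day)  # current data
    now = t0 + 10 * day
    # Both pass the time check: nothing tells the validator which one is current.
    print(signature_time_ok(old, now), signature_time_ok(new, now))  # prints: True True
```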

Exploiting this vulnerability is nevertheless complex, because several conditions must line up for it to be useful. First, the zones must be signed and validated. Then, unless you steal the editor’s key, you have to record every version of the zone served by the server and, from a MITM position, present the one that suits you. And it is not easy to obtain a version of the zone that is actually useful for an attack.

I am not sure this attack is very practical, so I classify this vulnerability as theoretical or minor. From a purely cryptographic point of view, however, it is a break in the chain.

Architecture

The general architecture of DNS is aging, mainly because it has evolved very little technically while needs and capacities keep growing. The increasing power of computers and Internet connections makes the architecture less secure: a DDoS directed at the root servers, for example, can significantly affect users’ ability to use DNS, and therefore the Internet.

Should I use DNSSEC?

Yes, and immediately: on its own it is a very effective solution to cache poisoning, a particularly vicious problem in DNS. However, these DNS improvements bring no real breakthrough against local interception, even though they handle remote interception quite well.

Finally, DNS suffers from its own deployed base. A large number of DNS servers on the Internet are badly out of date, with problems ranging from missing features (such as DNSSEC) to unpatched security bugs.

A radical solution is needed.
